JobServer.NET for Windows Administrators Guide

“JobServer.NET” and “JobServer.NET for Windows” are trademarks of XCENT

JobServer.NET for Windows Copyright 2008-2026 XCENT

Table of Contents

- Table of Contents

- Introduction

- JobServer.NET Platform

- Getting Started with JobServer.NET

- Job Definitions

- Job Triggers

- Job Steps

- Job Modules

- Connections

- Global Variables

- Job Monitor

- Job History and Log

- Managing Multiple Job Servers

- Pre-Installed Triggers

- JobServer.NET Installed Modules

- [ActiveDirectory] Find Users

- [Email] Send Message

- [Excel] Template Merge

- [Facebook] Post Message

- [Files] Are Identical

- [Files] Checksum

- [Files] Compress

- [Files] Contains

- [Files] Copy/Move

- [Files] Decode Base64

- [Files] Decompress

- [Files] Delete

- [Files] Delimiter Convert

- [Files] Download Zone Info

- [Files] Encode Base64

- [Files] Find

- [Files] Find And Replace

- [Files] Generate Filename

- [Files] Join

- [Files] Mutex Lock

- [Files] Mutex Unlock

- [Files] Pick Subset

- [Files] Read

- [Files] Render FileList as Html

- [Files] Set Attributes

- [Files] Split

- [Files] Text Convert

- [Files] Touch

- [Files] Validate Checksum

- [Files] Write

- [Folders] Check Size

- [Folders] Create

- [Folders] Delete

- [FTP] Copy/Move from Remote

- [FTP] Copy/Move to Remote

- [FTP] Delete from Remote

- [FTP] Find on Remote

- [Hyper-V] Action

- [Hyper-V] Checkpoint

- [IIS] Action

- [Images] Constrain

- [Images] Contact Sheet

- [Images] Encode

- [Images] Overlay

- [Images] Quantize

- [Images] Rotate

- [JSON] Minify

- [JSON] Prettify

- [JSON] Render Html

- [LogFiles] Archive

- [Logic] Branch

- [Logic] Compare And Branch

- [Logic] Contains

- [Logic] Stop

- [M365] Extract Attachments

- [Machine] Activate Power Plan

- [Machine] Hardware Inventory

- [Machine] Purge Downloads

- [Machine] Service Control

- [Machine] Shutdown/Restart

- [Network] Http Action

- [Network] Http Ping

- [Network] VPN

- [Parameter] Set

- [PDF] Merge

- [PDF] Password Maintenance

- [Perl] Execute

- [PGP] Decrypt

- [PGP] Encrypt

- [Python] Execute

- [Shell] Command Line

- [Shell] PowerShell Command

- [Shell] Sleep

- [Shell] Sleep Random

- [Slack] Send Message

- [SMS] Send Message

- [SQL Server] Agent

- [SQL Server] Create Tables

- [SQL Server] Execute

- [SQL Server] Export

- [SQL Server] Get Value

- [SQL Server] Import

- [SQL Server] SSAS Execute

- [Teams] Copy/Move From Remote

- [Teams] Copy/Move To Remote

- [Teams] Find Files

- [Teams] Send Message

- [Telegram] Send Message

- [Twitter] Send Message

- Custom Modules

- Requirements for Creating Custom Modules

- Creating a Custom Module with a Visual Studio Project

- Creating a New Custom Module

- Modifying an Existing Assembly to Become a Custom Module

- Installing a Custom Module in JobServer.NET

- Finding Out More on Creating Custom Modules

- Introducing FileGroups When Using FileLists

- Additional References

- Advanced Configuration

Introduction

JobServer.NET is a Business Process Automation (BPA) platform built to provide a high degree of functionality from the moment of installation, while also serving as a highly extensible framework that can be customized to align with your business practices and workflows. Starting with a simple pipeline, existing manual processes can become fully automated in minutes. The platform provides a variety of built-in triggers and modules that can be layered and combined to automate processes of varying complexity, creating robust solutions to workflow issues. These processes can be entirely internal to your operations, or they can extend outward to reach your clients, vendors, or operational partners directly.

The extensibility of the platform offers a direct way to take this existing functionality to the next level by implementing modules that delve deeper into areas that may be unique to your business requirements. It also provides a path for future modules and for extending into systems that use technologies such as Robotic Process Automation (RPA), RPAAI, and Hyperautomation.

JobServer.NET Platform

The JobServer.NET platform consists of multiple components that work together as a single cohesive system, providing a feature-rich environment for creating, managing, monitoring, and scheduling jobs. An individual job can range from a simple one-step process to a comprehensive set of steps working in tandem to meet a more involved set of requirements.

Some examples of jobs that JobServer can perform out of the box, without any custom programming:

- Twice a week, copy files from a local folder to an FTP server.

- Every night at 3:00 AM, look for new log files and compress them.

- Watch a folder, and if it contains any files over a certain age, compress them to an archive folder.

- Watch a folder, and if any new image files appear, resize and/or rotate them.

- Monitor a disk drive or network volume and generate a warning email if free space dips below 15%.

- Four times a day, only on weekdays, execute a SQL command.

- Send a notification if a remote server cannot be pinged.
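The disk-space example above comes down to a simple ratio check. The following minimal Python sketch shows that check. It is an illustration only and is not how JobServer.NET implements it. The function names `is_low_on_space` and `check_volume` are hypothetical, and the 15% default mirrors the example.

```python
import shutil


def is_low_on_space(total_bytes: int, free_bytes: int, threshold: float = 0.15) -> bool:
    """Return True when free space is strictly below `threshold` (a fraction of total)."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return free_bytes / total_bytes < threshold


def check_volume(path: str, threshold: float = 0.15) -> bool:
    """Hypothetical helper: read usage of the volume containing `path` and apply the check."""
    usage = shutil.disk_usage(path)  # named tuple with total, used and free (bytes)
    return is_low_on_space(usage.total, usage.free, threshold)


# A 100 GB volume with 10 GB free is below 15%, so a warning would be sent.
low = is_low_on_space(100 * 1024**3, 10 * 1024**3)
```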

The major components that make up the JobServer.NET platform are described below to provide an overview of their respective roles.

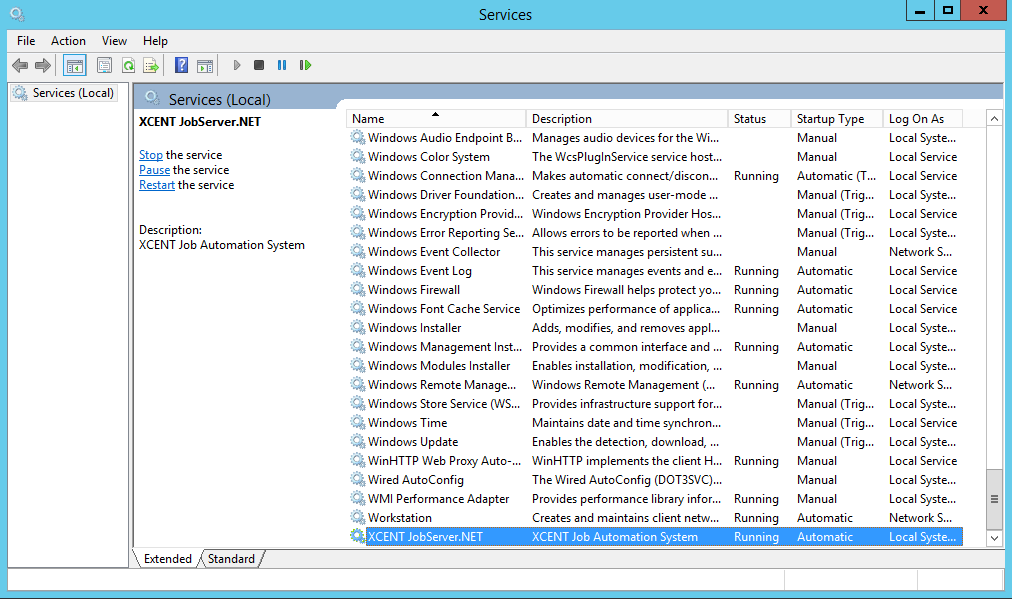

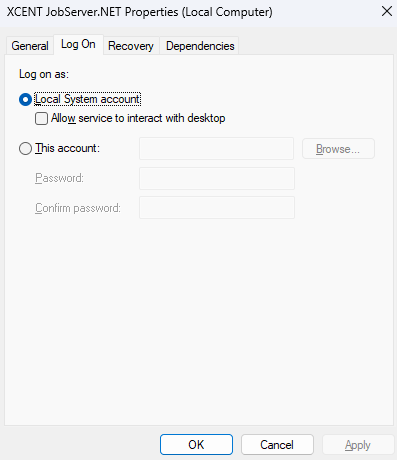

JobServer.NET Service

The JobServer.NET Service is the main component of the entire system. It is the scheduling and application platform that everything else communicates with. This runs as a standard Windows service which you will see listed as XCENT JobServer.NET. Once installed, the JobServer.NET service should always be running and under normal conditions should only ever be stopped when the Operating System is shutting down or restarting, or when an update to JobServer.NET is being installed.

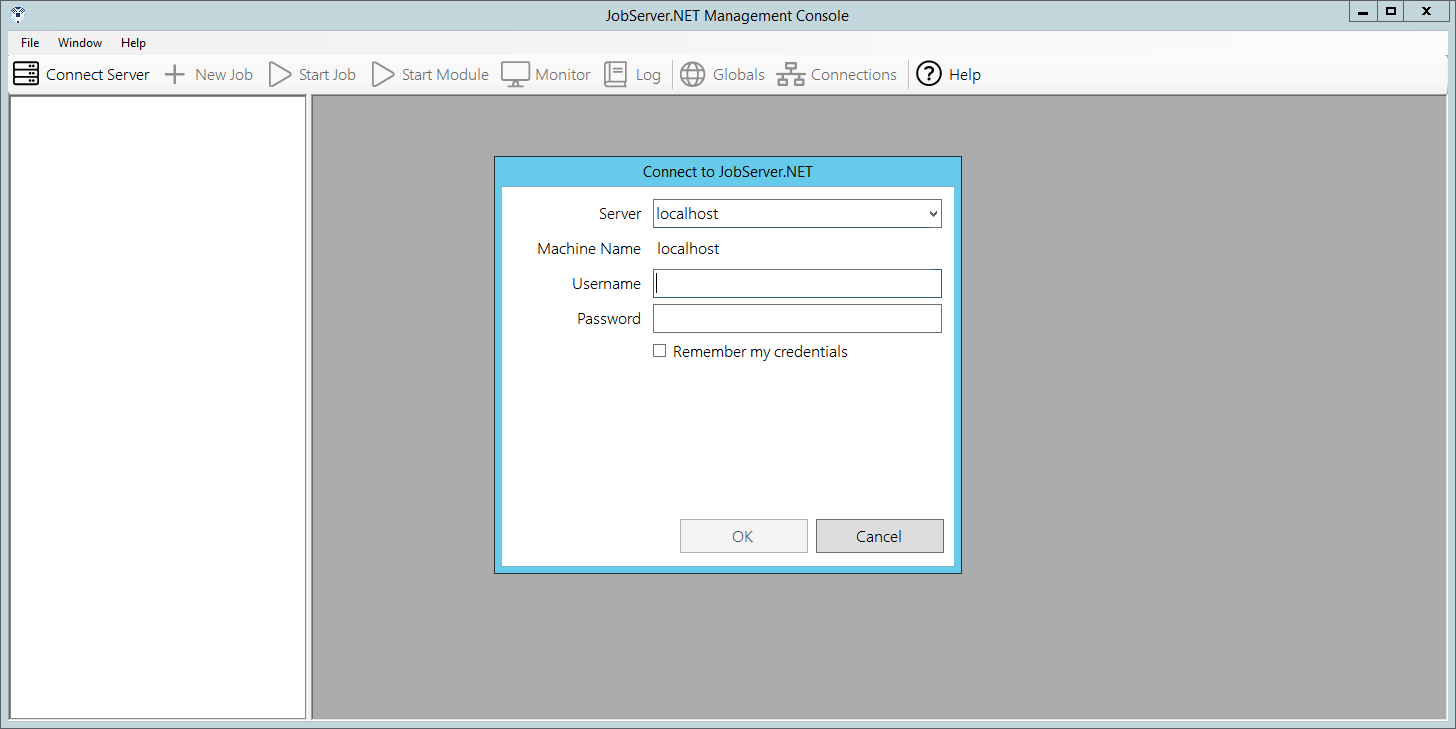

JobServer.NET Manager

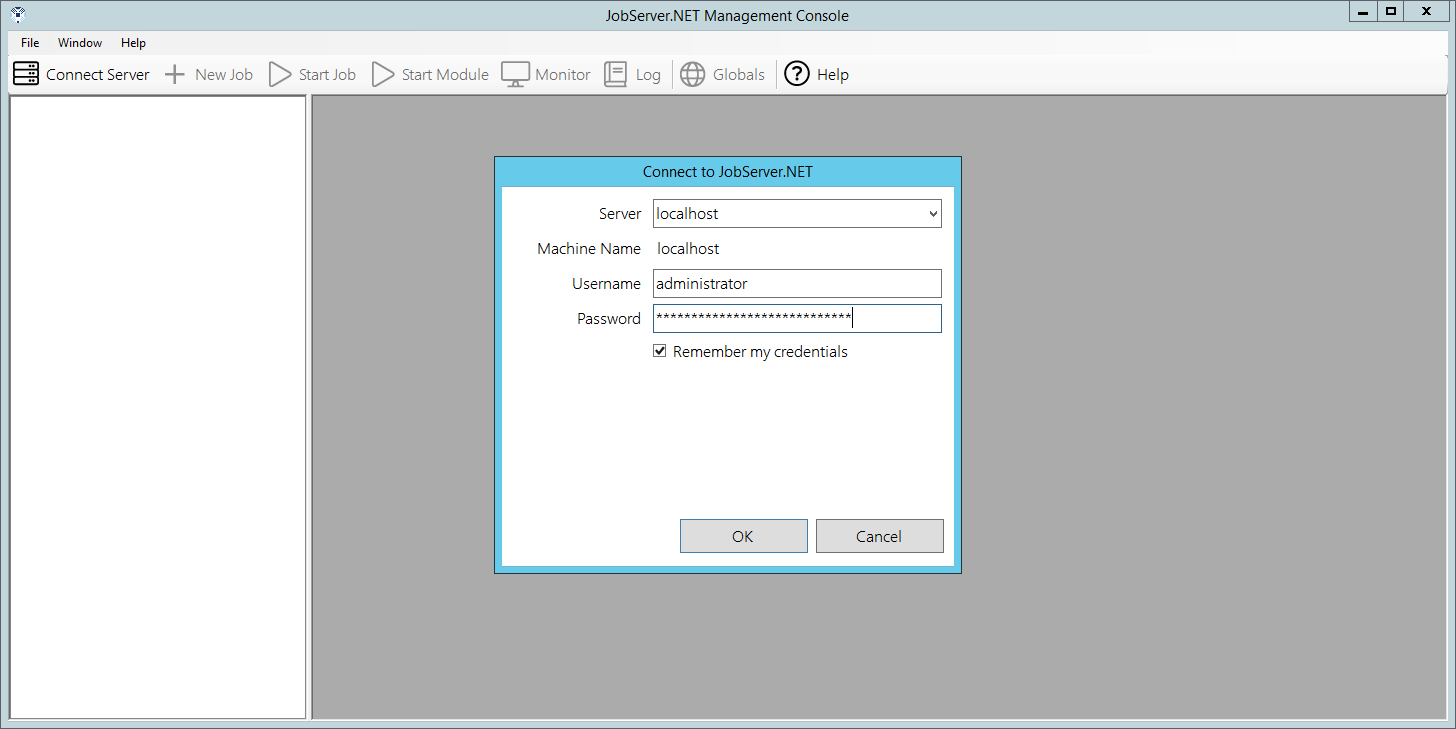

The JobServer.NET Manager is a Windows desktop application which can be installed and run on the same machine running the JobServer.NET Service, or any machine which can communicate with it. Typically, this means when JobServer.NET is installed on a machine on a private network, any machines you want to use the management application from should also be located on the same private network. When you start the management application, it will prompt for an authorized login. When the service is installed on a machine that is an Active Directory (AD) member, a valid login will be the same as your AD credentials for any administrator accounts on your network. When the service is installed on a machine that is not part of an Active Directory network, then any administrator account on that machine can be used as a valid login. Additional authorization options can be found under Authentication Options in the Advanced Configuration section.

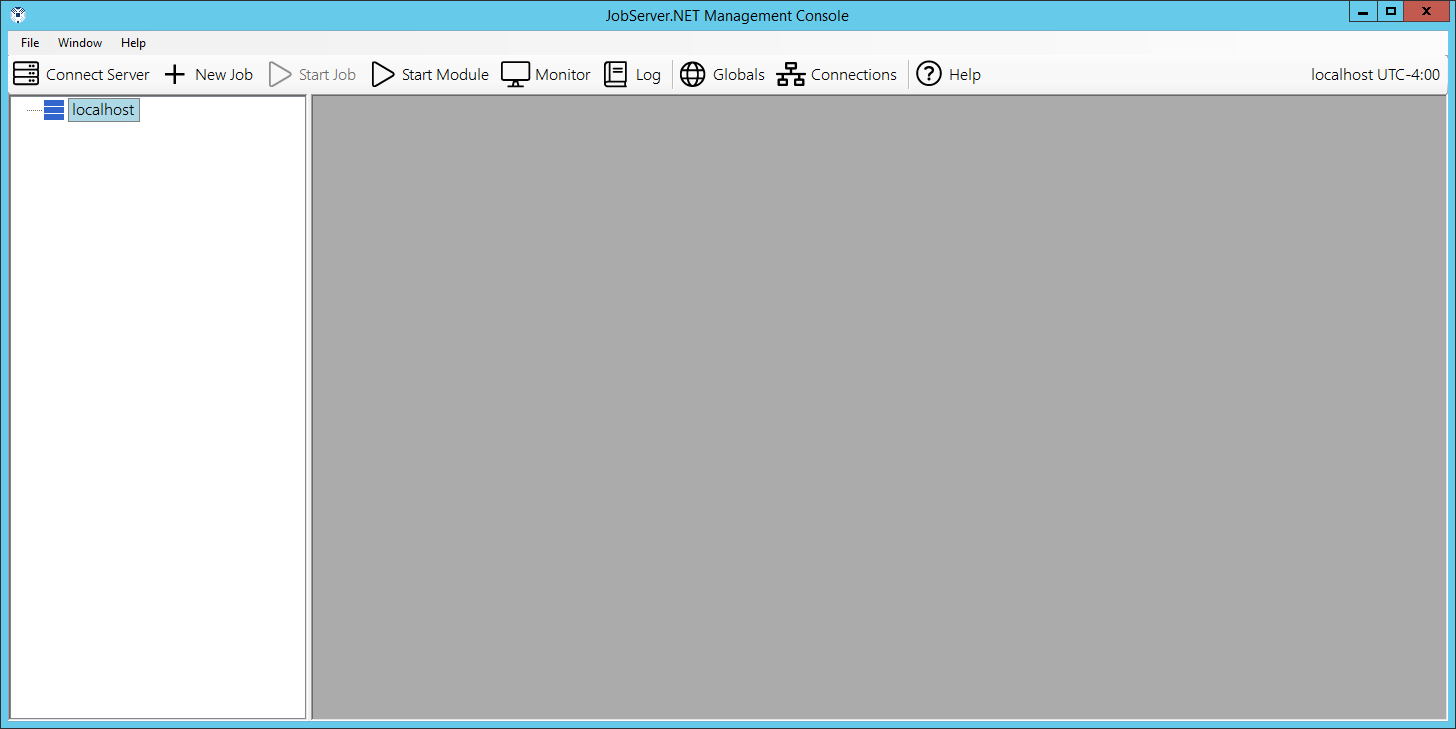

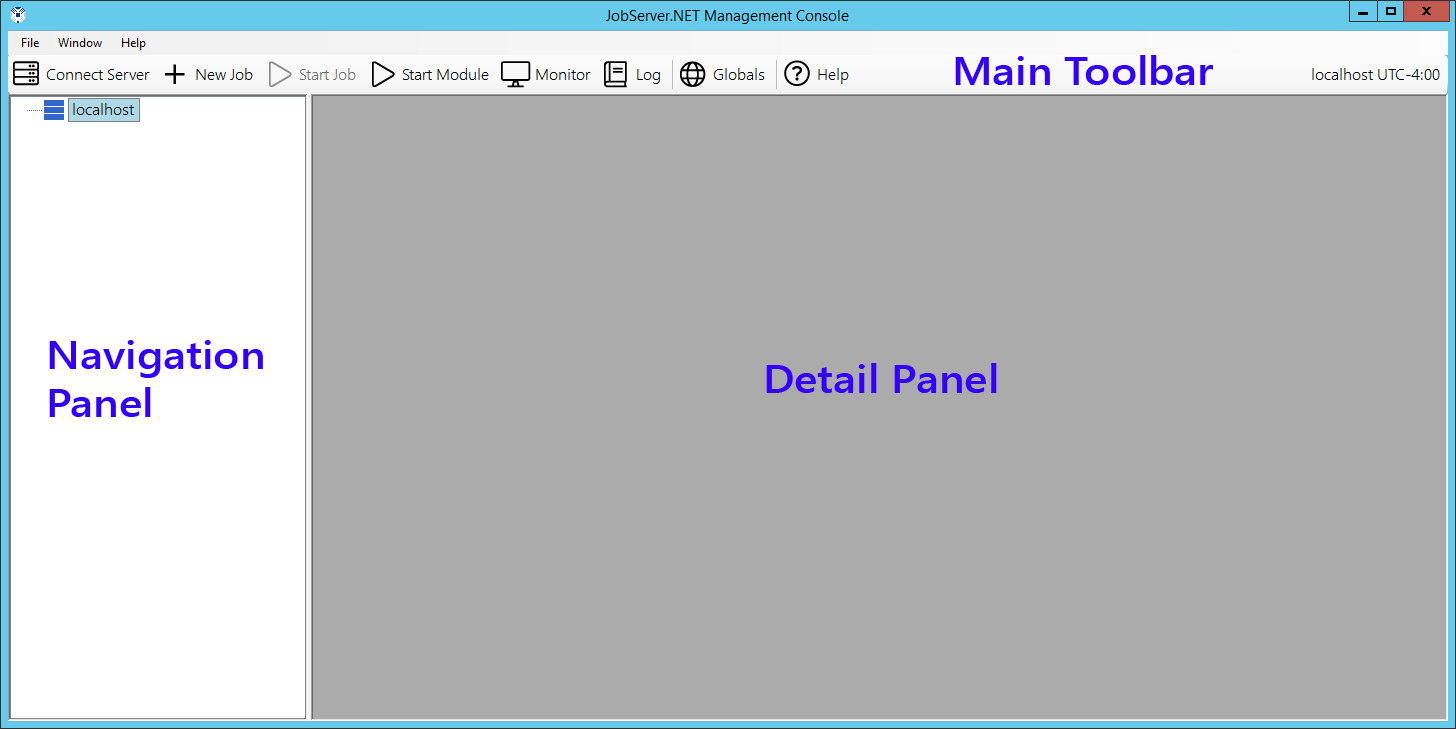

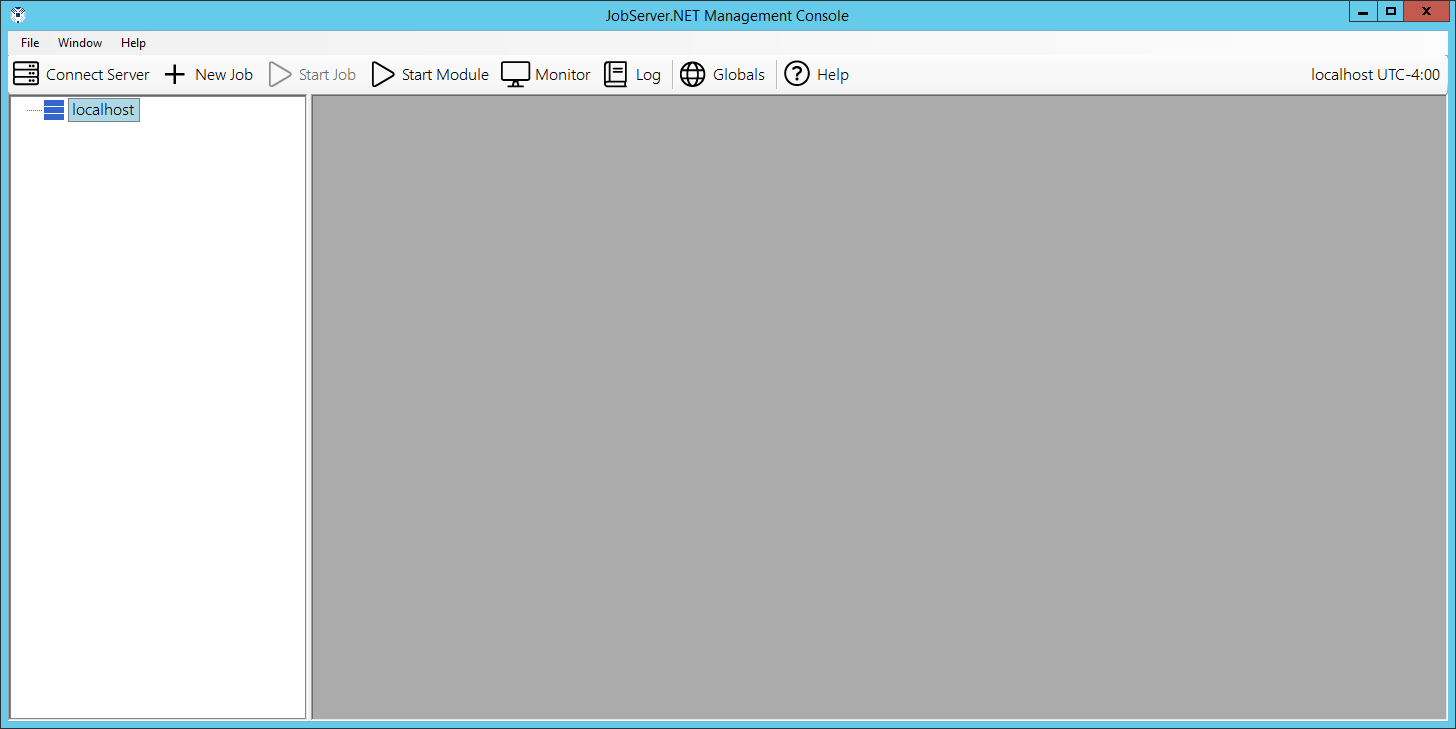

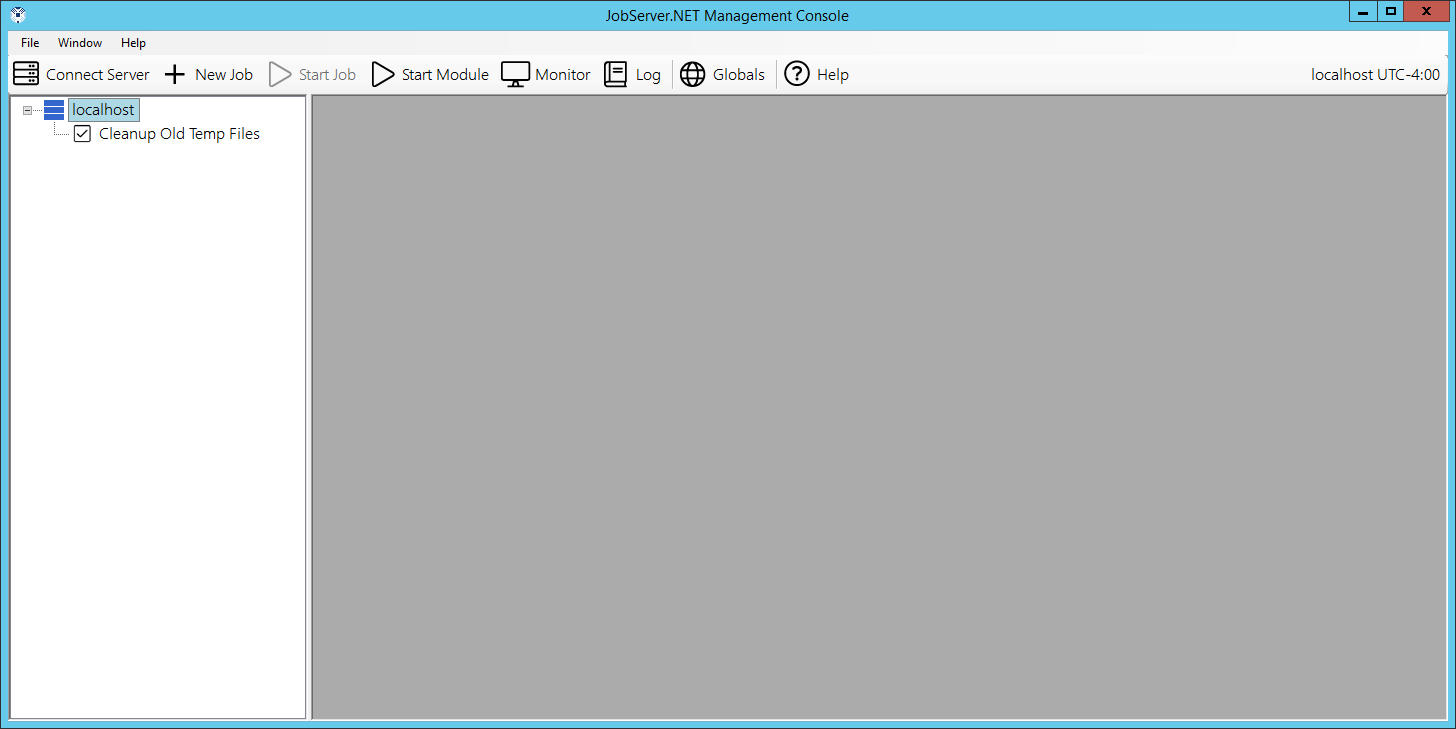

Once you are successfully logged in, the management application shows your connected server, with no jobs defined yet.

JobServer.NET Mobile Manager

Enterprise licenses of JobServer.NET will soon have the optional feature of a mobile management application for Android and iOS phones and mobile devices. Enterprise subscriptions and licensees with an active maintenance package will receive notification about the availability of the mobile management application as soon as it is released.

JobServer.NET Plugins

Plugins in JobServer.NET run directly within the JobServer.NET service. There are two distinct types of plugins, Triggers and Modules, and each type has its own role within the system.

Triggers

A trigger is simply a component that can start a job.

Each trigger has its own settings that configure the conditions needed for the JobServer service to load and run a given job definition.

Probably the most commonly used trigger is the Scheduler, which allows you to define a date and time to start running a job definition and offers a multitude of recurrence options.

The next most commonly used trigger is the File Watcher. This trigger allows JobServer to monitor a specific folder for any new or changed files in that folder.

The Pre-Installed Triggers section outlines in detail all the triggers pre-installed with JobServer.NET.

Modules

A module within JobServer is a component which can be used by a job to perform a specific task or action. A job consists of one or more steps that execute modules, optionally started by a trigger.

A frequently used combination is the Scheduler trigger with the [Files] Delete module, set to run late every Sunday night to clean up old log files that an application leaves behind.

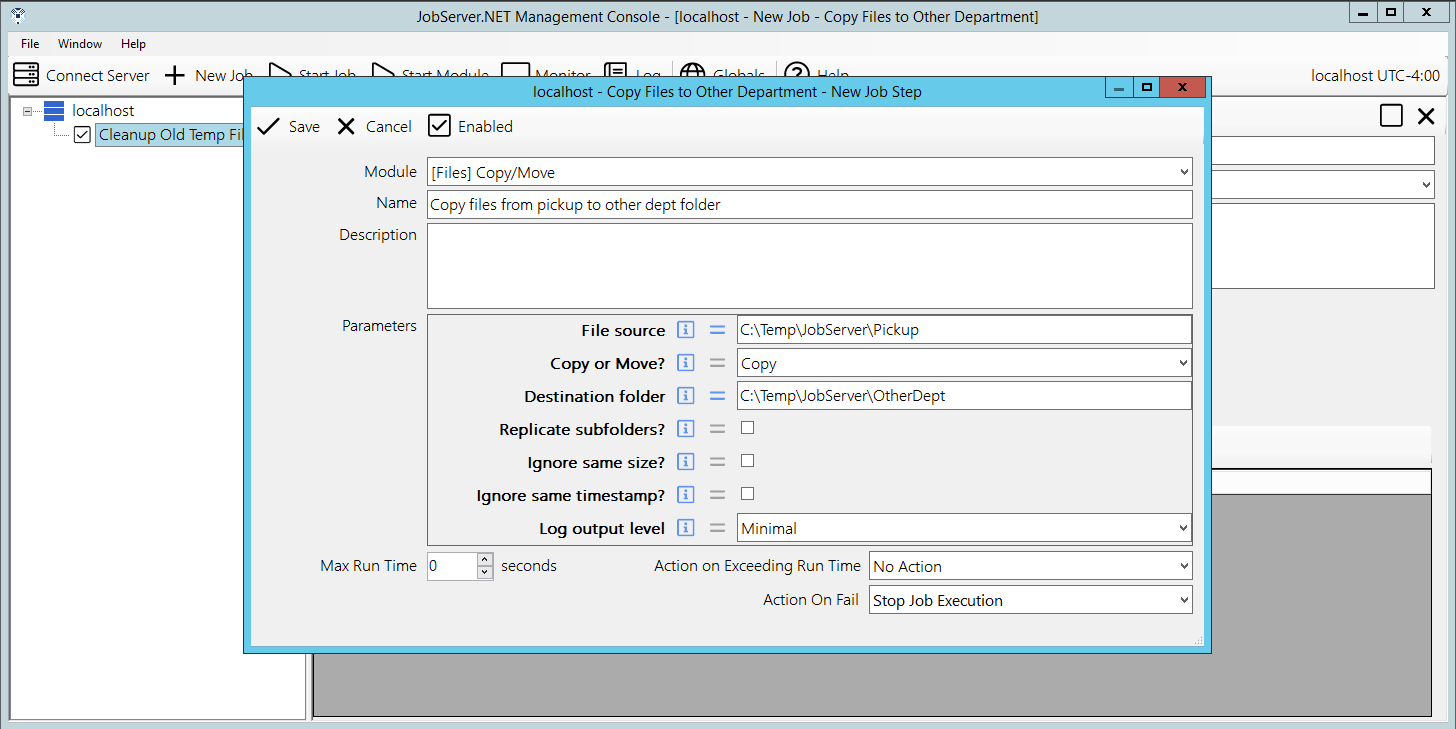

Another commonly used combination might take the File Watcher trigger and use it in combination with the [Files] Copy/Move module to copy the files to another location on the machine or on your network.

The Installed Modules section discusses in detail the many useful modules included with JobServer.

The Custom Modules section provides information on creating your own modules. One of the most useful features of the JobServer.NET platform is that C# developers can create their own modules and combine them with all the other pre-installed triggers and modules available.

Getting Started with JobServer.NET

Installation Requirements

Both the JobServer.NET Service and the JobServer.NET Manager are built using Microsoft .NET Framework 4.8 and inherit a portion of the minimum system requirements based on this. Either or both can be installed on any 64-bit version of Windows 7 or later, and Windows Server 2008 R2 SP1 or later. This includes:

| Windows Server Versions | Windows (Desktop) Versions |
|---|---|
| Windows Server 2025 | Windows 11 |
| Windows Server 2022 | Windows 10 |
| Windows Server 2019 | Windows 8.1 |
| Windows Server 2016 | Windows 8 |
| Windows Server 2012 R2 | Windows 7 |
| Windows Server 2012 | |
| Windows Server 2008 R2 SP1 | |

Note: Windows Server 2008 (non-R2) and earlier versions (Windows Server 2003, Windows 2000, etc.) are not supported, because Microsoft does not support installing versions of the .NET Framework greater than 4.5 on these older operating systems.

Minimum available disk space: 500 MB (Recommended: 1 GB)

The basic installation of the service and manager applications does not have a heavy storage requirement, but the disk space actually required once you start adding jobs can vary significantly based on your configuration settings. These figures also do not include any space needed to install or update the .NET Framework if your machine does not already have the minimum required version.

Minimum available memory: 256 MB (Recommended: 1 GB)

The basic installation of the service and manager applications does not have a heavy memory requirement, but depending on which plugins you use and how you configure them, more memory may be needed for them to function or to achieve the best system performance. Jobs require additional memory only while they are running and automatically free up resources when complete.

For machines where only the manager application will be installed, both the disk space and memory requirements are significantly reduced.

Prerequisites

Versions of Windows 10 (version 1703) and earlier, as well as Windows Server 2016 and earlier, may need to have Microsoft .NET Framework installed or updated before installation can complete. For these older versions, .NET Framework 4.8 or later must be installed. Newer versions of Windows and Windows Server already include a compatible version of the .NET Framework by default.
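The installer detects a missing .NET Framework for you. If you want to check a machine ahead of time, the sketch below reads the `Release` value that Microsoft documents under `HKLM\SOFTWARE\Microsoft\NET Framework Setup\NDP\v4\Full`. A value of 528040 or higher indicates .NET Framework 4.8 or later. This is a minimal Python illustration, not a tool shipped with JobServer.NET, and the function names are hypothetical.

```python
import sys
from typing import Optional

# Minimum "Release" DWORD that indicates .NET Framework 4.8 (per Microsoft's version-detection docs).
NET48_MIN_RELEASE = 528040
NDP_KEY = r"SOFTWARE\Microsoft\NET Framework Setup\NDP\v4\Full"


def is_net48_or_later(release: int) -> bool:
    """Compare a Release value against the .NET Framework 4.8 minimum."""
    return release >= NET48_MIN_RELEASE


def read_release_value() -> Optional[int]:
    """Read Release from the registry; None if the key is missing or not running on Windows."""
    if sys.platform != "win32":
        return None
    import winreg  # standard library, available only on Windows
    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, NDP_KEY) as key:
            value, _value_type = winreg.QueryValueEx(key, "Release")
            return int(value)
    except OSError:  # includes FileNotFoundError when the key or value does not exist
        return None
```

On the target machine, `is_net48_or_later(read_release_value() or 0)` returns True when no update is needed.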

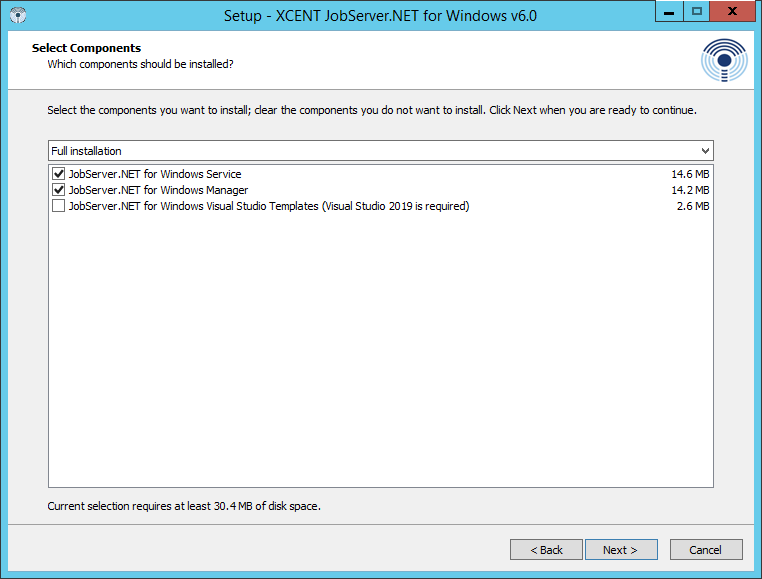

Download and Installation

Regardless of where you received or viewed this documentation, the genuine and most recent installation package for JobServer.NET is always available at https://jobserver.net/downloads. The installation package lets you choose whether to install just the Service, the Manager, or other related tools, so this one installer is all you need no matter which parts of JobServer.NET you are setting up. By default, the installer selects most of the options. If you only need the Manager, deselect all options except the Manager. For most other installations, the default options are all you need.

When the installer runs, it prompts you to install any prerequisites it does not detect, such as the minimum required version of Microsoft .NET Framework. Once the installer has completed, the Service runs in the background, which you can confirm in the Windows Services app (also found in Control Panel in older OS versions). The installer should then offer you the option to start the newly installed Manager application.

When you run the Manager application, you will see that JobServer.NET integrates directly with your existing network configuration. There is no default account or password for connecting the Manager application to the Service, because JobServer.NET relies on Windows security: your login is tied to your Windows network or machine credentials. The login methods available to you the first time therefore depend on whether your machine is a domain member on your network or a standalone or workgroup machine.

For machines that are domain members, your login credentials are your standard Active Directory network credentials for any account that is an administrator or has been assigned to the JobServer.NET-based groups. See the Authentication Options section if you prefer to use accounts that are not administrators.

For machines that are not domain members, your login credentials are your local machine credentials. This means you use the same login and password you would use to log in to an administrator account on the machine. The same JobServer.NET-based groups can be defined for a local machine’s accounts if you prefer not to use the administrator account(s).

Once you successfully log in for the first time on a machine with a new installation, you will be automatically prompted to create your first job. If you have any trouble logging into the Manager application, see the online guide at https://kb.jobserver.net/Q100001 for a guided tour on diagnosing issues with connections or authentication.

The rest of this section will walk you through the basics of setting up and creating some example jobs and getting you familiar with the overall features you need to get started with using JobServer.NET.

Settings

The JobServer.NET Service runs invisibly in the background unless you have the Manager application open and are actively watching it. Of course, this is exactly what it should be doing most of the time. But when something happens or a problem is detected, it can alert you or your team by sending a notification. Notifications can be emailed to one or more team members, and the Notification Settings option is where you configure this.

To configure email notifications, you will need the connection information for your network’s SMTP email server. Each connection setting is described in the table below. Once you have entered the correct details, you can use the Test button on the settings form to send a test notification from JobServer.NET. Keep in mind that the test feature sends the notification email immediately, but depending on your email configuration, it might take some time to arrive. It is not unusual for externally hosted email services to take a couple of minutes to show new messages in your inbox.

If you have any trouble with your notification settings, see the online guide at https://kb.jobserver.net/Q100002 for a guide on configuring SMTP connections.

| Setting | Description |
|---|---|
| SMTP Server | The fully qualified host name of your email server. To use a port other than the default, add a colon and the port number after the host name. Examples: smtp.example.com, smtp.example.com:2525 |
| Username | If the email server requires authentication, the username for the account. These account names often look like, or are the same as, the sending email address, but this is not always true. Example: user@example.com |
| Password | If the email server requires authentication, the password for the account specified in the Username setting. |
| Use Secure Transport | If the email server requires or allows encrypted communications, check this option to turn on encryption. Most modern email servers support encryption, and we highly recommend using it when available. Many mail servers now require all connections to be encrypted. |
| Send As | The email address that email sent by JobServer.NET appears to come from. Some email servers may require this to be a valid email address. Otherwise, a common choice is a non-existent address, so that anyone who accidentally replies receives a bounce message right away. Note: this should always be a single email address. Examples: noreply@example.com, support@example.com |
| Deliver To | The email addresses of the accounts that should receive notification messages. If your email server supports group addresses and you have one defined for this role, we highly recommend entering just that group address here. Otherwise, you can specify any number of recipients by separating the email addresses with commas. Examples: admin@example.com, jean@example.com, joe@example.com, itsupport@example.com |
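The following minimal Python sketch shows the format of these settings. It parses the SMTP Server and Deliver To values and sends a test message using the standard `smtplib` and `email` modules. It is an illustration under stated assumptions, not JobServer.NET's own code:

- The function names are hypothetical.
- Port 25 is assumed when no port is given.
- Use Secure Transport is modeled as STARTTLS. Some servers instead expect implicit TLS on port 465.

```python
import smtplib
from email.message import EmailMessage

DEFAULT_SMTP_PORT = 25  # assumption: standard SMTP port when the setting has no ":port"


def parse_smtp_server(value: str, default_port: int = DEFAULT_SMTP_PORT) -> tuple:
    """Split 'host' or 'host:port' into (host, port)."""
    host, sep, port = value.strip().partition(":")
    return host, (int(port) if sep else default_port)


def parse_recipients(value: str) -> list:
    """Split a comma-separated Deliver To value into individual addresses."""
    return [addr.strip() for addr in value.split(",") if addr.strip()]


def build_test_message(send_as: str, deliver_to: str) -> EmailMessage:
    msg = EmailMessage()
    msg["Subject"] = "Test notification"
    msg["From"] = send_as  # Send As: always a single address
    msg["To"] = ", ".join(parse_recipients(deliver_to))
    msg.set_content("This is a test notification.")
    return msg


def send_test(server: str, username: str, password: str, use_secure: bool,
              send_as: str, deliver_to: str) -> None:
    host, port = parse_smtp_server(server)
    msg = build_test_message(send_as, deliver_to)
    with smtplib.SMTP(host, port, timeout=30) as smtp:
        if use_secure:
            smtp.starttls()  # upgrade the plain connection to TLS
        if username:
            smtp.login(username, password)
        smtp.send_message(msg)
```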

Layout of the Manager Application

Once you are logged into the Manager application, you can see that its layout has three main elements. Below the menu bar is the main toolbar. Most of the buttons on the main toolbar are disabled until you have taken some other steps, which we will get to shortly. Two buttons are always enabled. The first is the Connect Server button, which is covered in one of the next sections. The second is the Help button, which opens the main JobServer.NET help page in your default browser.

Below the main toolbar, the rest of the window is split into two panels. The left-side panel is the navigation panel, which displays your server connection(s) and a hierarchical list of jobs for each server. The right-side panel is the control area. As you perform various actions, they open different windows in the control area. You can have multiple windows open in the control area and switch between them as needed. You can drag the divider bar between the navigation panel and the control area to resize the panels to best suit your display.

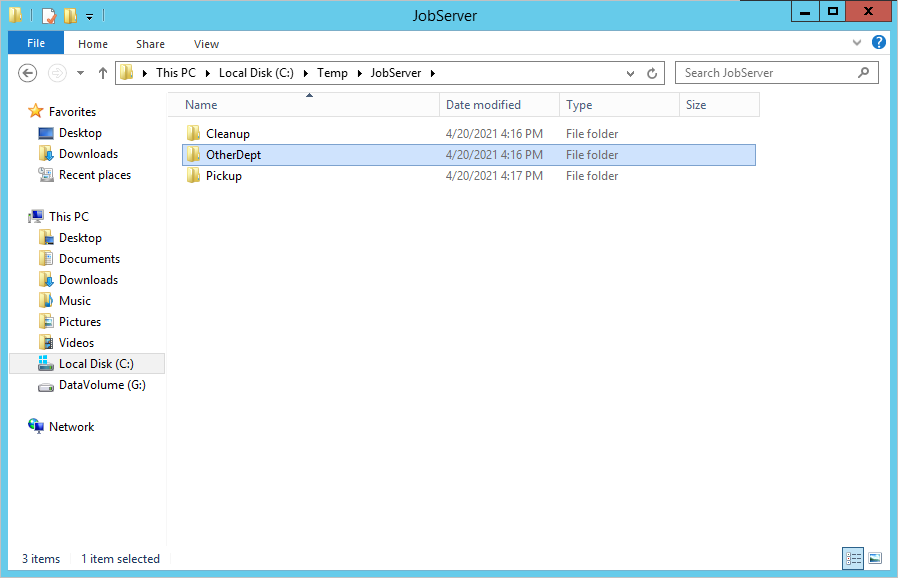

Prepare Test Folder Location

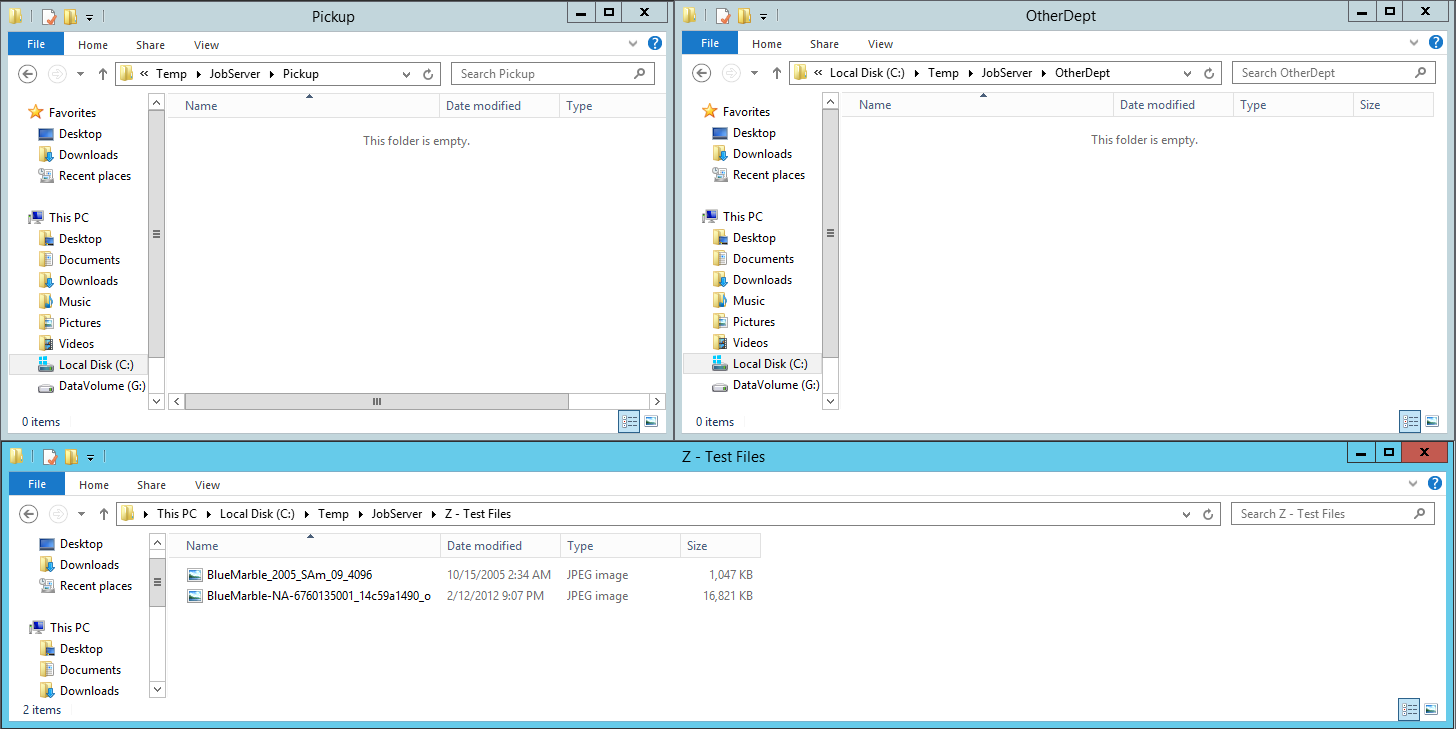

Since the following topics in this section walk you through creating some example jobs, you should first create or pick an appropriate test folder location. Many Windows machines already have a temporary folder at C:\Temp. This should be fine for most people, but you can pick any location that is more suitable; just substitute your desired path for C:\Temp in the examples below. To start, create a JobServer folder in C:\Temp, and then, under the JobServer folder, create the following subfolders: Cleanup, OtherDept, and Pickup. You should end up with the following folders:

- C:\Temp\JobServer\Cleanup

- C:\Temp\JobServer\OtherDept

- C:\Temp\JobServer\Pickup
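If you prefer to script the folder setup, this minimal Python sketch creates the same three folders. The function name `prepare_test_folders` is hypothetical. It uses the base path C:\Temp unless you pass a different one.

```python
from pathlib import Path

SUBFOLDERS = ("Cleanup", "OtherDept", "Pickup")


def prepare_test_folders(base: str = r"C:\Temp") -> list:
    """Create <base>/JobServer/{Cleanup,OtherDept,Pickup}; existing folders are left alone."""
    root = Path(base) / "JobServer"
    folders = []
    for name in SUBFOLDERS:
        folder = root / name
        folder.mkdir(parents=True, exist_ok=True)  # no error if the folder already exists
        folders.append(folder)
    return folders
```

Run `prepare_test_folders()` on the JobServer.NET machine. To use another location, pass it as the argument instead.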

If you need more detail than is presented here, see the guide at https://kb.jobserver.net/Q100003 for a more visual tutorial of the steps outlined in this section.

Creating a Schedule Based Job Definition

Once you have completed your notification settings, you can try setting up a sample schedule-based job definition. A schedule-based job runs a job definition one or more times according to a defined date-and-time pattern. This is done with the Scheduler trigger, which is one of the most commonly used methods for starting jobs. When you need to pick up files from some location on your network, run and deliver a report, or update data in a database, the Scheduler is a reliable building block for making these processes happen.

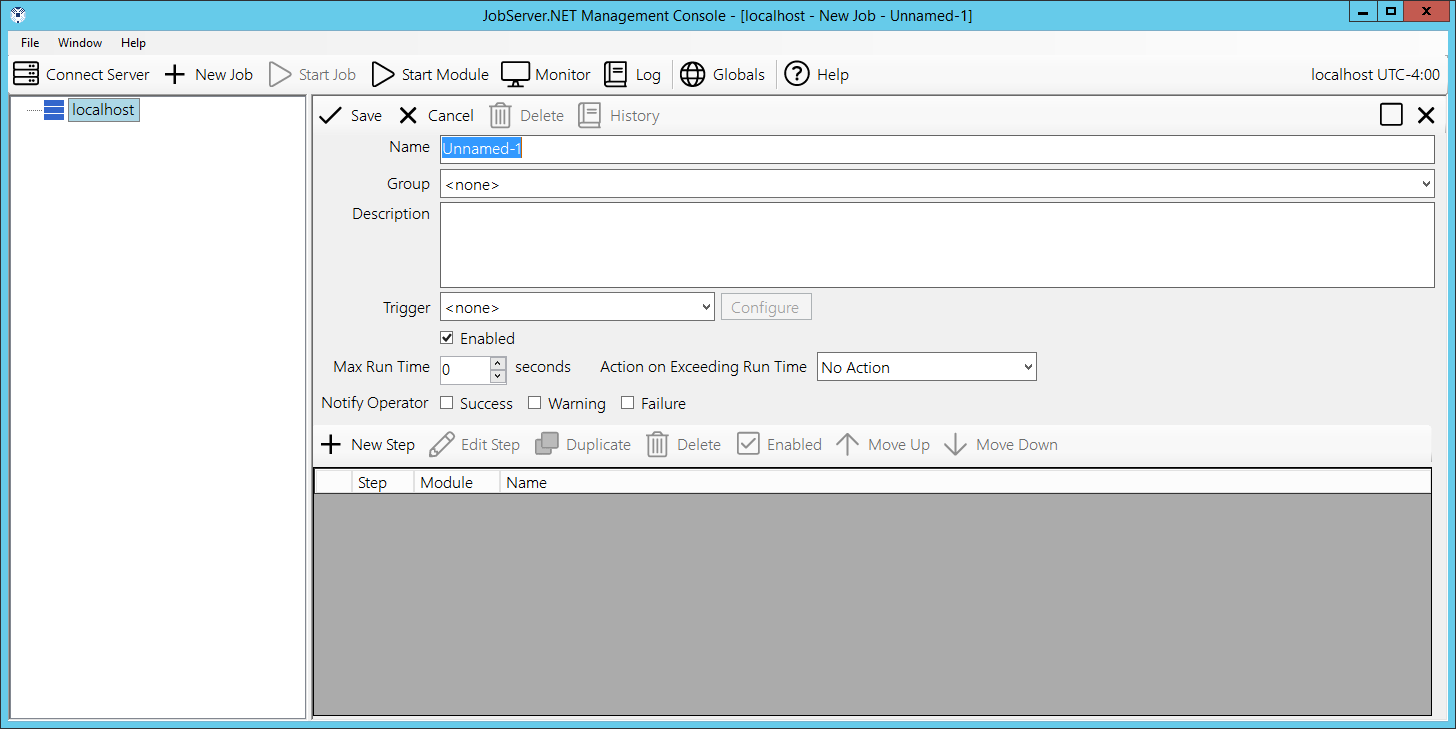

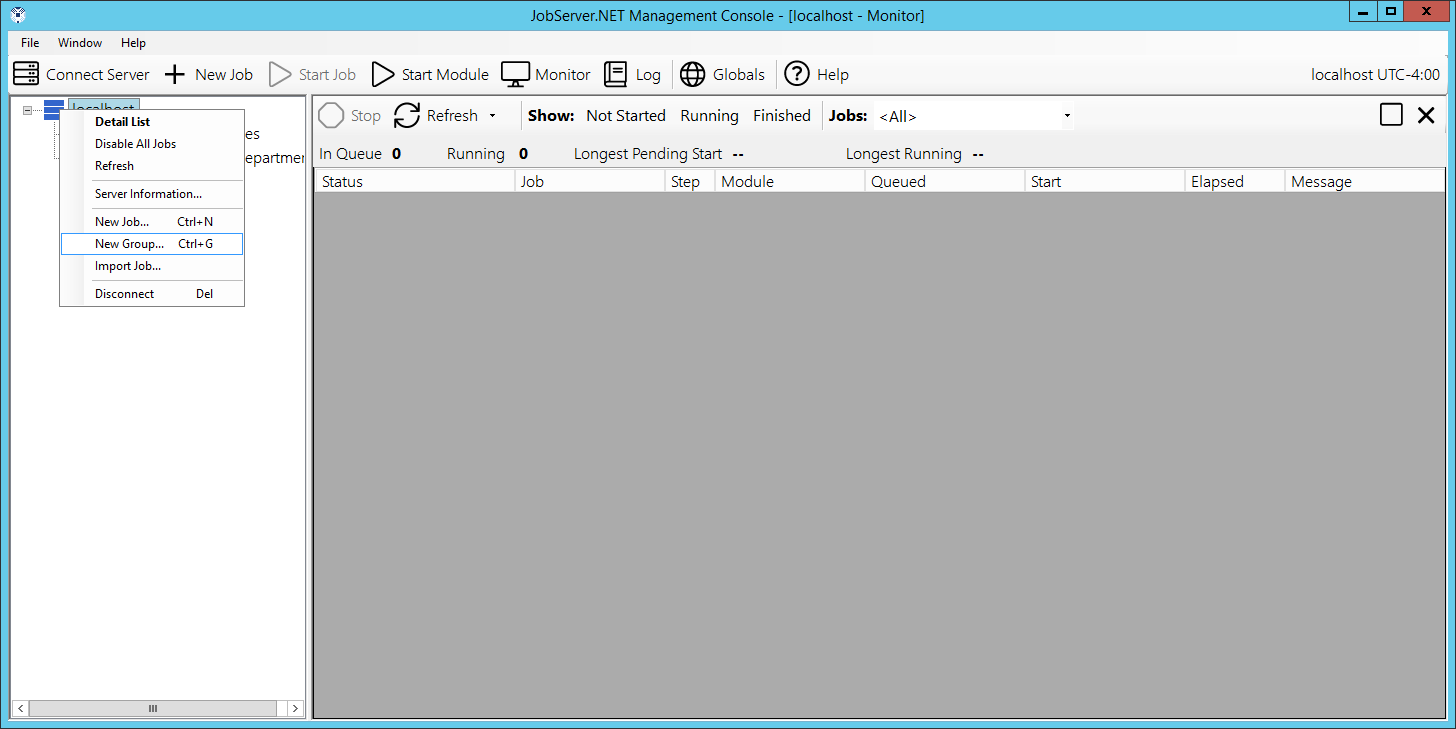

To start creating an example scheduled job, look at the Manager application. The navigation panel shows the JobServer.NET machine(s) you are connected to. Under each connected server, you can see the job definitions defined on that server. For a new installation, you will see just your connected JobServer.NET machine with no job definitions listed below it.

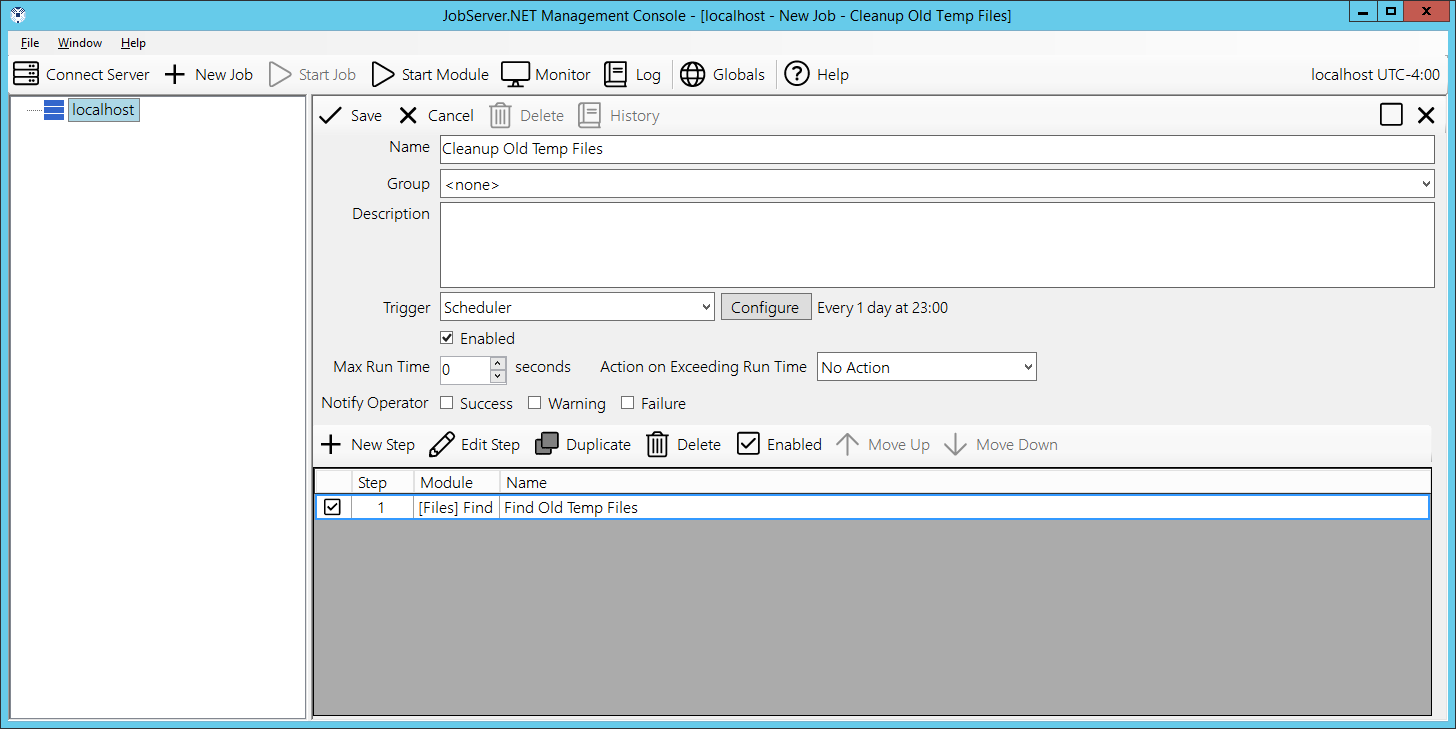

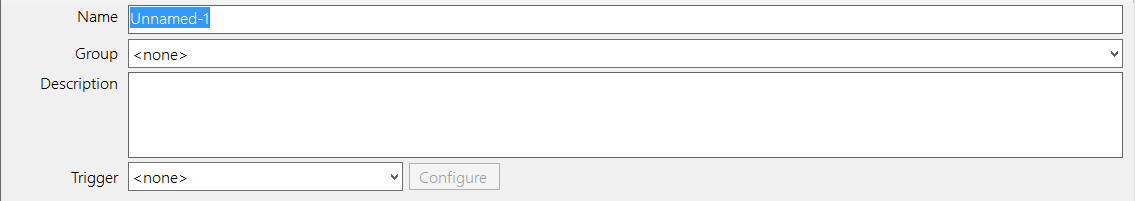

When you right-click your connected server, a pop-up menu lists the options for your server. Select the New Job option, and a new, empty job definition form appears in the control area. In the Name field, enter “Cleanup Old Temp Files”. Then, in the Trigger field a few lines down, select the Scheduler option from the drop-down list.

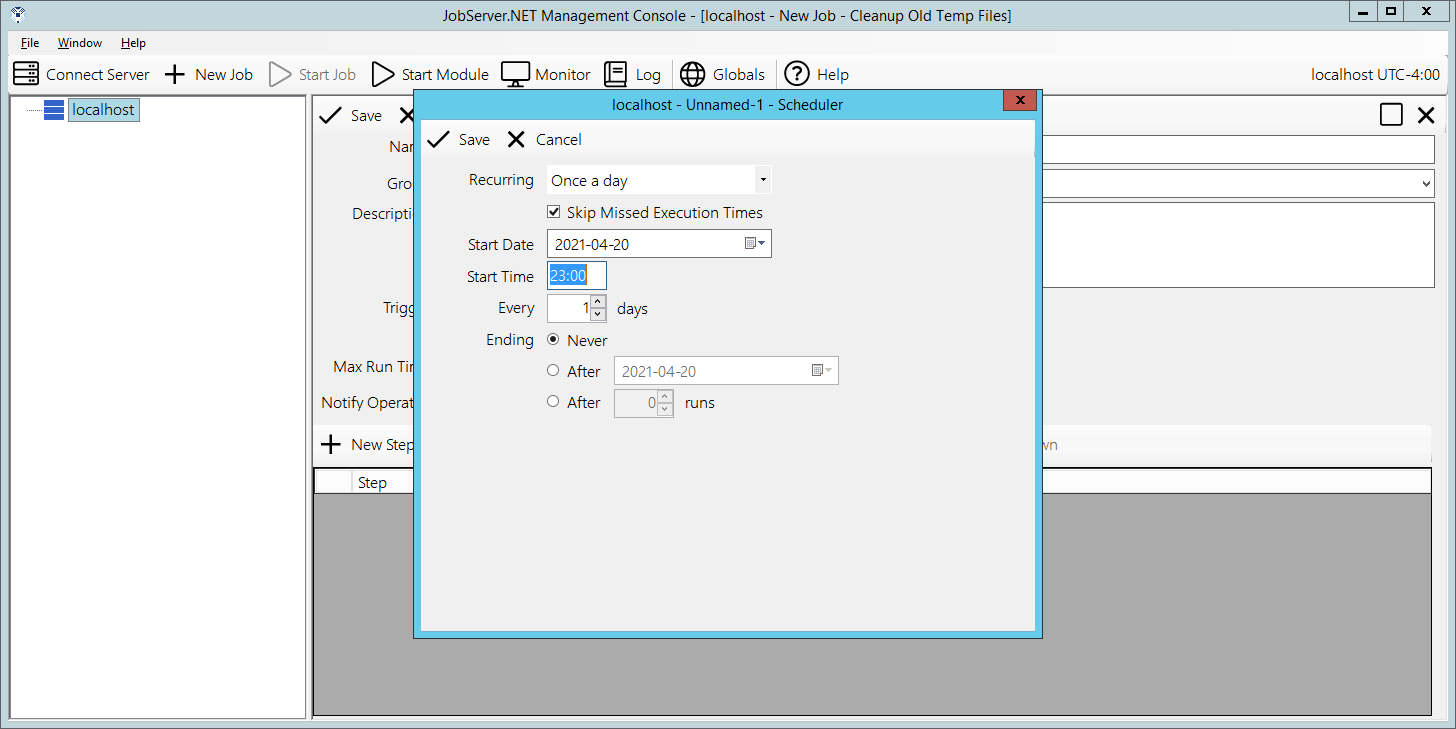

Once you do, a new dialog opens with the options for the Scheduler trigger. At first, the only visible option is Recurring. Start by picking the Once a day option; the other options are covered in more detail later. The Start Date defaults to the current date, so you only need to pick the time of day when you want to clean up old files. JobServer.NET displays times in 24-hour format, so pick a time suitable for you. We recommend entering 23:00 so this job runs an hour before midnight each day. Click the Save button when you are ready.
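A reasonable reading of the Once a day option is to run at the chosen time on the start date, then every day after that. The Python sketch below computes the next run time under that interpretation. It is a hypothetical illustration, not the Scheduler trigger's actual algorithm, and it ignores daylight-saving transitions.

```python
from datetime import date, datetime, time, timedelta
from typing import Optional


def next_daily_run(now: datetime, at: time, start_date: Optional[date] = None) -> datetime:
    """Return the first datetime at or after `now` (and on or after `start_date`) at time `at`."""
    first_day = now.date()
    if start_date is not None and start_date > first_day:
        first_day = start_date
    candidate = datetime.combine(first_day, at)
    if candidate < now:
        # Today's run time has already passed, so the next run is tomorrow.
        candidate += timedelta(days=1)
    return candidate
```

For example, with a run time of 23:00, a check made at 22:00 yields 23:00 the same day, while a check made at 23:30 yields 23:00 the following day.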

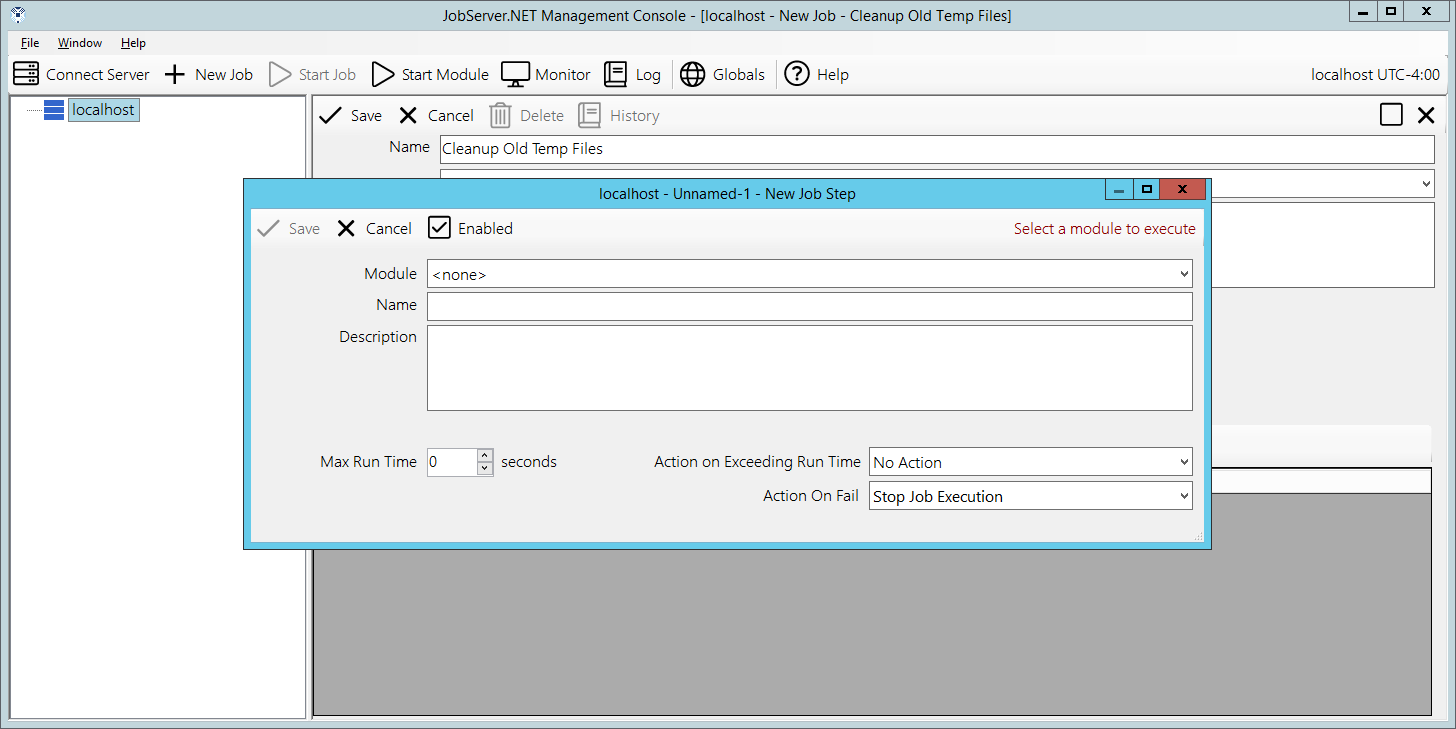

At this point, we have started defining this job and when we want it to run. Now we need to tell it what to do. In the bottom section of the job definition, locate and click the New Step button. This opens the new job step dialog. The first field is the Module field. Click it to see the list of installed modules, then locate and click the one called [Files] Find. This module searches for any number of files that meet all the search criteria you specify.

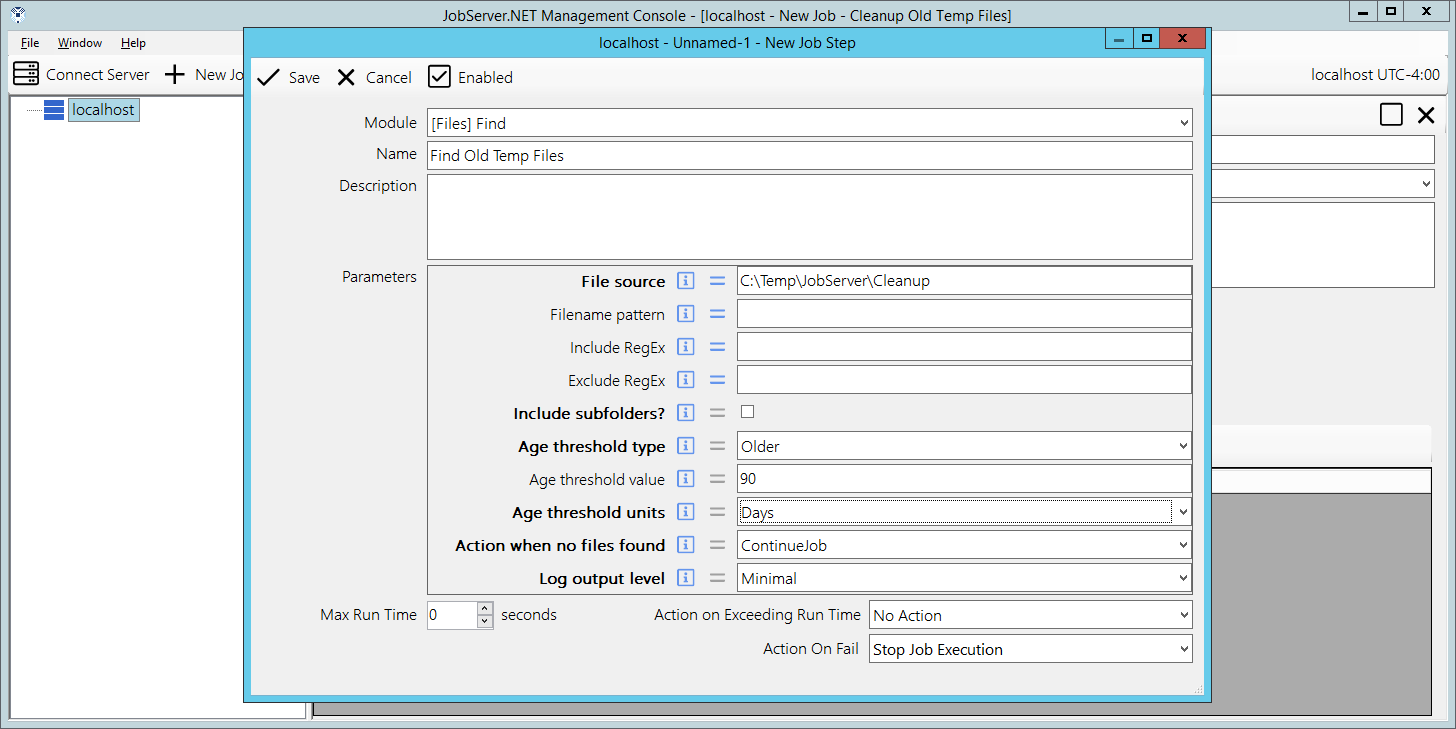

Once it is selected, the [Files] Find module loads and shows all its available configuration parameters. Before configuring them, update the Name field by entering “Find Old Temp Files”. Then locate the first parameter, File source, and add the path to a folder on your JobServer.NET machine that contains old files you no longer want to keep. For testing, we will use one of the test folders created above, so for this example, enter the folder path “C:\Temp\JobServer\Cleanup” into the File source parameter. Next, change the Age threshold type parameter to Older. Then set Age threshold value to 90. Finally, set Age threshold units to Days.

This combination of options tells the [Files] Find module to find all files in this folder that are more than 90 days old. In other words, this step selects the files that the next step will act on. Finish this step by clicking the Save button to return to the main job definition.

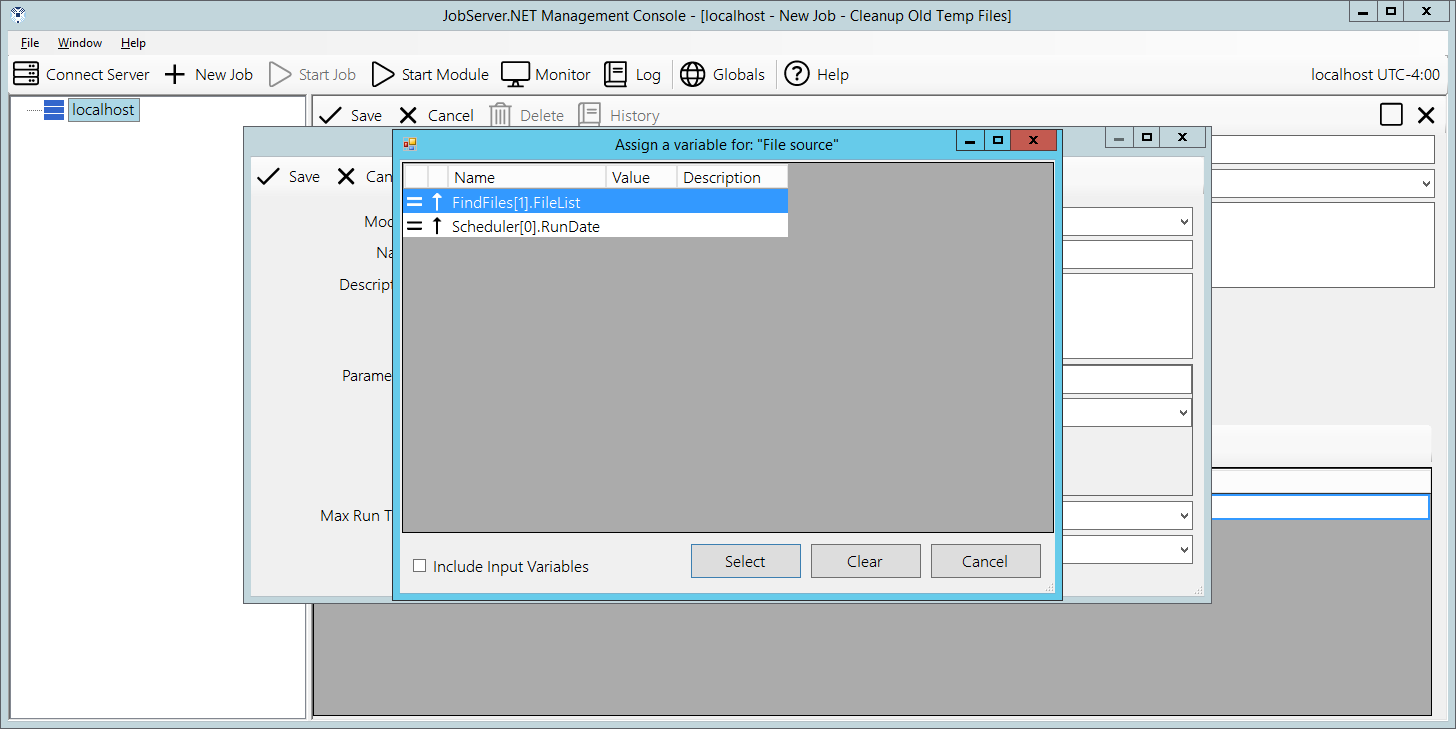

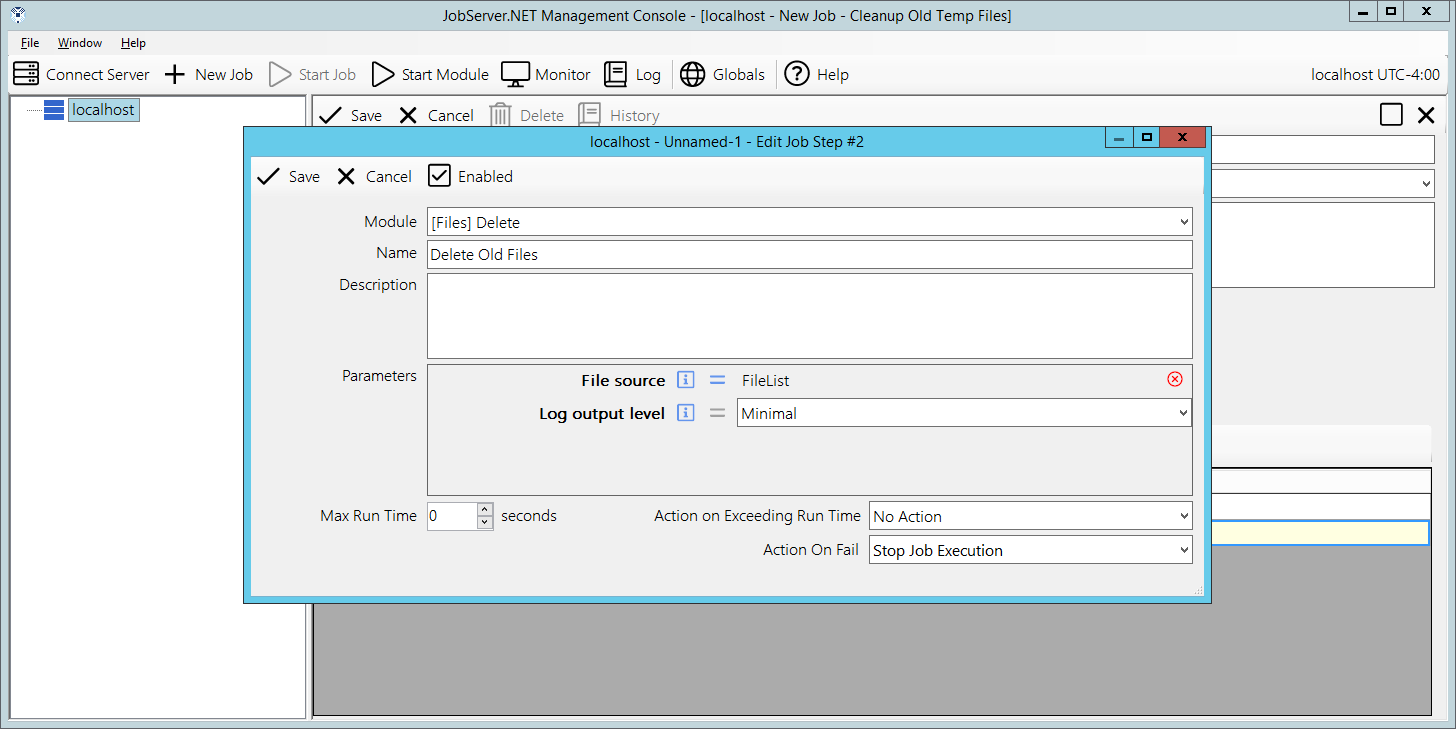

We now have a job definition with a single step that retrieves a list of files. Next, we want to do something with that list. Add another step after the first one by clicking the New Step button again. This time, in the new job step dialog, select the [Files] Delete module. Update the Name field by entering “Delete Old Files”. The File source parameter here just needs to be connected to the list of files that the previous step found. To do that, click the Assign button on the line for the File source parameter; this is the button that looks like an equal sign. The assignment dialog opens with a list of all the available variable and parameter options. Locate the entry from the FindFiles module in Step 1 with the output parameter FileList. [Files] Find and several other modules use the FileList output parameter to pass along the list of files they have found or worked on.

You can select the FileList output from the [Files] Find module and click the Select button. This now connects the output of [Files] Find to the FileSource input for this module in this step. When a parameter’s value is assigned, you should notice that the value part of the control now changes from an input box to a display showing the linked parameter. When you are ready, you can click the Save button to complete the [Files] Delete step.

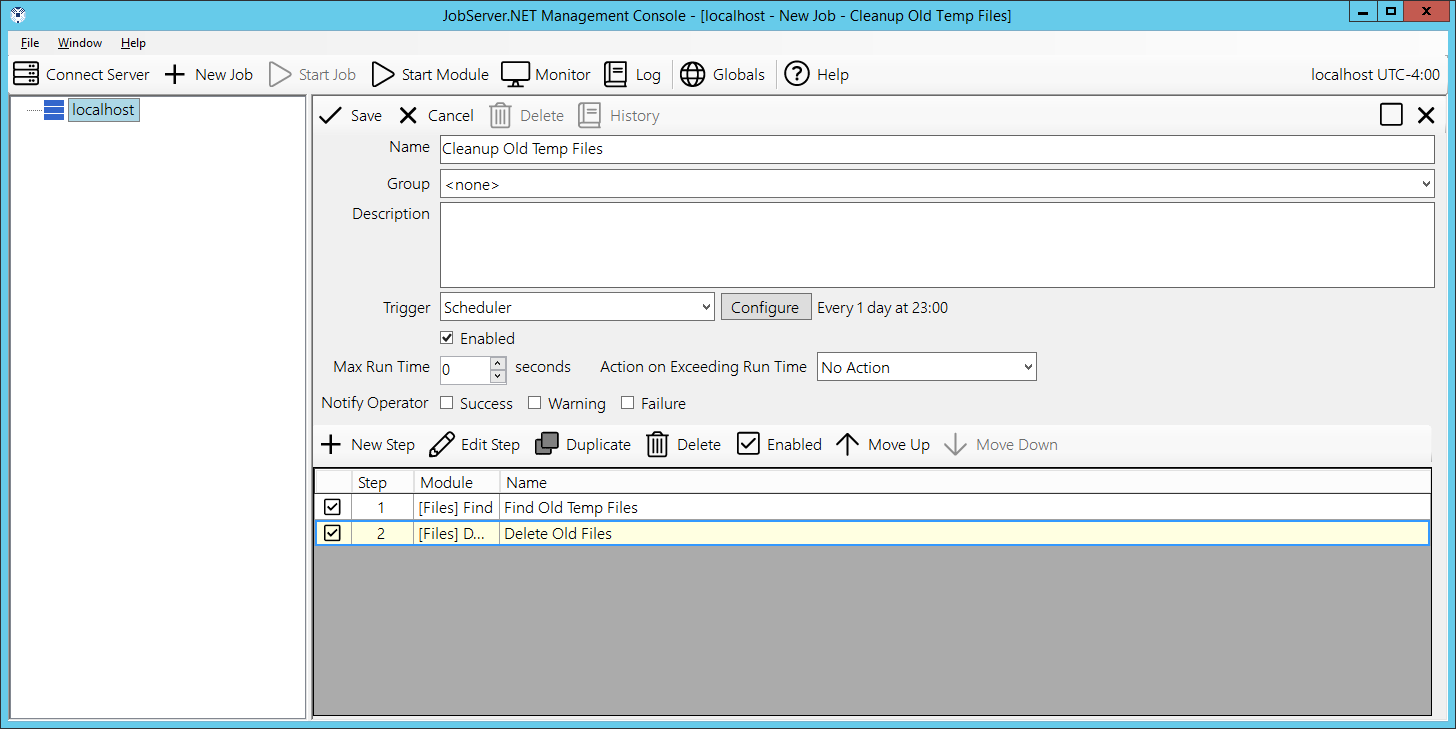

This second step takes all the files found by the first step and deletes them. This is a good example of how you can clean up folders of old log files and other outdated files you no longer want to keep.
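Conceptually, the two steps form a small pipeline in which Step 1's FileList output becomes Step 2's File source input. The following Python sketch models that wiring under two assumptions: age is measured from each file's last-modified time, and only the top level of the folder is searched. The names `find_step`, `delete_step`, and `run_job` are hypothetical and are not part of JobServer.NET.

```python
import time
from pathlib import Path

SECONDS_PER_DAY = 86400


def find_step(file_source: str, age_days: float) -> dict:
    """Like Step 1: return files older than `age_days` under the output name 'FileList'."""
    cutoff = time.time() - age_days * SECONDS_PER_DAY
    files = [p for p in Path(file_source).iterdir()
             if p.is_file() and p.stat().st_mtime < cutoff]
    return {"FileList": sorted(files)}


def delete_step(file_source: list) -> dict:
    """Like Step 2: delete each file it receives and report what was removed."""
    deleted = []
    for path in file_source:
        path.unlink(missing_ok=True)  # tolerate files already removed in the meantime
        deleted.append(path)
    return {"FileList": deleted}


def run_job(folder: str, age_days: float = 90) -> list:
    """Run both steps, passing Step 1's FileList into Step 2 (analogous to the Assign button)."""
    outputs = {"Step 1": find_step(folder, age_days)}
    outputs["Step 2"] = delete_step(outputs["Step 1"]["FileList"])
    return outputs["Step 2"]["FileList"]
```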

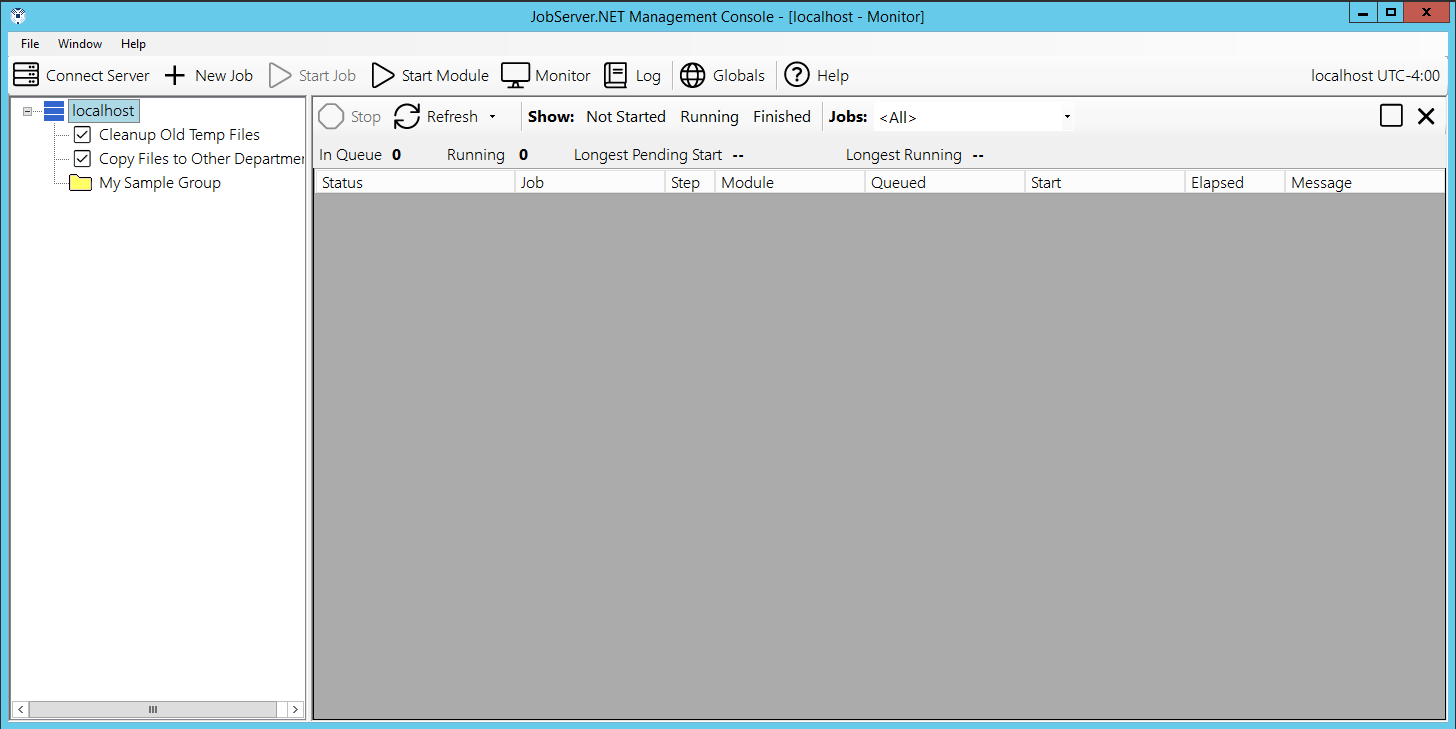

Once you click the Save button at the top of the job definition, the job is added to the navigation panel under the server and is now active. Every day at its scheduled time, it will clean up any older files it finds in the folder you specified.

Running Ad-Hoc Jobs and Monitoring

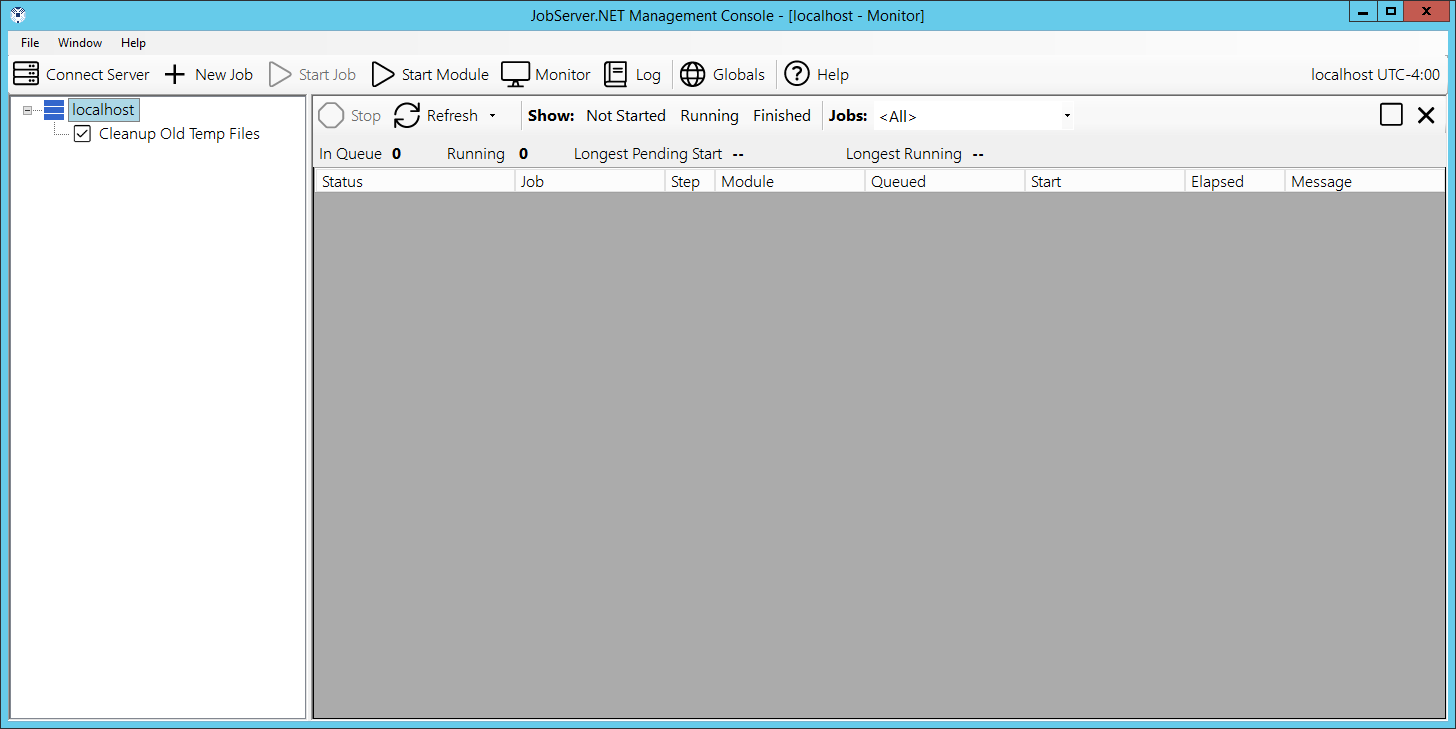

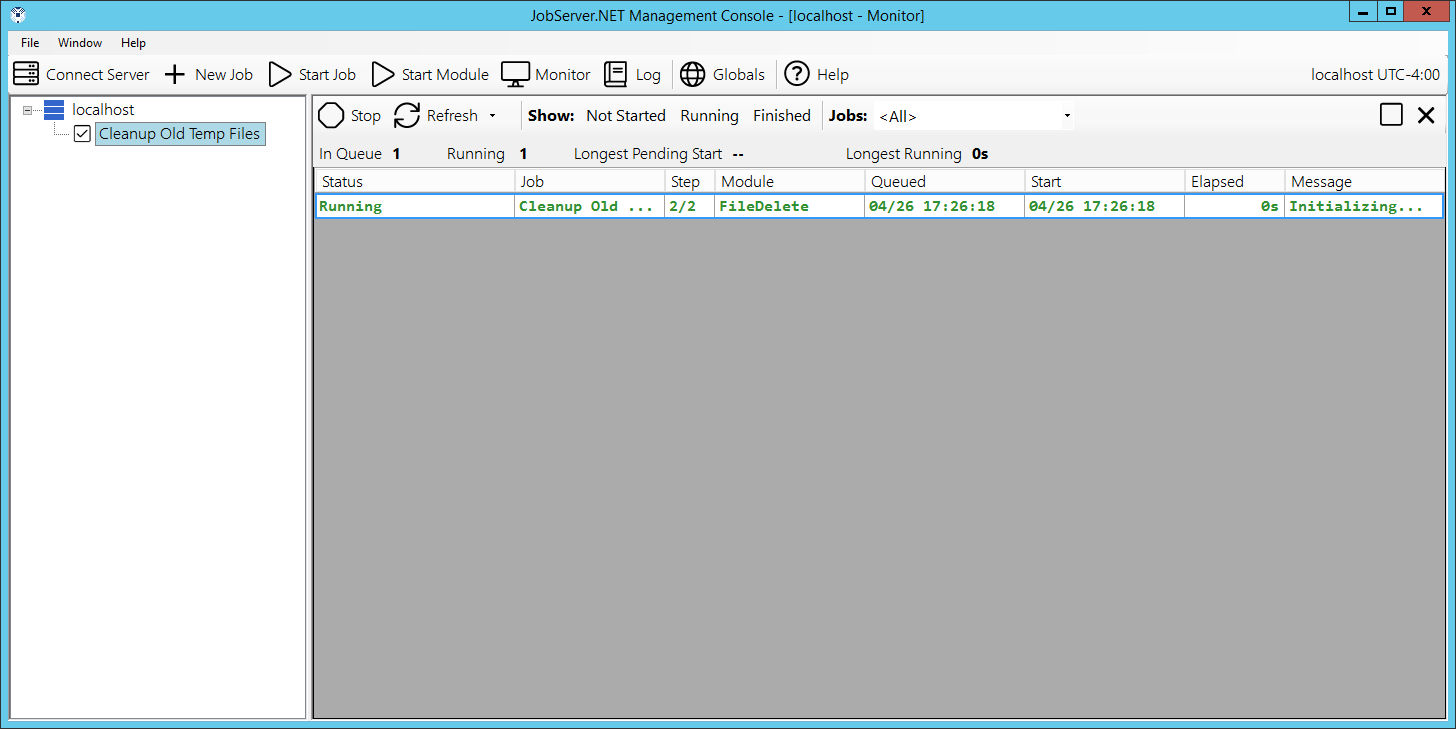

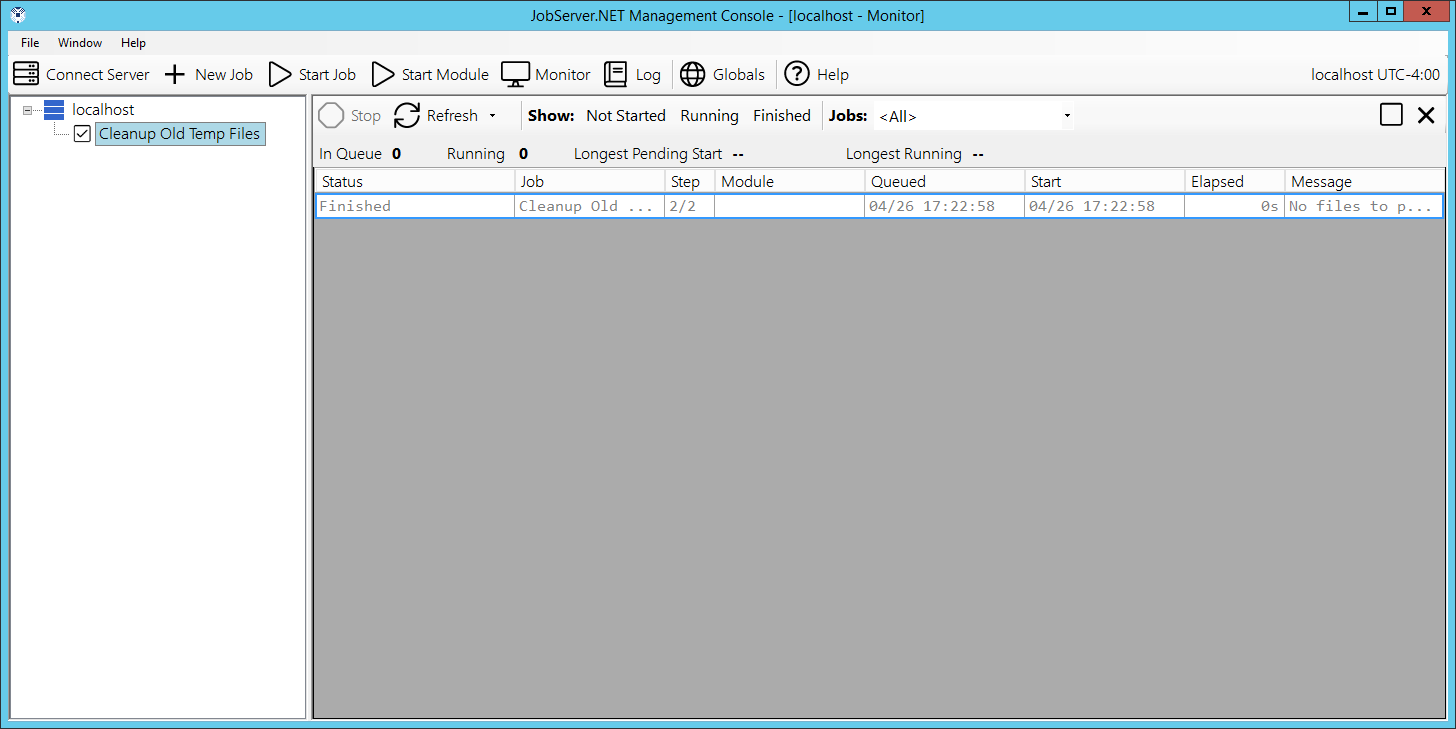

You now have your first job ready to run. To try it out, add some files to the folder you specified for job step 1 in the exercise above. Make sure some of the files are older than your age setting (older than 90 days if you followed the example exactly), and be sure to include some newer files as well. You will not want to wait until late at night to see what happens when the job runs, and we will see how to start it right away in just a moment. First, locate the Monitor button on the toolbar of the Manager application and click it. This opens the monitor, which shows you what jobs are currently queued and executing on JobServer.NET.
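Rather than hunting for genuinely old files, you can create backdated test files. This minimal Python sketch writes a few files whose modification time is set back with `os.utime`, plus a few current files. It assumes the age threshold in [Files] Find is evaluated against last-modified time. The Windows creation time is not changed. The function name `create_test_files` is hypothetical.

```python
import os
import time
from pathlib import Path

SECONDS_PER_DAY = 86400


def create_test_files(folder: str, old_days: int = 120, count: int = 3) -> list:
    """Write `count` backdated files and `count` current files; return all created paths."""
    target = Path(folder)
    target.mkdir(parents=True, exist_ok=True)
    backdated = time.time() - old_days * SECONDS_PER_DAY
    created = []
    for i in range(count):
        old_file = target / f"old_{i}.txt"
        old_file.write_text("old test file\n")
        os.utime(old_file, (backdated, backdated))  # (access time, modification time)
        new_file = target / f"new_{i}.txt"
        new_file.write_text("new test file\n")
        created += [old_file, new_file]
    return created
```

For the example job, call `create_test_files(r"C:\Temp\JobServer\Cleanup")`.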

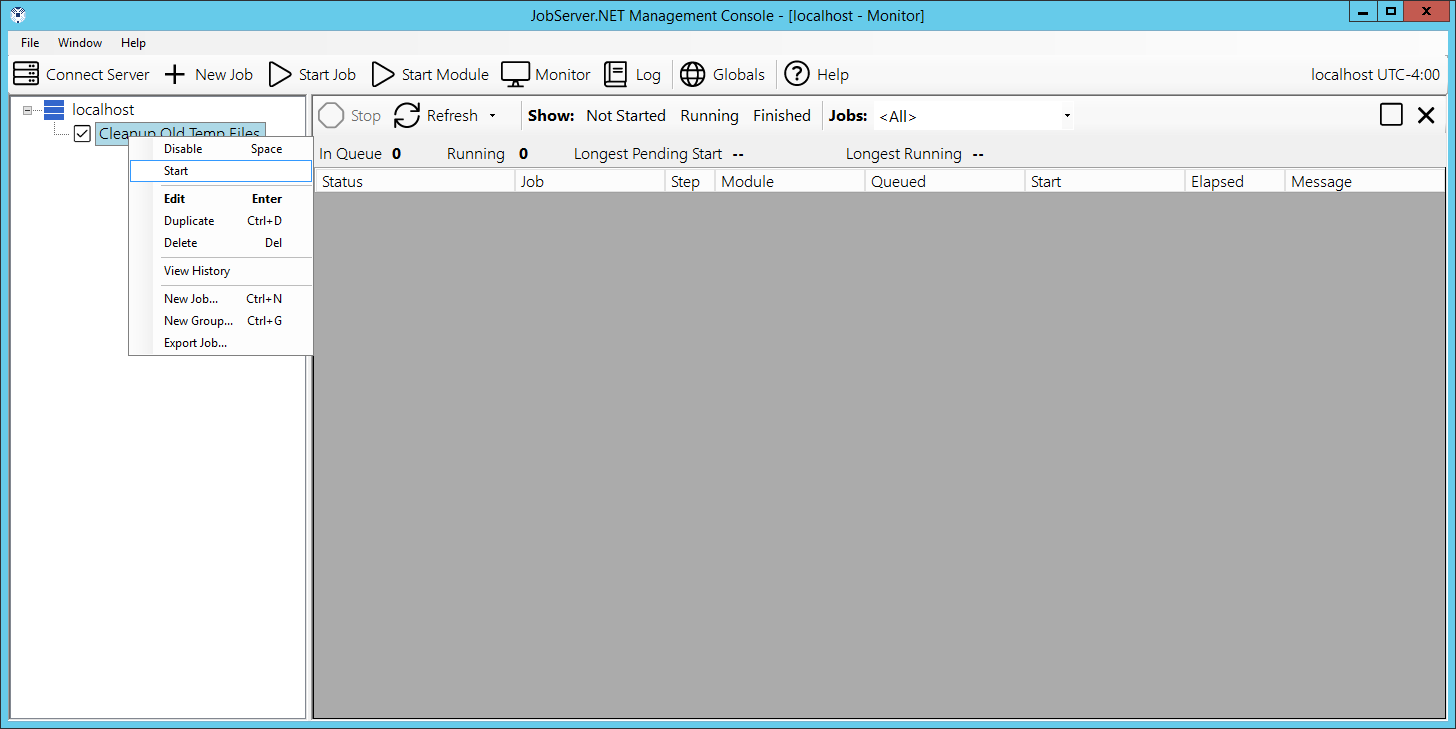

Now this next part will happen quickly, so you want to pay close attention to the monitor after this next step. You can start your job at any time (ad-hoc) and as soon as it starts, it will show up in the monitor and you will be able to see it run and finish. When you are ready to start your job, you want to right-click on your job name in the navigation panel and from the pop-up menu, pick the Start option. Your job will run right away when you click the start option.

Some job definitions, such as the one in these example screenshots, execute their job steps very quickly, and you will have to watch carefully to see them display a running status. Most job definitions perform quite a bit more work, which makes it easier to catch them in the running status.

After the job has finished running, it continues to be displayed in the monitor for a few minutes, giving you a chance to view very recently completed jobs. Now review the files in your folder to confirm that any files older than the specified age have been deleted.

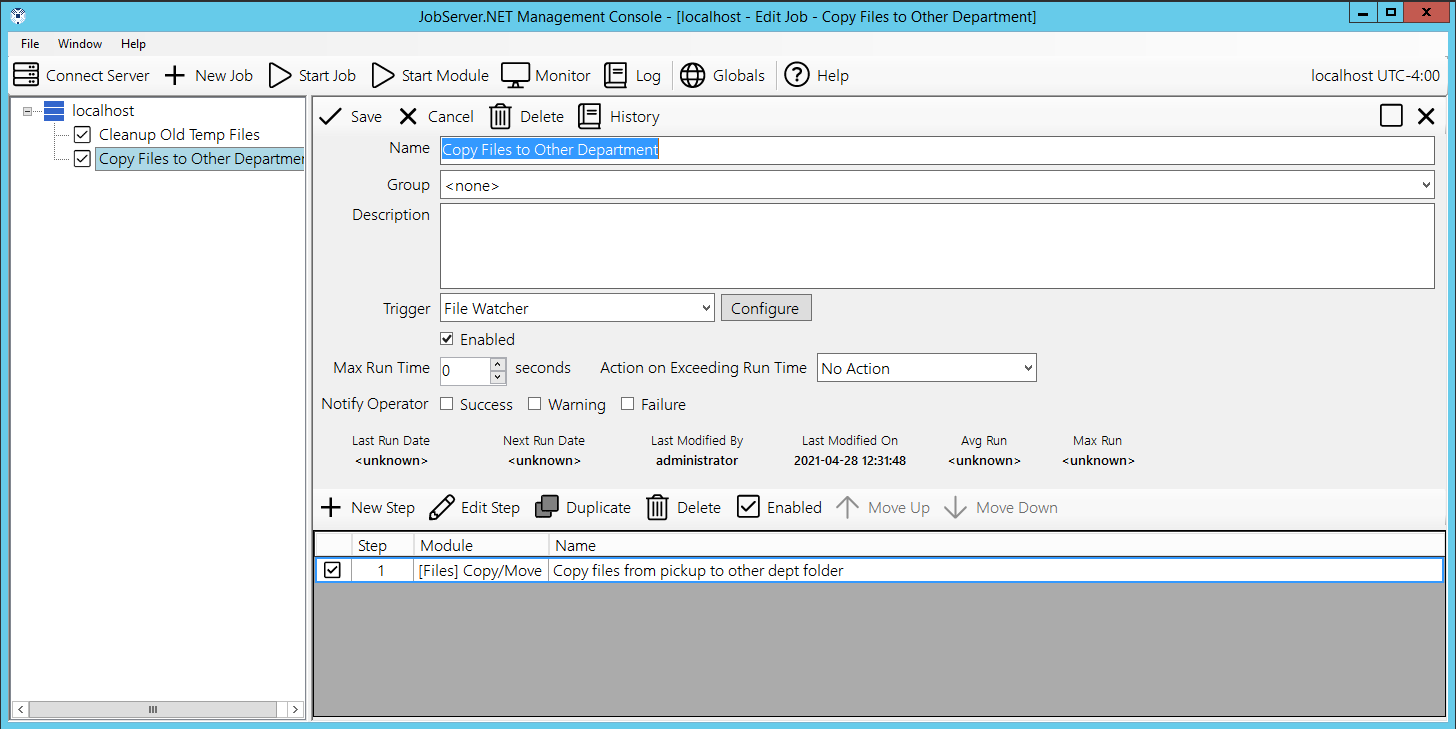

Creating an Event Based Job Definition

Apart from the Scheduler, the other triggers have something in common: they are all event-based triggers. An event-based trigger fires when something external to JobServer.NET occurs and the trigger detects the change, so an external event is what causes the job to start executing.

One of the most popular of the event-based triggers is the FileWatcher trigger. This trigger can watch for file activity in a specified folder and respond to file changes in it. This can be very handy for numerous situations. Maybe you want to take files that are dropped into a folder and automatically compress them into a ZIP file. Or, perhaps you have some legacy system that can generate a bunch of files in a folder, but you need a way to email them out automatically to a supplier or vendor for processing.

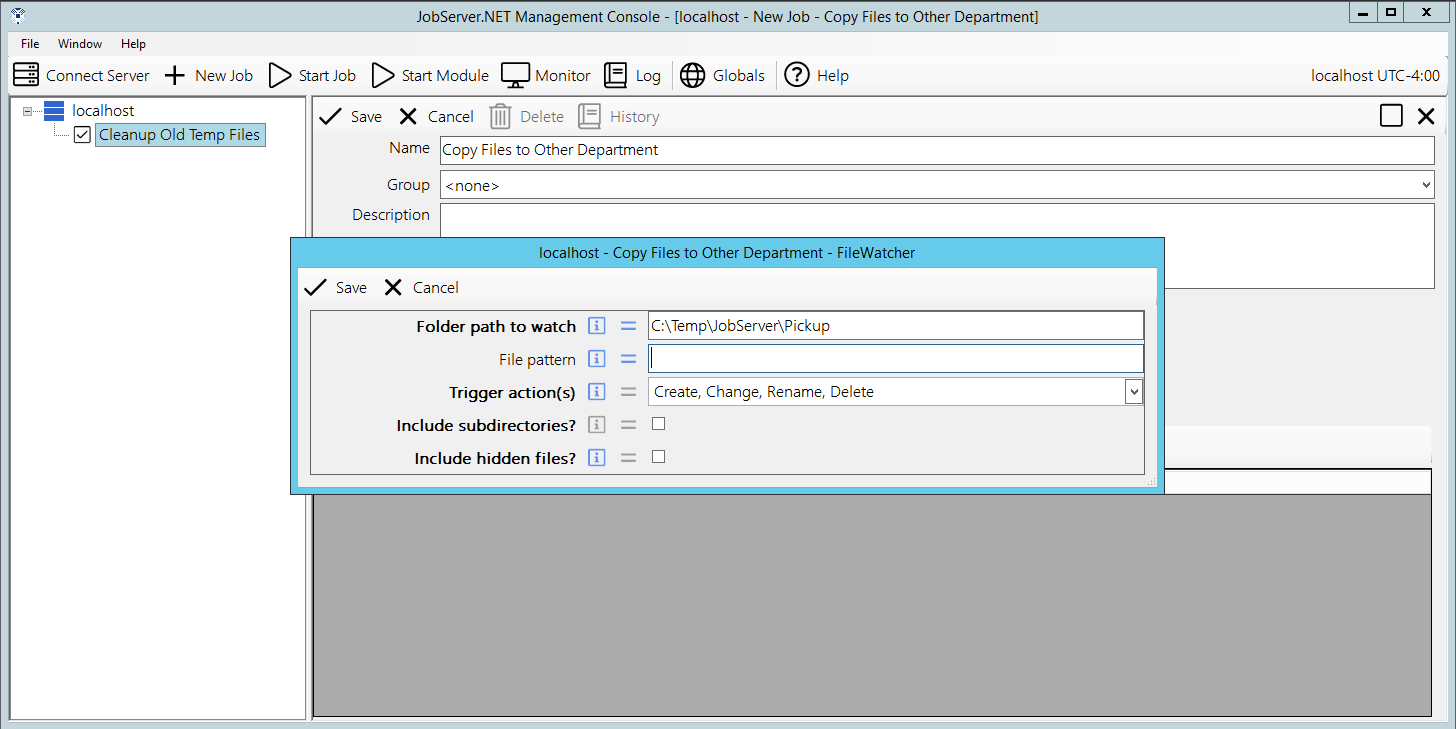

To start with creating an example FileWatcher, create a new job definition by right clicking your server and selecting New Job. When the new job form appears, change the Name to “Copy Files to Other Department”. Then go to the Trigger field and select FileWatcher. When the parameters dialog for FileWatcher appears, go to the Folder path to watch setting and add the value “C:\Temp\JobServer\Pickup”. Then in the Trigger actions field, select the All option. You can now click the Save button to finish creating the trigger.

Next, create a step for this job by clicking the New Step button. In the Module field, select the [Files] Copy/Move module. In the Name field, change the text to “Copy files from pickup to other dept”. Then, in the parameters, specify the File source as the example folder “C:\Temp\JobServer\Pickup”. Next, select the Copy option for the Copy or Move parameter. Finally, specify the Destination folder as “C:\Temp\JobServer\OtherDept”, then click the Save button to complete the settings for this step.

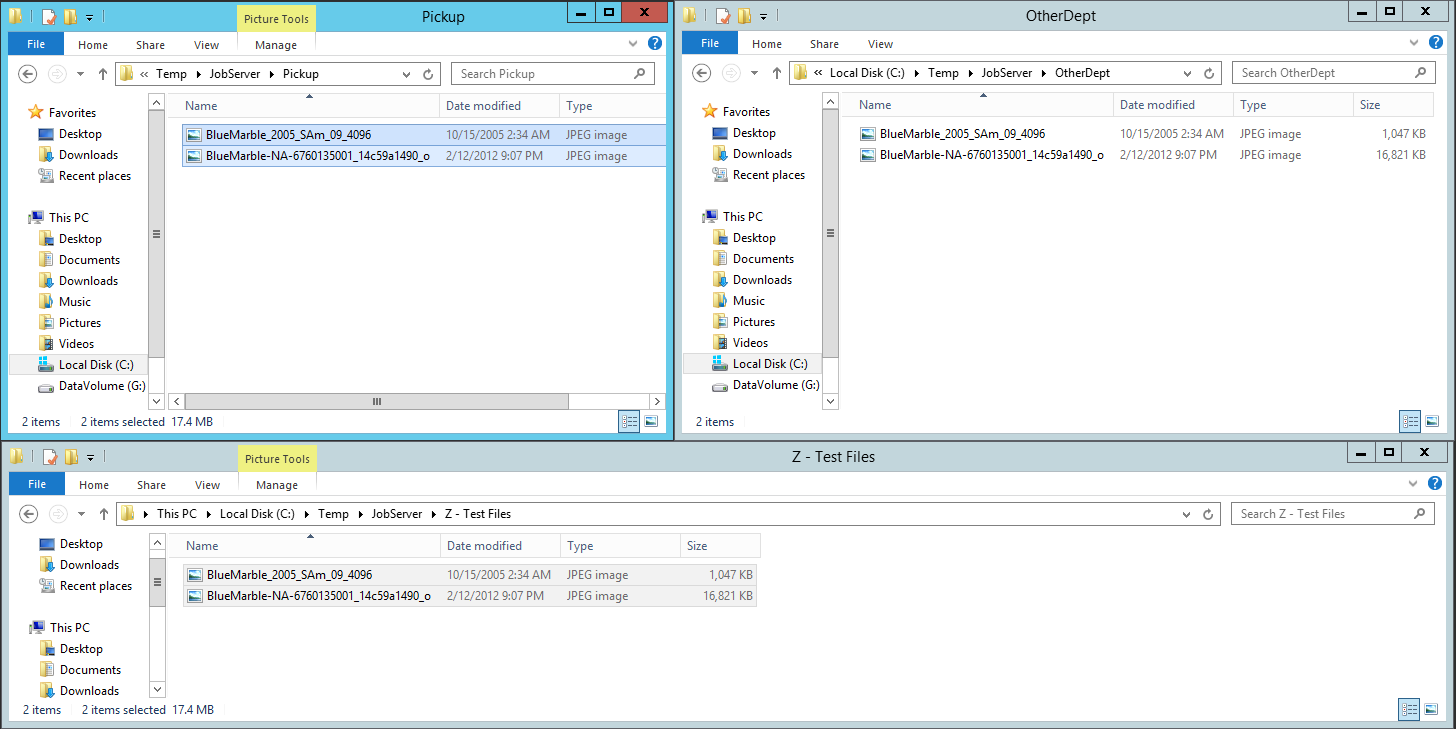

Finally, click the Save button on the job definition to create the new job. The job is now active, and any files you place into the Pickup folder will immediately be copied to the OtherDept folder. This works with files on local storage or on network paths. The only requirement is that the JobServer.NET service must have permissions to the filesystem where the files and folders are stored.
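The following Python sketch illustrates what the FileWatcher and [Files] Copy/Move combination accomplishes. It polls the Pickup folder and copies new or changed files to OtherDept. It is a simplified stand-in that uses polling rather than file system events, and it is not how JobServer.NET's FileWatcher is implemented. The function names are hypothetical. The sketch also does not wait for files that are still being written, which a production watcher would need to handle.

```python
import shutil
import time
from pathlib import Path


def snapshot(folder: Path) -> dict:
    """Map each file name in `folder` to (size, mtime) so new or changed files stand out."""
    result = {}
    for p in folder.iterdir():
        if p.is_file():
            st = p.stat()
            result[p.name] = (st.st_size, st.st_mtime_ns)
    return result


def copy_changed(source: str, destination: str, previous: dict) -> tuple:
    """Copy files that are new or changed since `previous`; return (copied names, new snapshot)."""
    src, dst = Path(source), Path(destination)
    dst.mkdir(parents=True, exist_ok=True)
    current = snapshot(src)
    copied = []
    for name, state in current.items():
        if previous.get(name) != state:
            shutil.copy2(src / name, dst / name)  # copy2 also preserves timestamps
            copied.append(name)
    return sorted(copied), current


def watch(source: str, destination: str, interval: float = 2.0) -> None:
    """Poll until interrupted (Ctrl+C); files already present at startup are not copied."""
    state = snapshot(Path(source))
    while True:
        time.sleep(interval)
        copied, state = copy_changed(source, destination, state)
        for name in copied:
            print(f"Copied {name}")
```

For the example folders, call `watch(r"C:\Temp\JobServer\Pickup", r"C:\Temp\JobServer\OtherDept")`.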

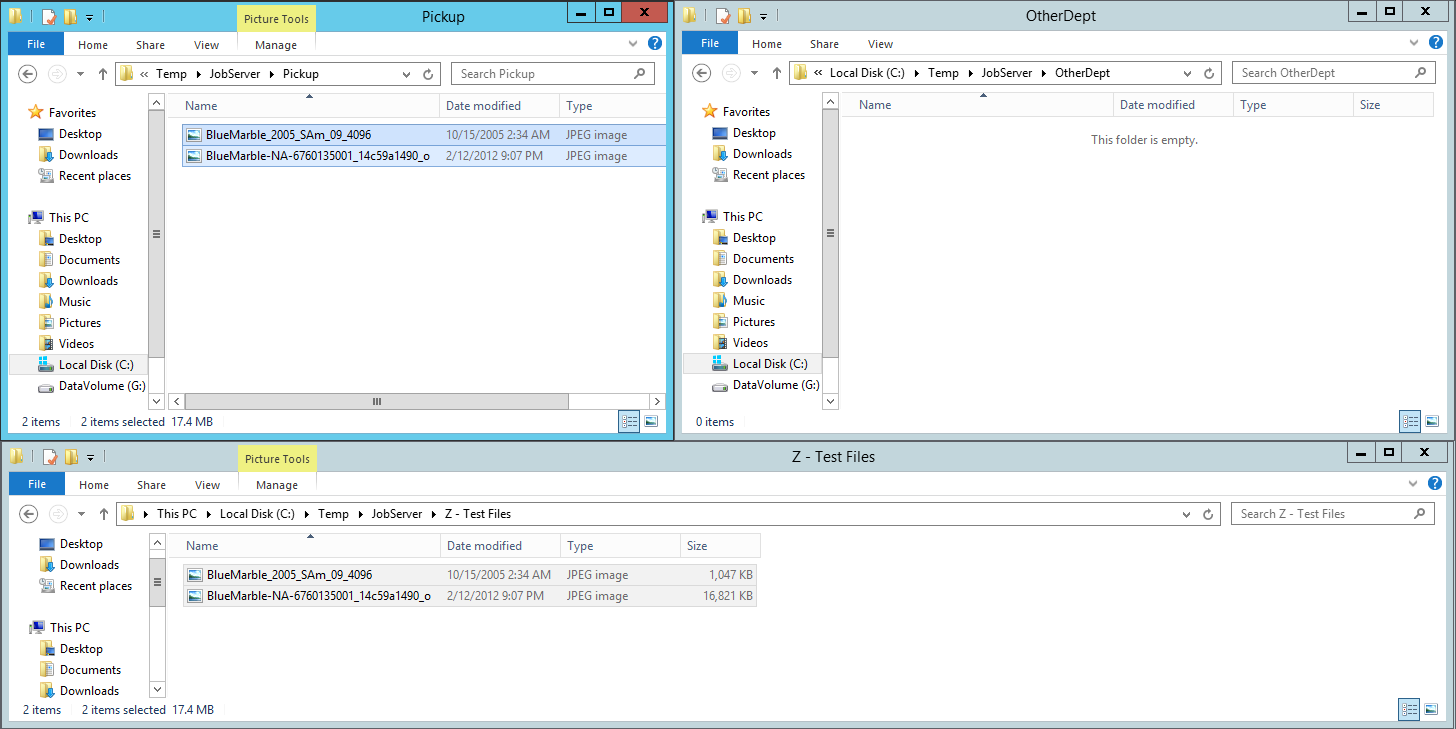

With the job defined and enabled, you can test this event-based job definition by putting some files into the folder it is watching (the source folder, named Pickup in the example steps above). The job is then triggered and copies the files to the configured target folder, named OtherDept in the example steps above. This happens quickly, so you will have to pay close attention to see it. To make this easy to follow, we arranged a few File Explorer windows so we could view all of them at once. The one at the bottom contains a few example files we are going to drag into the Pickup folder.

To test the newly configured job definition, you want to copy some files into the source folder you configured above. To do this, copy some files from another location and paste them into the “Pickup” folder that you defined as the source folder.

After the job executes, you can see that the files dropped into the source folder (Pickup) were automatically detected by the FileWatcher trigger and copied to the target folder (OtherDept).

Viewing Log History for Jobs

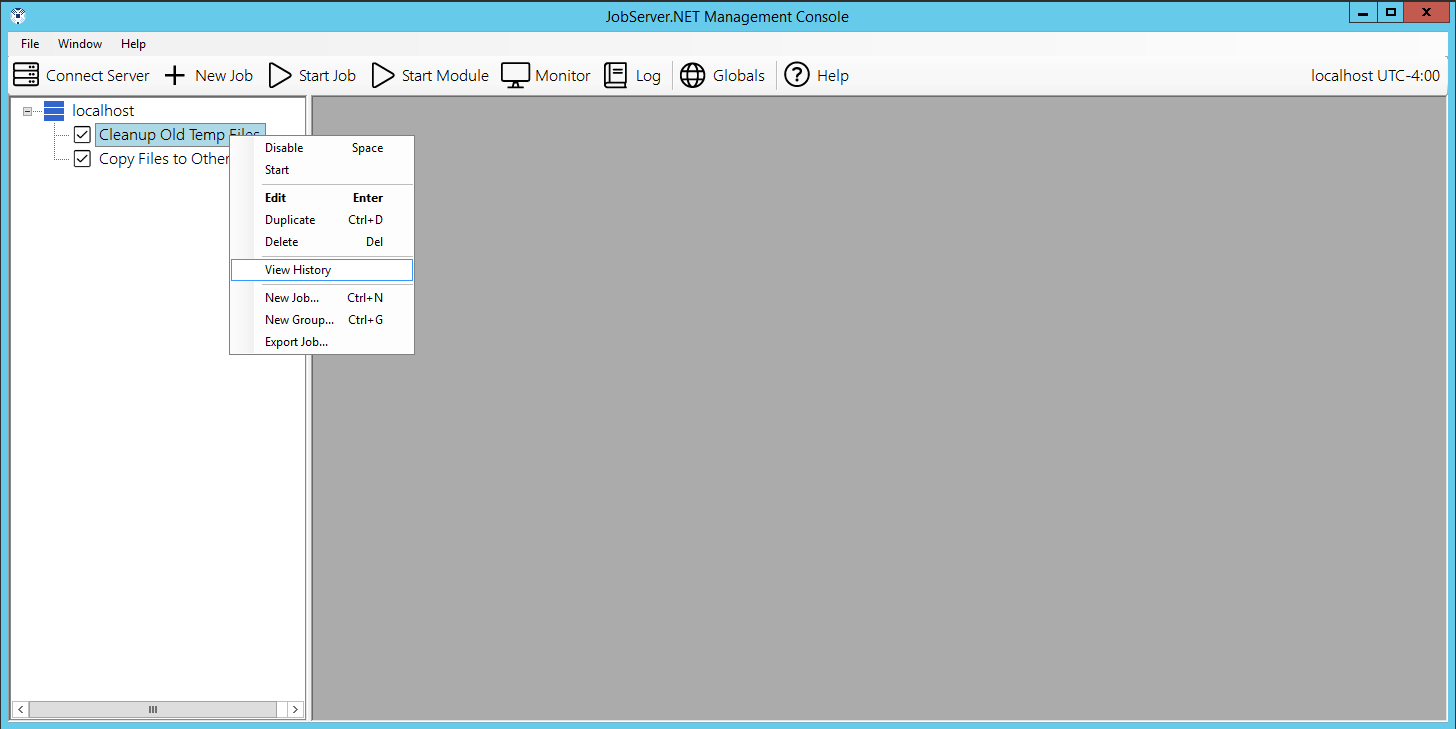

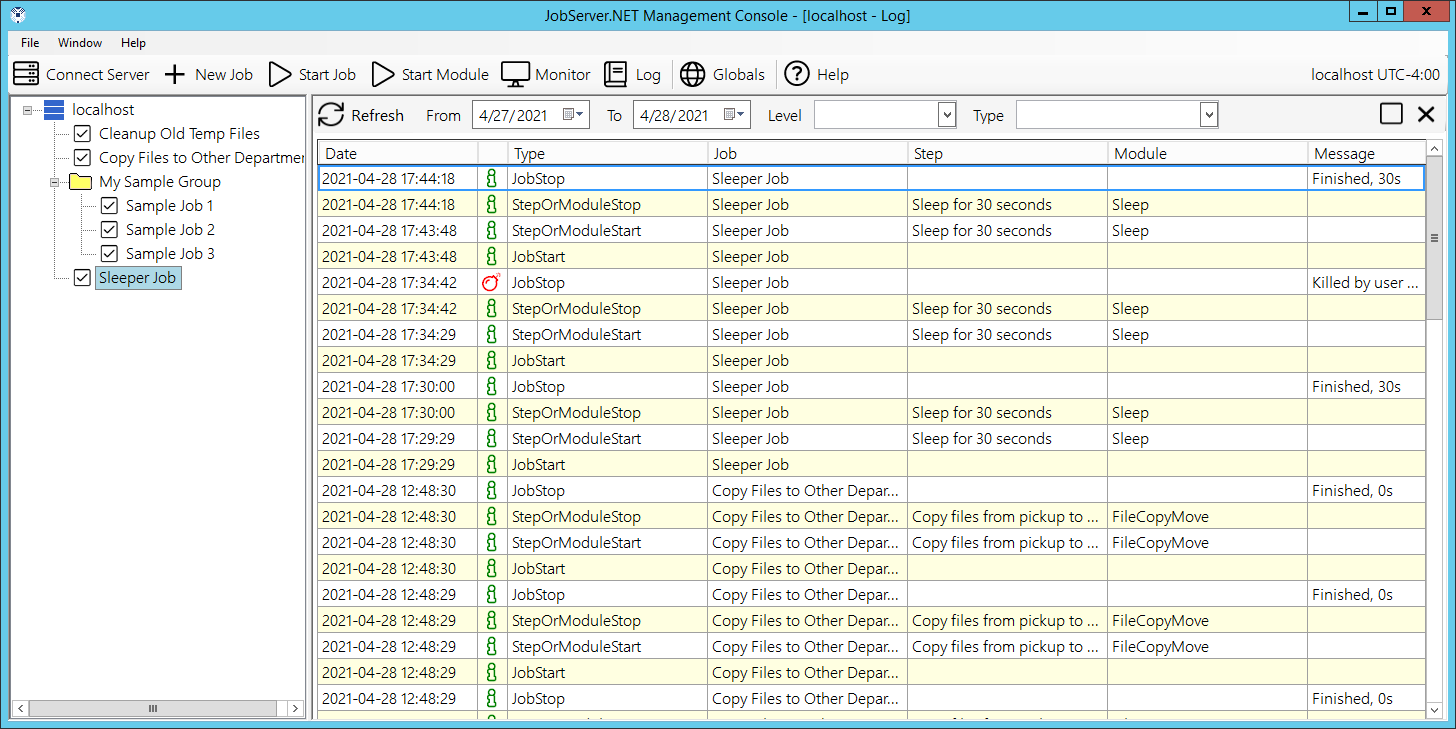

If you have followed through the steps above, you should now have some activity that JobServer.NET has performed recorded in the logs. You can now review this log activity to see what happened when these triggers launched the jobs and see their results. While the Log button on the main toolbar of the manager application allows you to see the entire log at once and filter down to any interesting data, there are other ways to quickly drill down to the relevant data you might want to see.

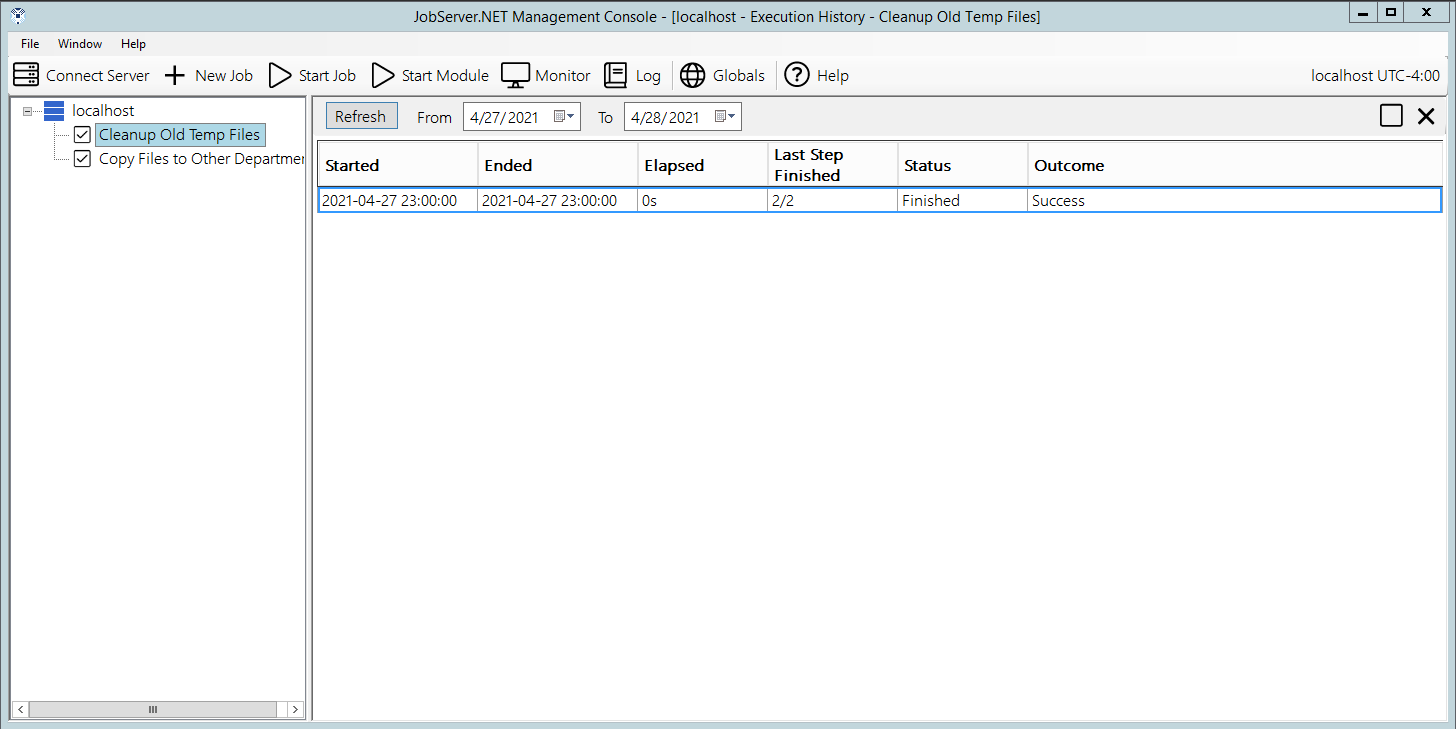

The best option for reviewing specific jobs is to right-click on the job in the navigation panel and pick the View History option.

Selecting this opens the Job History grid, which shows every time the job has been run.

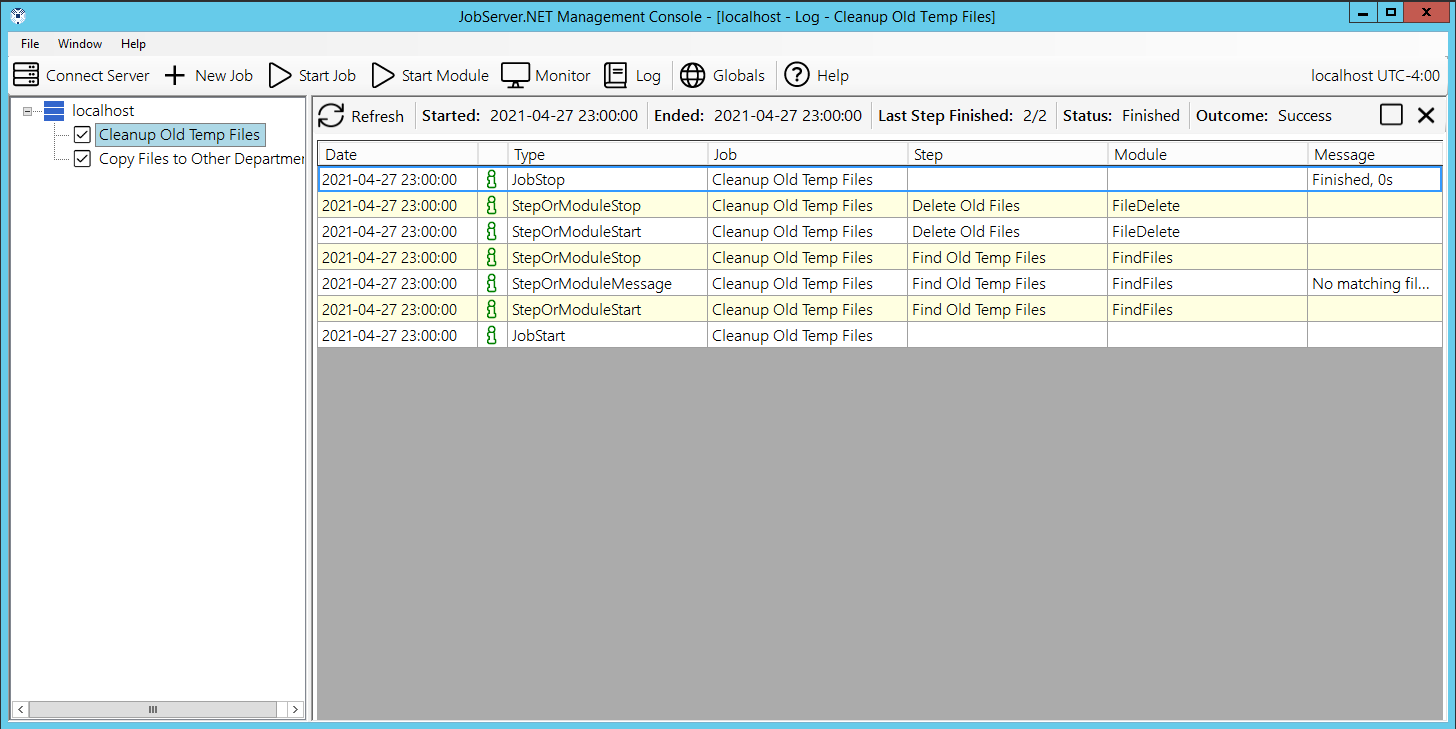

By double-clicking on any of the entries in the job history, you will be taken directly to a filtered list of just the log activity for that specific run of the job. This allows you to quickly drill down on a job’s activity and monitor what the job has done or to research any problems that might be recorded.

Job Definitions

If you followed along with the step-by-step example in the Getting Started section above, you should now have a basic familiarity with what a job definition is. To recap, a job definition is the combination of a trigger and the defined steps that are executed when the trigger detects the date/time or event it is expecting. A definition also includes several settings whose defaults are fine for most jobs; however, you can adjust many of them to refine the behavior of a running job.

The first of these is the Name field, which should be set to a concise value that describes the purpose of the job. The next field is Group, which we will skip for now since it is explained in the next section. Then comes the Description field. This is completely optional, but it is very useful when you have numerous jobs or complex jobs, as it gives you a place to document the job in detail. When multiple people manage the jobs, this can play a critical role in making sure your team members understand the nuances of each job.

The Trigger field is a drop-down control that lets you choose from any of the installed triggers. The first time you pick a trigger for a new job definition, or whenever you change the trigger, the configuration options for the chosen trigger are shown automatically. Once a trigger has been chosen, you can update its configuration at any time by clicking the Configure button next to it. Next to the Configure button, a readable text description of the trigger's current configuration is displayed.

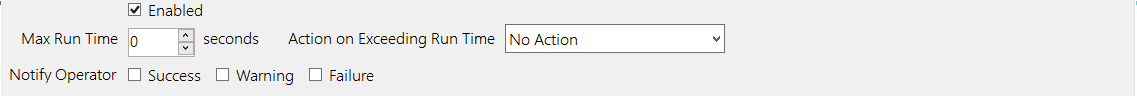

The Enabled checkbox shows whether the job definition is currently enabled. When the job is disabled, it will not be executed regardless of the trigger. Jobs can also be enabled or disabled using the pop-up menu options in the navigation panel list. The Max Run Time field defaults to a value of zero, which means JobServer.NET will allow the job to run for as long as it needs to. If a job needs to be limited to a set amount of time, specify that limit here as a total number of seconds. If the max run time is exceeded, the next field, Action On Exceeding Run Time, specifies the action JobServer.NET will take. The Notify DevOps option uses the configured Notification Settings to send a message about the job's condition. The Terminate the Job option causes JobServer.NET to force the running job to stop. Most jobs should stop safely and will report their status in the log activity.
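The Max Run Time rules above can be summarized as a small decision function. This is a minimal Python sketch, not JobServer.NET's implementation: the `ExceedAction` enum and `check_run_time` function are hypothetical names, and treating only an elapsed time strictly greater than the limit as "exceeded" is an assumption.

```python
from enum import Enum
from typing import Optional


class ExceedAction(Enum):
    # Hypothetical stand-ins for the Action On Exceeding Run Time options.
    NOTIFY_DEVOPS = "Notify DevOps"
    TERMINATE_JOB = "Terminate the Job"


def check_run_time(elapsed_seconds: float, max_run_time: int,
                   action: ExceedAction) -> Optional[ExceedAction]:
    """Return the configured action once the limit is exceeded, else None."""
    if max_run_time == 0:
        # Zero (the default) means the job may run for as long as it needs.
        return None
    if elapsed_seconds > max_run_time:
        # Assumption: "exceeded" means strictly longer than the limit.
        return action
    return None
```

For example, `check_run_time(90, 60, ExceedAction.NOTIFY_DEVOPS)` returns `ExceedAction.NOTIFY_DEVOPS`, while any elapsed time with a limit of 0 returns `None`.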

The next set of fields, Notify Operator, provides a checkbox for each of the conditions a job can end with. If you want a notification sent on any of these conditions, check the box or combination of boxes you want notifications for. The final set of fields in this area of the job definition shows statistics on the history of this job. For new jobs, most of these will naturally be blank. Once a job has run one or more times, these statistics are updated to reflect its previous executions. Note that for long-standing jobs, the Average and Max run time statistics are based on the log activity JobServer.NET has on hand. Depending on how often and how much log activity all the jobs on a machine generate, the logs are automatically pruned over time, so these values reflect only the log data the system still holds.

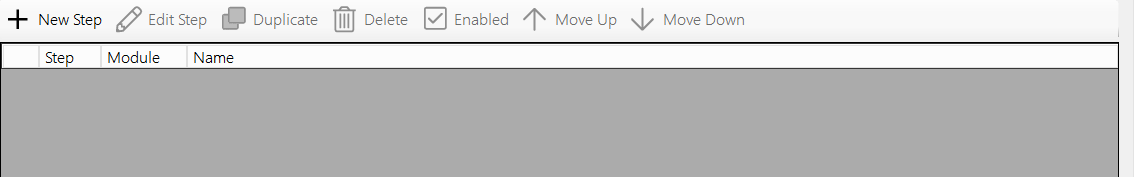

The bottom section of the job definition is the Step Editor. Each step consists of one module that may perform one or more actions with the parameters passed to it. Steps are performed in sequence and can be chained together, with data or parameter values passing from one to the next. The toolbar at the top of the step editor lets you create and modify all the steps. The New and Edit step buttons should be self-explanatory, with the caveat that new steps are always added to the bottom of the list. Double-clicking a step in the grid is the same as clicking Edit for that step. Apart from New, all the buttons operate on a specific step in the grid, so before using any of them, make sure your desired step is highlighted. The Duplicate button makes a new copy of the currently selected step. The Delete button permanently removes a step from the job definition. If you do not want a step to be executed but also do not want to delete it, use the Enabled button to disable or enable that step. Finally, the Move Up and Move Down buttons change the order of the steps by moving the currently selected step up or down in the list.

While a job definition is being edited, it is locked against modification from any other source. Therefore, we recommend not leaving the job definition editor open longer than needed to make your changes. This also means that if someone else with the manager application installed has a job definition open, you cannot edit that job definition until they close it (optionally saving their changes).

Managing Related Jobs Using Groups

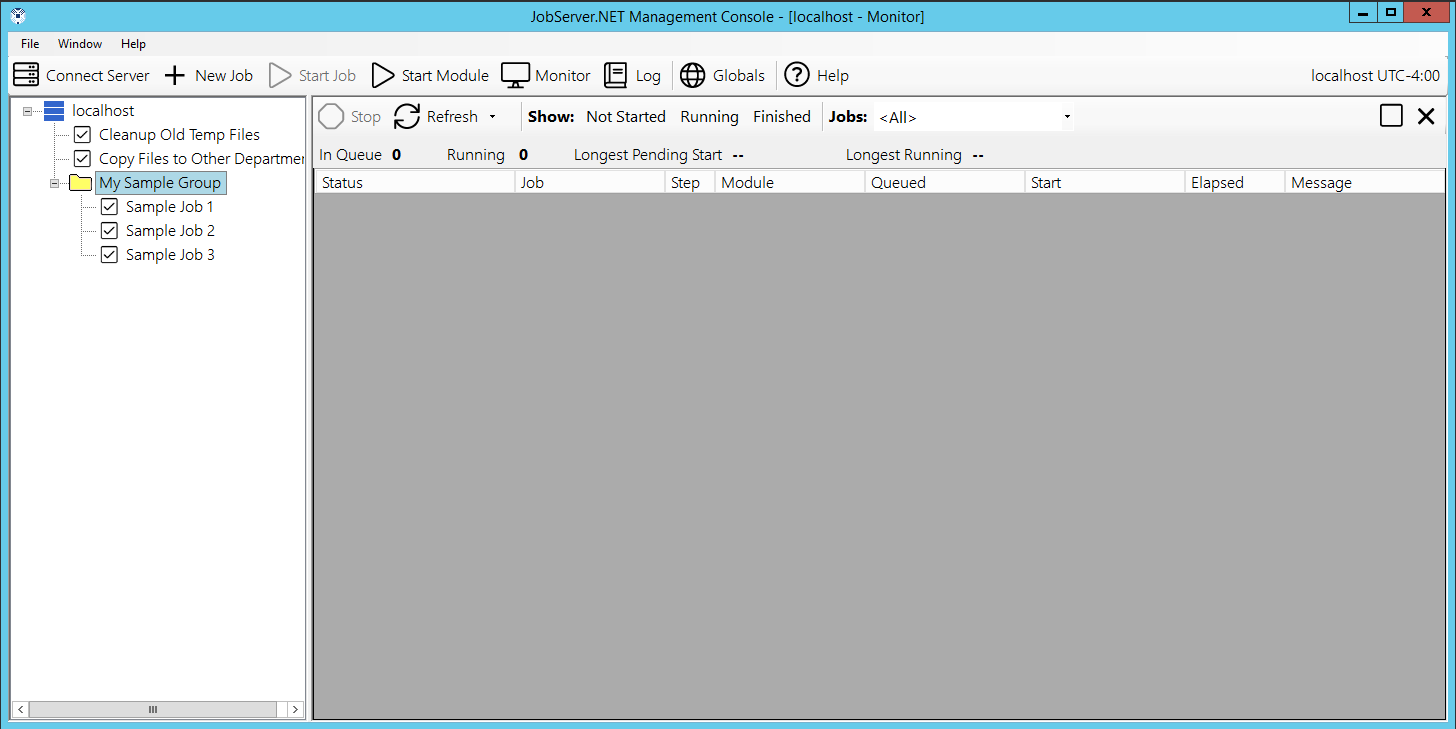

The obvious way to manage the job definitions on your server is to give them good, accurate names. However, as you create more jobs over time and find more ways for JobServer.NET to automate and enable various processes, you may need another way to manage related jobs. You can use the Groups feature to organize jobs that share some common element. Groups appear as folders in the navigation panel of the manager application. To create your first group, right-click on the server and select New Group from the pop-up menu. Type in a name for the group, and you will see it added to the list under the server.

Once you have created a group, you can create new job definitions in it by right-clicking on the group's folder icon and selecting New Job from the pop-up menu. You can also drag and drop job definitions in the server list to add them to or remove them from the group. Additionally, now that at least one group is defined, you can move a job definition you are editing by selecting or changing its Group option from the list of available groups.

Group membership does not affect any of the operating conditions or parameters of a job, so you can add, rename, or delete a group at any time without any effect on running or pending jobs. If you attempt to delete a group that contains job definitions, you are given the option to move the definitions out of the group before deleting it, or to delete all the definitions along with the group.

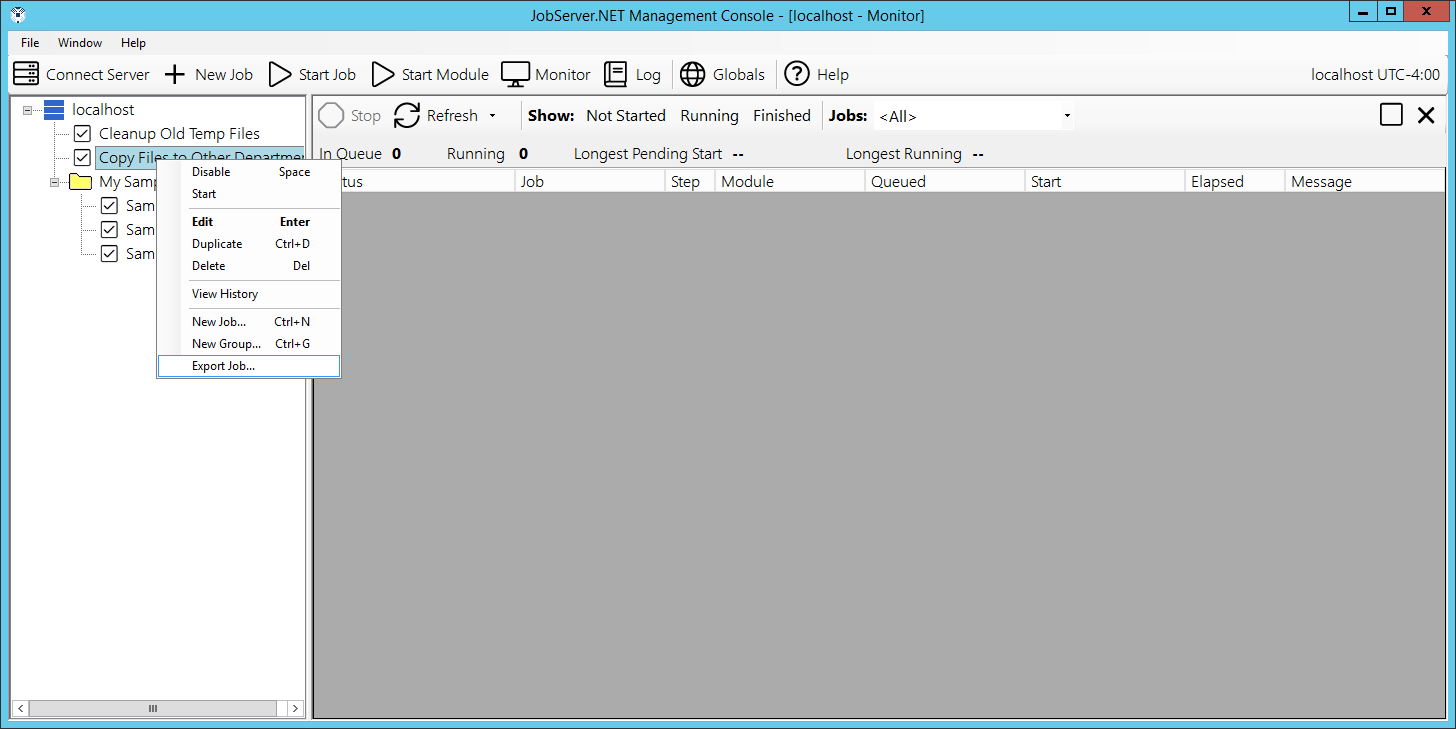

Exporting Jobs

A job definition can be exported to an external file. This export option can be used for a variety of purposes. One reason you might want to export a job is to preserve a job definition before making any extensive changes to it. Or, you can export the definition and then copy the exported job definition file (.job) to another server to import, thus replicating the job from one server to another. To export a single job definition, just right-click on the job in the server list and select the Export Job option.

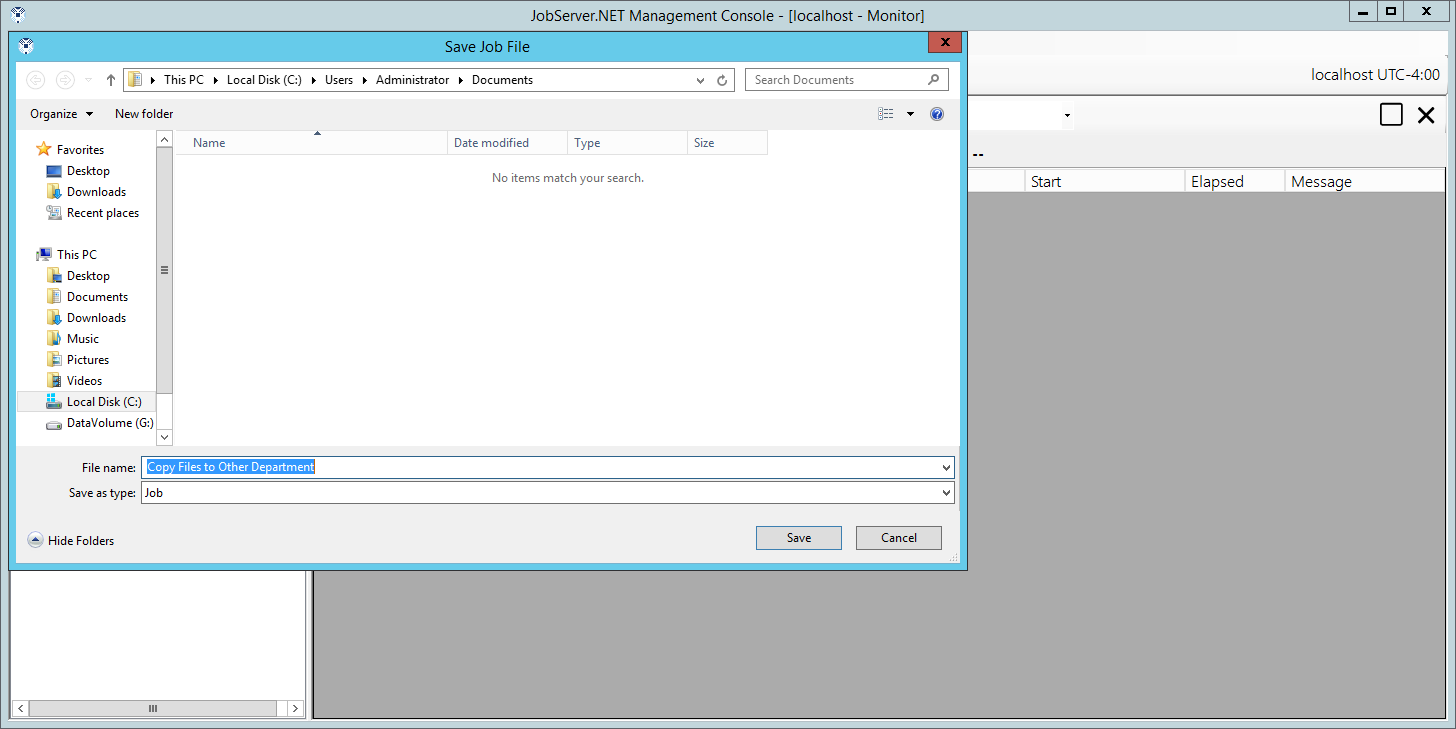

The file name defaults to the name of the job definition, but you can change it if desired before clicking Save.

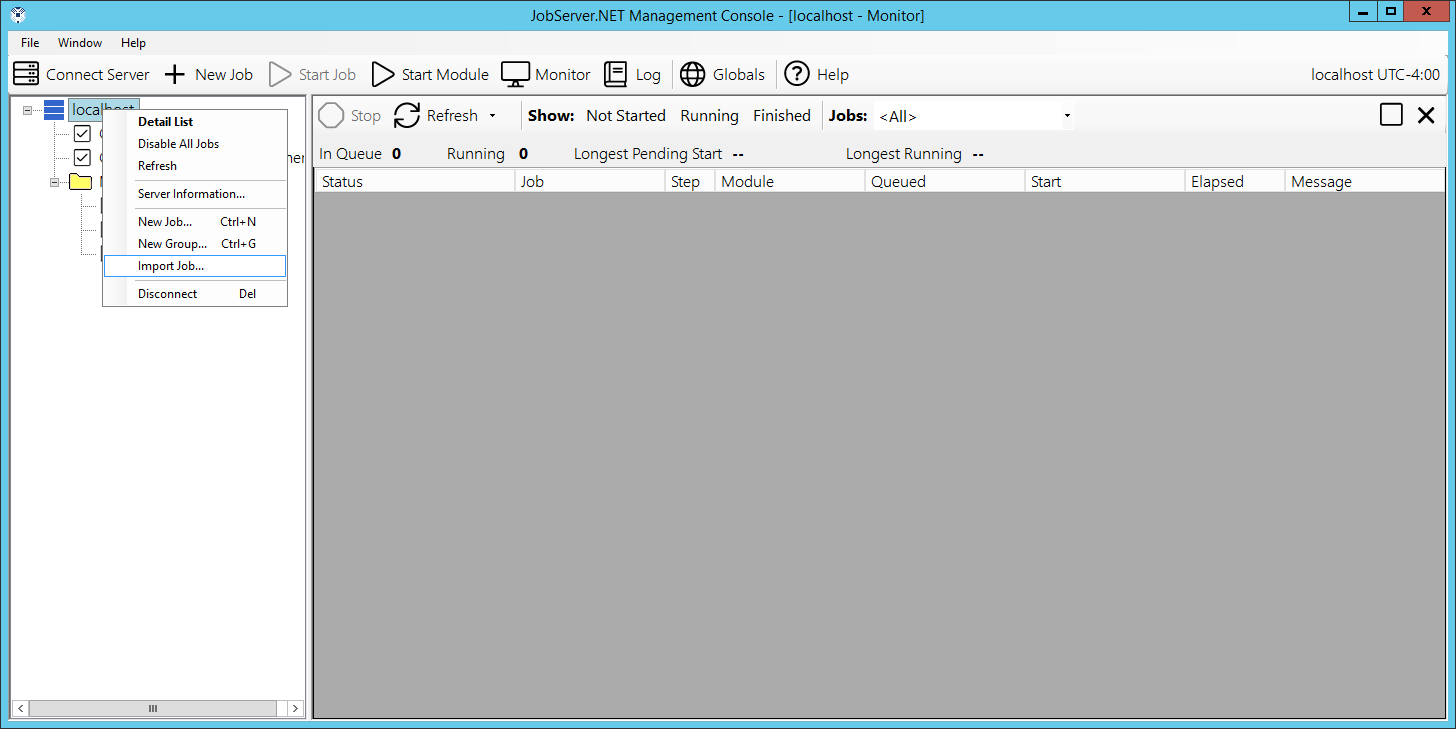

Importing Jobs

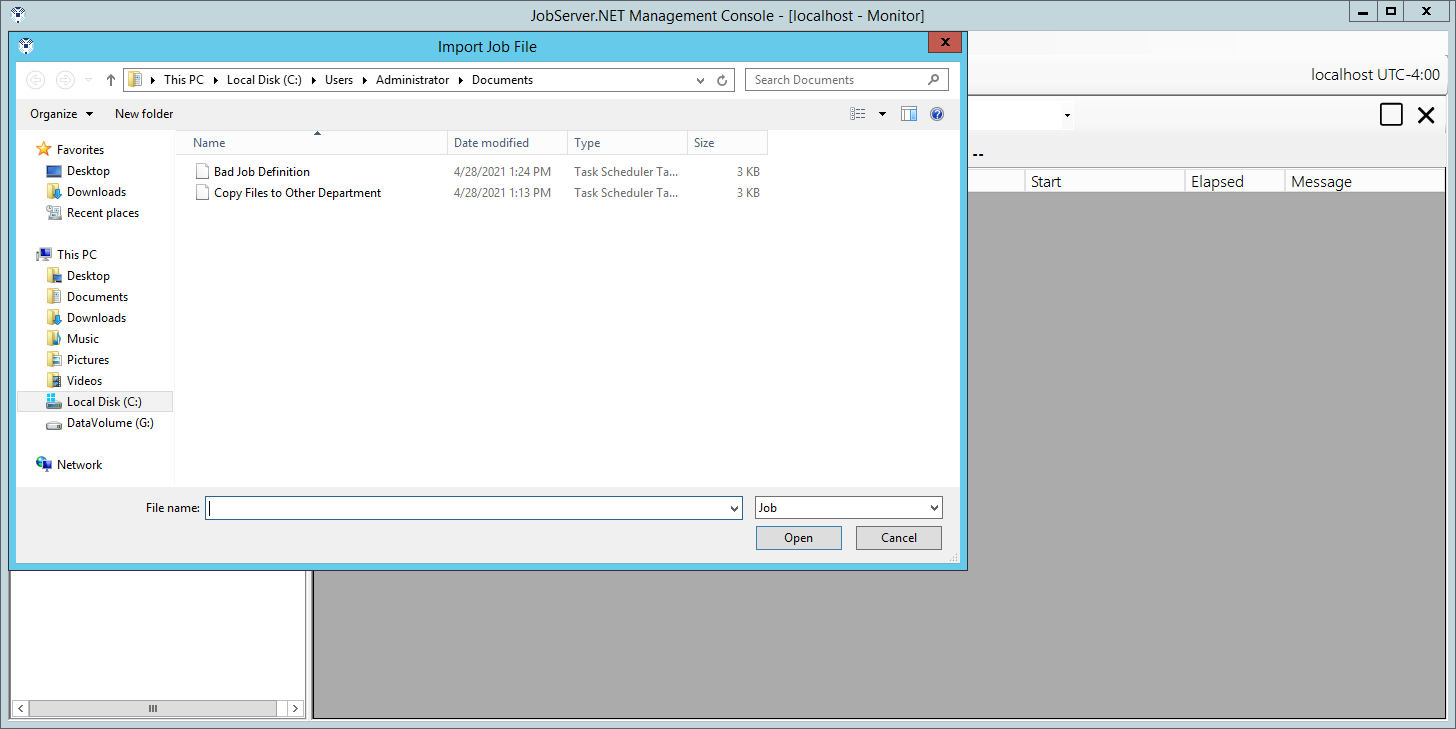

Importing a job definition is as simple as exporting. To import a job to the JobServer, right-click on the server if you want to import the job to the general list of job definitions and pick the Import Job option. If you want to import the job to a specific group, then right-click on the group folder before selecting the import option.

The import process first opens a local file dialog, where you choose the job definition file you would like to import.

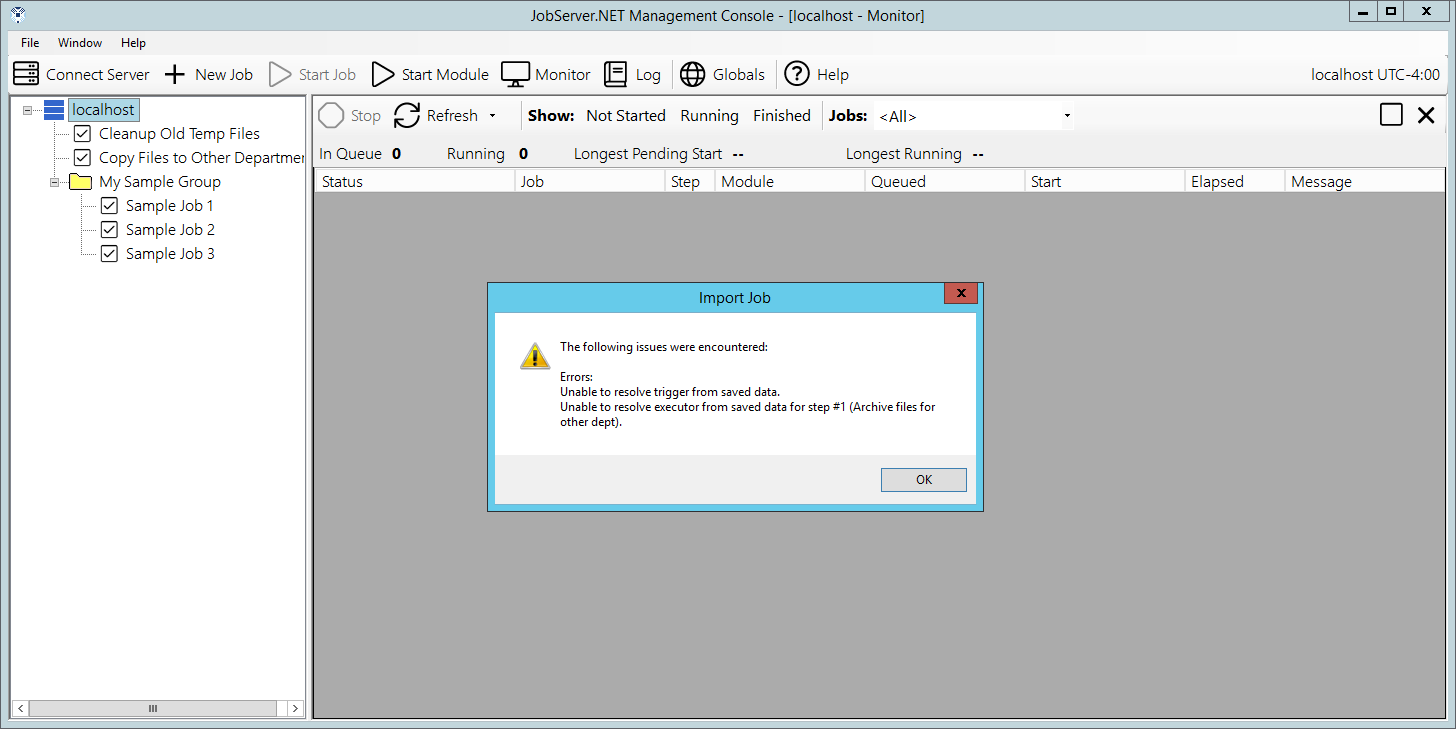

Once you import a job definition, open it and make certain no problems have been detected with it. If a problem is detected, you will see the error conditions in its trigger or modules that must be resolved before the job can become active. Possible problems with an imported job include a module parameter whose name has changed, or a new required parameter that has been added. In cases like this, check the list of errors shown and fix the parameter(s) showing an error condition. If there is a problem importing the job definition itself, it will normally be detected immediately, and one or more error messages from the import process will be shown.
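The two problems described above, a renamed parameter and a newly required one, can be illustrated by comparing an imported step's parameters with the parameters the installed module expects. This is a hypothetical Python sketch of the idea, not how JobServer.NET performs its import checks; `ModuleSpec`, `validate_step`, the module and parameter names in the usage note, and the message wording are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ModuleSpec:
    # Hypothetical summary of an installed module's parameter list.
    name: str
    required: set[str]
    optional: set[str] = field(default_factory=set)


def validate_step(params: dict[str, str], spec: ModuleSpec) -> list[str]:
    """Compare an imported step's parameters with the installed module's."""
    supplied = set(params)
    known = spec.required | spec.optional
    errors = []
    # A new required parameter added to the module shows up as missing.
    for name in sorted(spec.required - supplied):
        errors.append(f"{spec.name}: missing required parameter '{name}'")
    # A renamed parameter shows up under its old, now unknown, name.
    for name in sorted(supplied - known):
        errors.append(f"{spec.name}: unknown parameter '{name}'")
    return errors
```

With a hypothetical module that requires `Target` and `Mode`, a step that still uses an old name such as `Destination` is reported twice: once as a missing required parameter and once as an unknown name.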

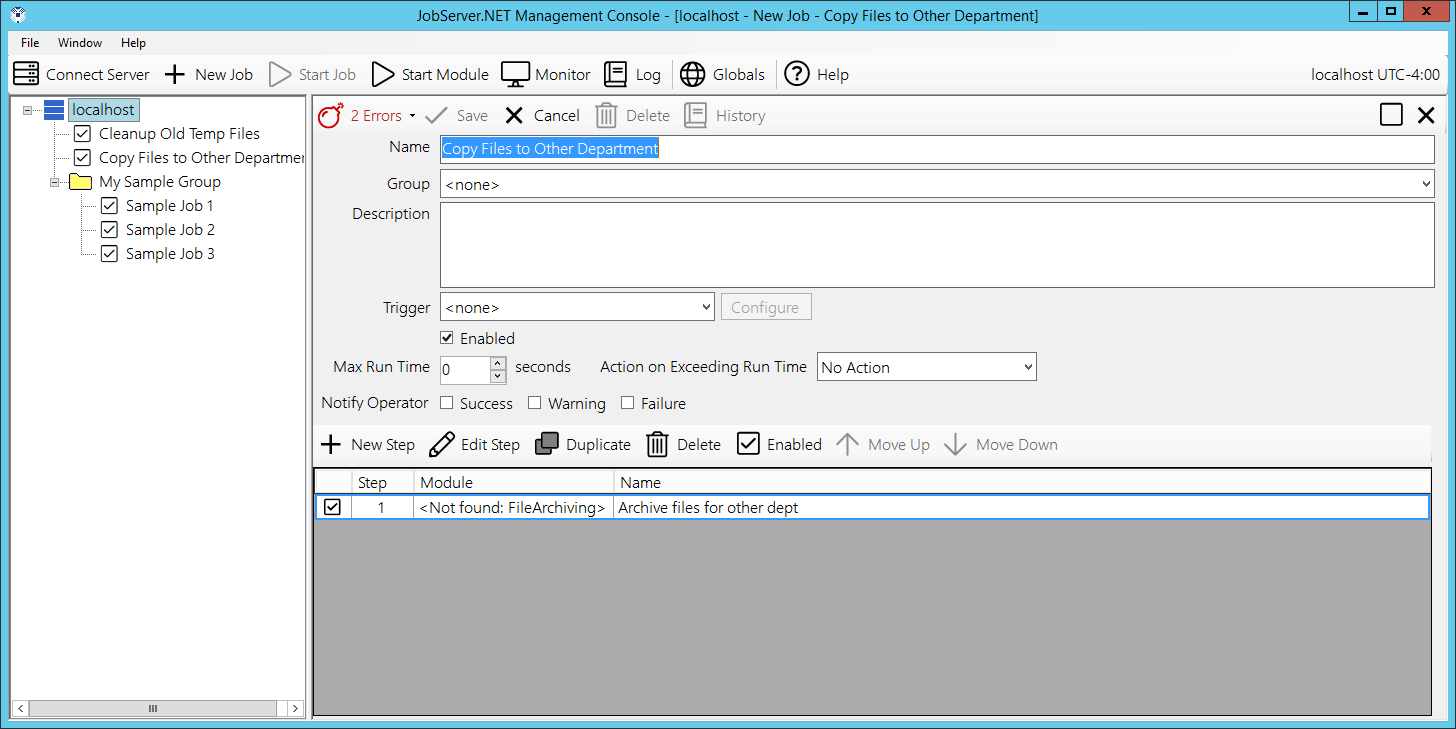

When a JobServer is being re-installed on a new server, or job definitions are imported from a different JobServer, the server receiving the definition may not have a module installed that existed on the previous server. Thus, when running multiple JobServers, make sure the same modules are installed on every server you import or export the same job definitions to. When a job definition uses a module that is not installed on the JobServer it is imported to, an import error occurs that looks like the following screen. Note how the module name is displayed with a “not found” prefix in the steps.

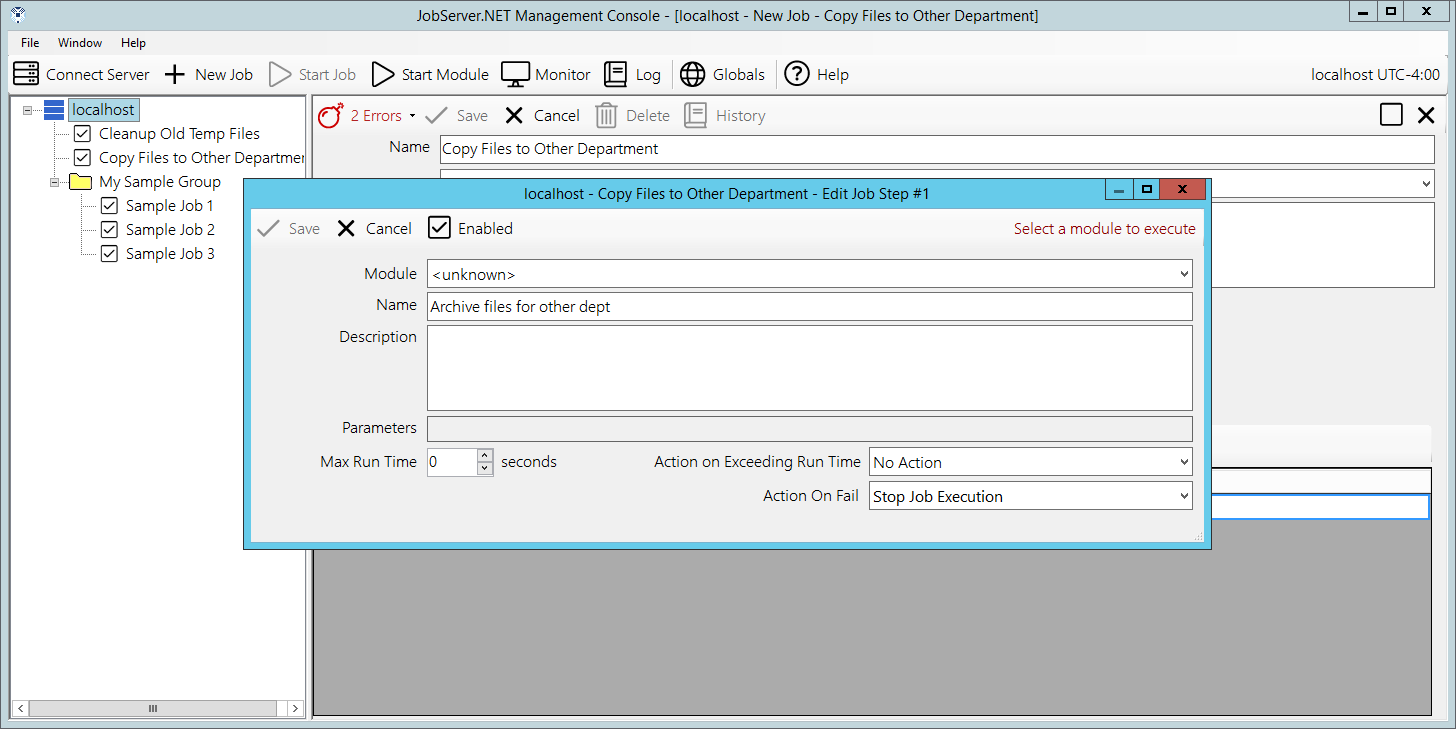

When this condition occurs, you will most likely need to cancel editing the imported job and install the missing module(s) before proceeding any further. Otherwise, if the module is an old one that is no longer needed, or has been replaced by one or more newer modules, the job definition simply needs to be edited and updated as appropriate, or the affected steps removed and replaced with the new modules and parameters. If you edit a step showing a “not found” module, the module field will show the value “unknown” where the module name would normally be, as illustrated in the following example.

Job Triggers

Now that you have walked through the examples in the prior sections, a more formal introduction to job triggers should help clarify the components available to you. As you may have already determined, the trigger defines what causes the JobServer to start executing a new job for a given job definition. A job definition does not even need to have a trigger assigned. As we will see later, you may want to define a job that you run only on a manual basis. If no trigger is assigned, the JobServer will not start that job definition unless it is launched manually through the manager application. Otherwise, a job definition only ever has one trigger defined for it at any one time. Triggers and the different types of triggers are covered in more detail in later sections.

Job Steps

Job Steps are performed in sequence once a new job begins executing. A job definition can have nearly any number of steps. Each step serves as a container for a Job Module and holds the settings that control it. The steps, and the modules used within each, perform the actual work of any given job.

Once you begin defining steps for a job, you can arrange the sequence in which they are executed, enable or disable specific steps, and duplicate a step when a new step will be very similar to an existing one apart from a few configuration changes.

Job Modules

Job Modules are premade components that perform a specific task or function. You can piece these modules together in various ways to complete a more complex overall operation. The modules perform work based on the parameters and other input you supply, which are defined using the steps previously mentioned. The examples in prior sections showed only a few modules and basic ways they can be used. All the included modules are described in further detail in later sections.

Connections

Up to now, you have seen a few different types of input parameters that the various modules may use. One type of parameter some modules can take is a Connection. A connection is usually a group of settings that defines how a module communicates with some other device or service. For example, one of the first types of connections we will look at is the SMTP Connection, which defines how modules can send email messages.

What makes connections so useful is that they are generally used across multiple modules and are securely contained and managed in one place. What makes this more important in terms of security is that connections are not stored in JobServer in plain text. They are encrypted so that only authorized users of JobServer, and the job definitions that use them, have access to this sensitive information. And if the credentials for a connection change, you can quickly update them in one central place.

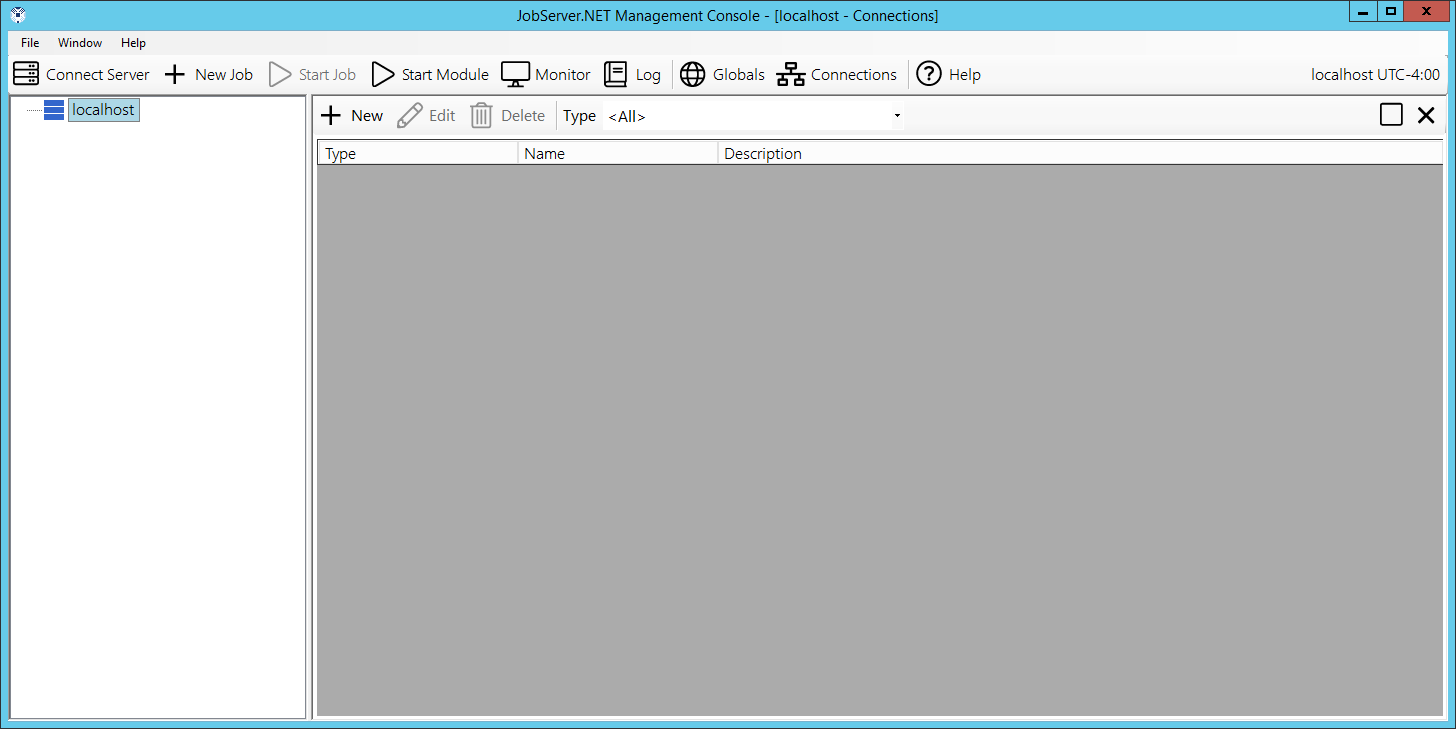

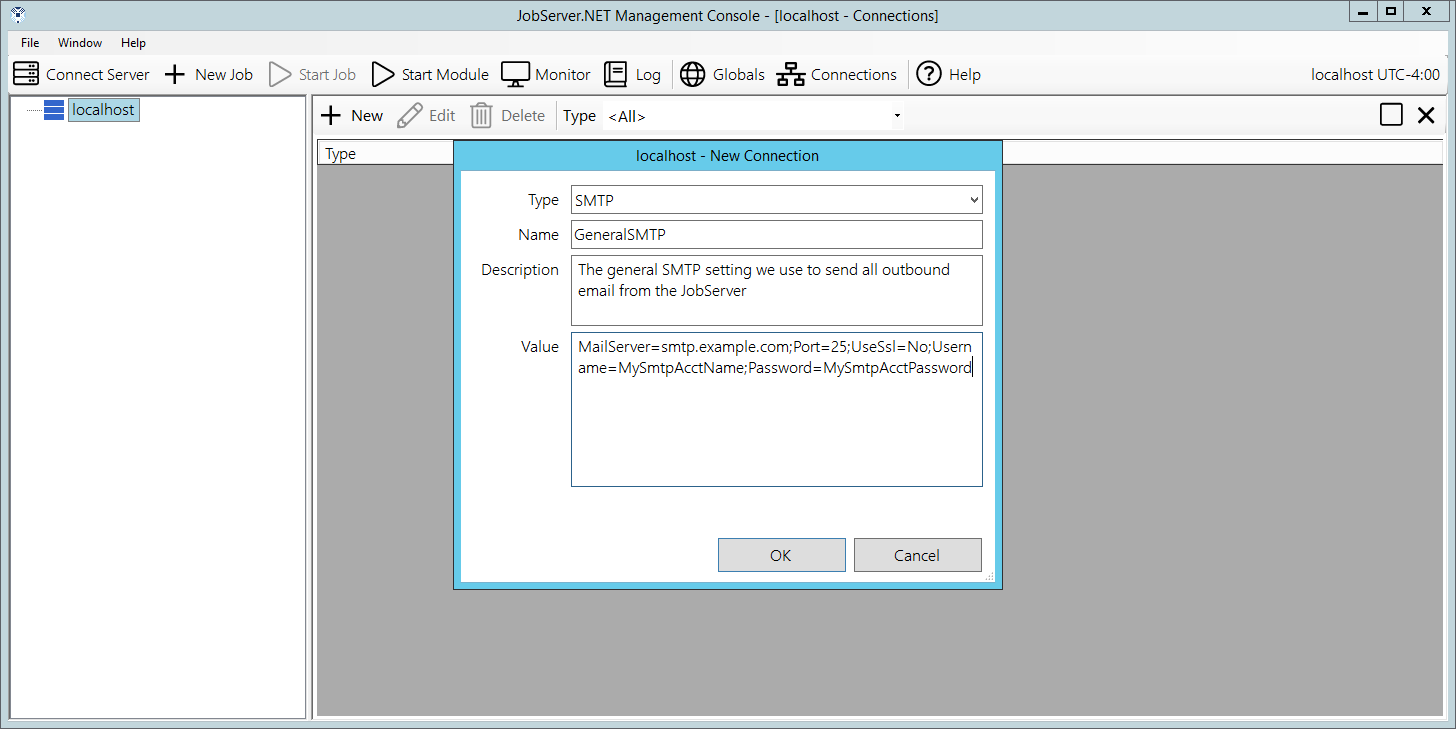

To edit your connections, click the Connections button on the right side of the main toolbar in the manager application to open the Connections window. Initially the Connections window will not list any entries, so add your first entry by clicking the New button at the top of the window. This opens the Connection Item Editor, where you can create a new connection entry. Start by configuring an email (SMTP) connection that is appropriate for sending email from your network.

Start off by selecting the SMTP option from the Type option list. Give it an appropriate name such as GeneralSMTP and then you can optionally fill in a description for this connection. Finally, you should notice that the Value field has been pre-populated with a text template of values which is valid for the selected connection type. Fill in the values for this SMTP Connection using the following description as a guide.

The settings for the SMTP Connection are described in the following table. Change these values to match a valid email server for your network.

| Name | Description |
|---|---|
| MailServer | The server name or IP address of the sending email server. |
| Port | The port to use when connecting to the email server. If this setting is not specified, it defaults to port 25. |
| Username | If the email server requires authentication, this should be the username for the account to use. |
| Password | If the email server requires authentication, this should be the password for the account specified. |
| UseEncryption | If the email server supports or requires secure transport using SSL/TLS encryption, this should be set to Yes; otherwise, No. |
| FromAddress | The email address that outbound messages are sent on behalf of, or appear to come from. |
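To show what each setting controls, the following Python sketch maps the table's values onto the standard library's `smtplib` client. It is an illustration under stated assumptions, not how the [Email] Send Message module is implemented: the connection is modeled as a plain dictionary keyed by the setting names (the actual Value template format is not reproduced here), and UseEncryption=Yes is assumed to mean STARTTLS on the chosen port rather than implicit SSL. The function names are hypothetical.

```python
import smtplib
from email.message import EmailMessage


def resolve_smtp_settings(conn: dict[str, str]) -> dict:
    """Normalize connection values; Port falls back to 25 as documented."""
    return {
        "host": conn["MailServer"],
        "port": int(conn.get("Port") or 25),
        "username": conn.get("Username") or None,
        "password": conn.get("Password") or None,
        "use_tls": conn.get("UseEncryption", "No").strip().lower() == "yes",
        "from_addr": conn["FromAddress"],
    }


def send_message(conn: dict[str, str], to_addrs: list[str],
                 subject: str, body: str) -> None:
    """Send one message using the (hypothetically modeled) connection settings."""
    s = resolve_smtp_settings(conn)
    msg = EmailMessage()
    msg["From"] = s["from_addr"]
    msg["To"] = ", ".join(to_addrs)
    msg["Subject"] = subject
    msg.set_content(body)
    with smtplib.SMTP(s["host"], s["port"], timeout=30) as smtp:
        if s["use_tls"]:
            smtp.starttls()  # assumption: UseEncryption=Yes means STARTTLS
        if s["username"]:
            # Authenticate only when the server requires it (Username is set).
            smtp.login(s["username"], s["password"] or "")
        smtp.send_message(msg)
```

Keeping the normalization in its own function makes the defaults (such as Port 25 when no port is given) easy to check without contacting a mail server.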

Global Variables

If you have been going through the various sections of the documentation and following along with some of the examples shown, you should now be familiar with the idea that both triggers and modules can take a variety of input parameters. If you have many job definitions, some of which are closely or directly related to each other, you may find that you are using the same settings for various parameters repeatedly. This is one of the key situations where you will want to take advantage of the Global Variables feature.

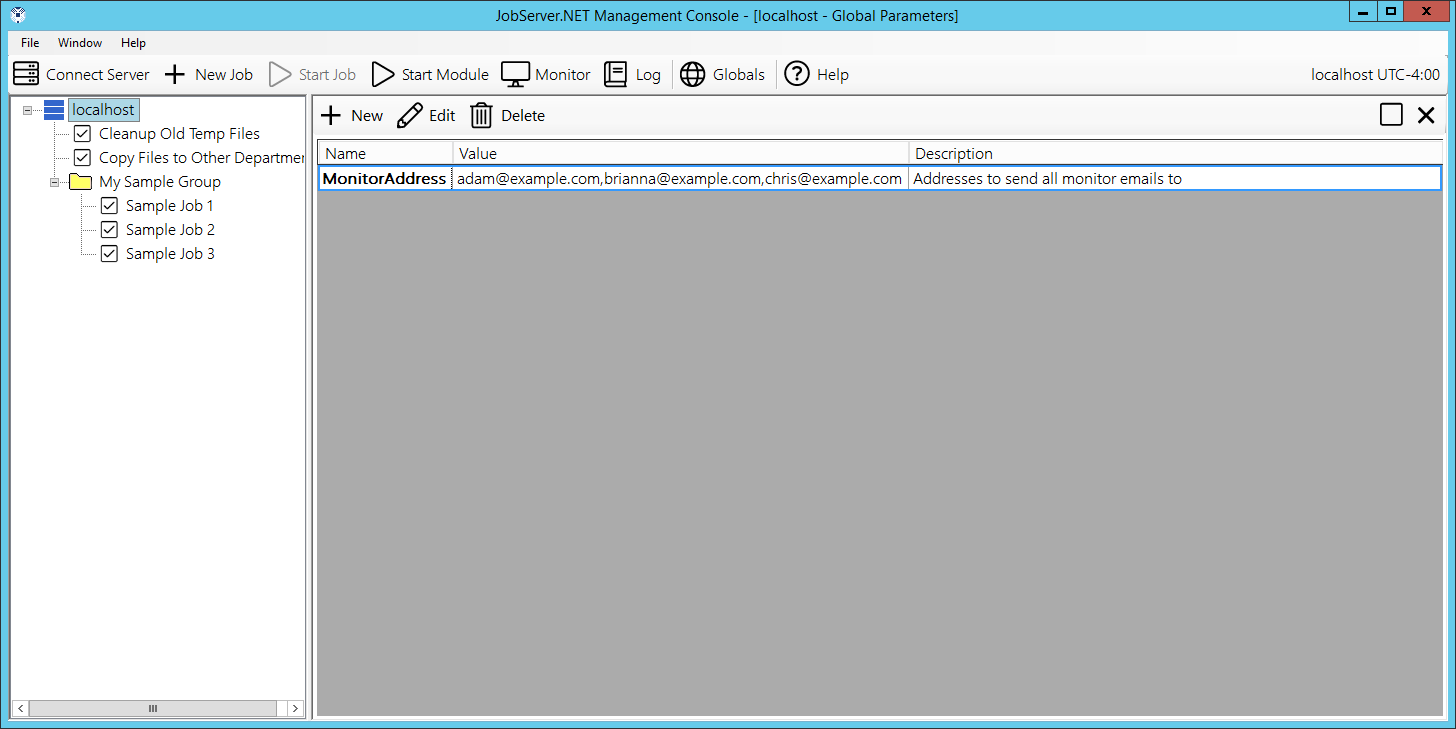

The global variables feature is managed by clicking the Globals button on the main toolbar of the manager application. The first time you open it, the list will be empty. To try this feature out, click the Add button at the top of the globals window. You will be prompted to create a new global variable. The first step in defining a global variable is to give it a unique name. Enter a name such as MonitorAddress. In the value field, enter one or more email addresses you have access to. If you enter more than one email address, separate each address with a comma. Finally, we recommend always adding a short description of what the variable will be used for. In our example, we might put the text “All of the email addresses we want to send monitor messages to”. Click the OK button to save this, and you should now see it in the list of defined global variables.

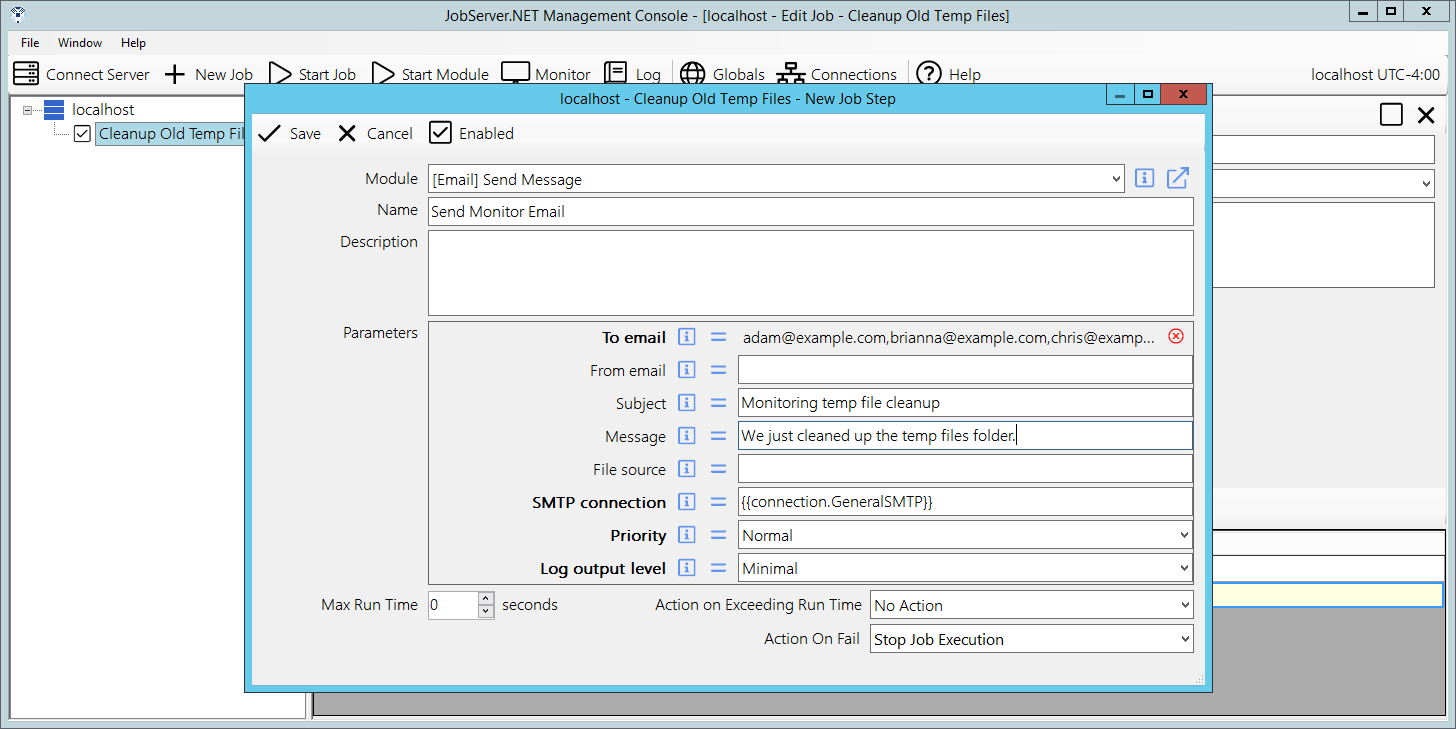

Now we can try out our globally defined value. Previously we created an example job called “Cleanup old temp files”. Locate that job and open its job definition. Then add a new step to the job by clicking the New Step button on the toolbar above the steps grid. For the module, pick [Email] Send Message and set the name to “Send monitor email”. In the list of parameters, notice the two small buttons between each parameter name and the control where you change the parameter's value. The first is the information button; clicking it shows a more detailed description of what the parameter is for. The second is the assignment button; clicking it opens the window for selecting a global variable assignment.

First, set the SMTP Connection parameter to the connection you defined in the previous section. If you followed the steps, it should be named GeneralSMTP. Then go to the To Email parameter, which is the first listed entry for the send email module. Click the assignment button, and you will see the list of global variables you have created so far. There will be some other items after your global variables, but we will skip over those for now. Find the global variable you named MonitorAddress and double-click it to select and assign it. The appearance of the value for this parameter changes a bit, and it now shows the value you entered into the global variable. Next, set the Subject parameter to “Monitoring temp file cleanup”. Set the Message parameter to “We just cleaned up the temp files folder.”. You can leave the remaining parameters blank; click the Save button on the step editor.

If you have defined all your settings correctly, try running the Cleanup old temp files job manually. When it finishes, it should send you the email message defined in the step you just created.

Job Monitor

The monitor lets you interactively watch job activity on the server. It displays jobs that are currently running and continues to display recently completed jobs for a few minutes after they finish, so they remain easy to review. To open the job monitor, click the Monitor button on the main toolbar. The monitor window opens and shows any jobs that are actively running or recently completed. A new installation will not show much yet, so we will try an exercise to see how the monitor can be used.

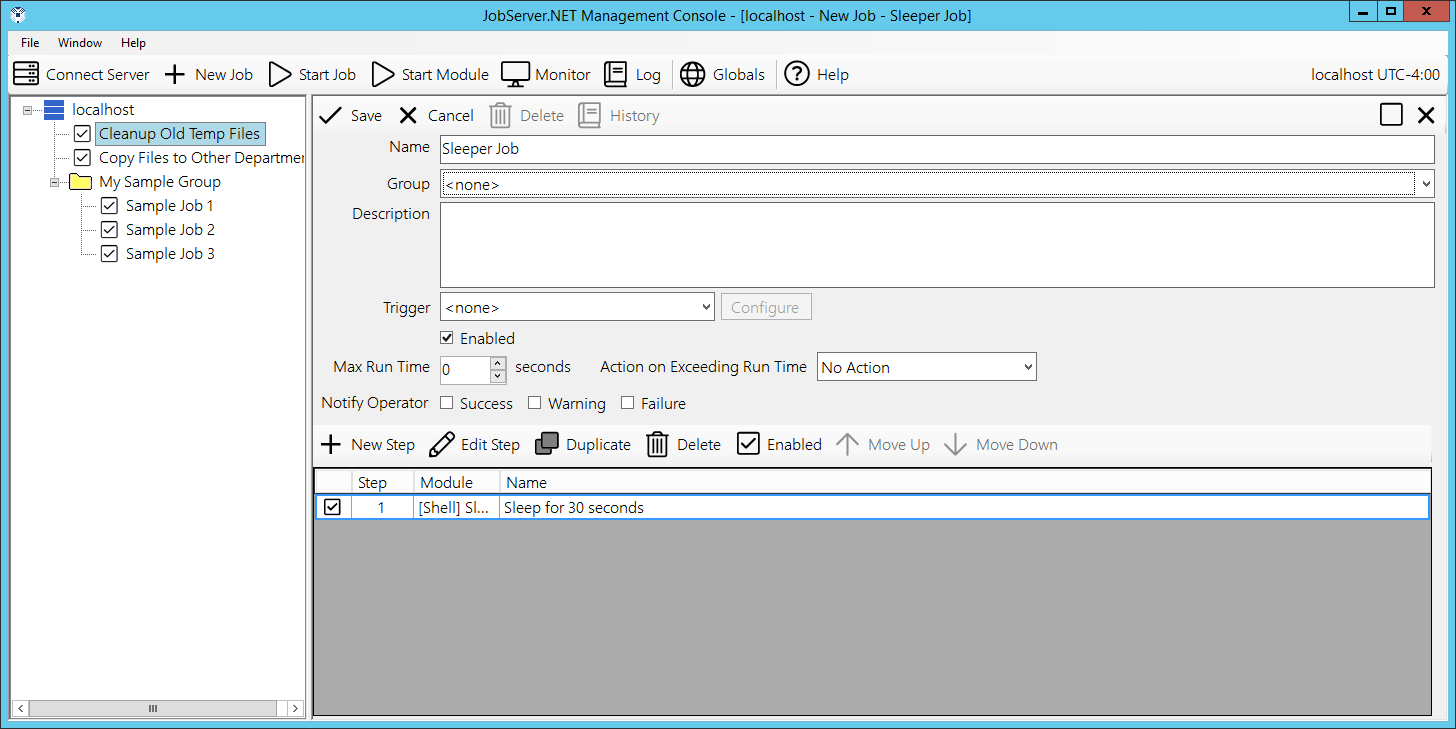

Click the New Job button on the main toolbar and give the new job the name “Sleeper Job”. Jump down to the steps grid and click the New Step button just above the grid. First select the [Shell] Sleep module, then update the name to “Sleep for 30 seconds”, and finally, in the parameters list, set the Sleep Seconds parameter to a value of 30. When ready, click Save to complete this step and return to the job definition. You may have noticed that we did not create a trigger this time, and it is set to None. This is fine, as we do not want this job to run automatically; we are only ever going to run this job definition manually. Finish this job definition by clicking the Save button, and you should be back at the monitor in the manager application.

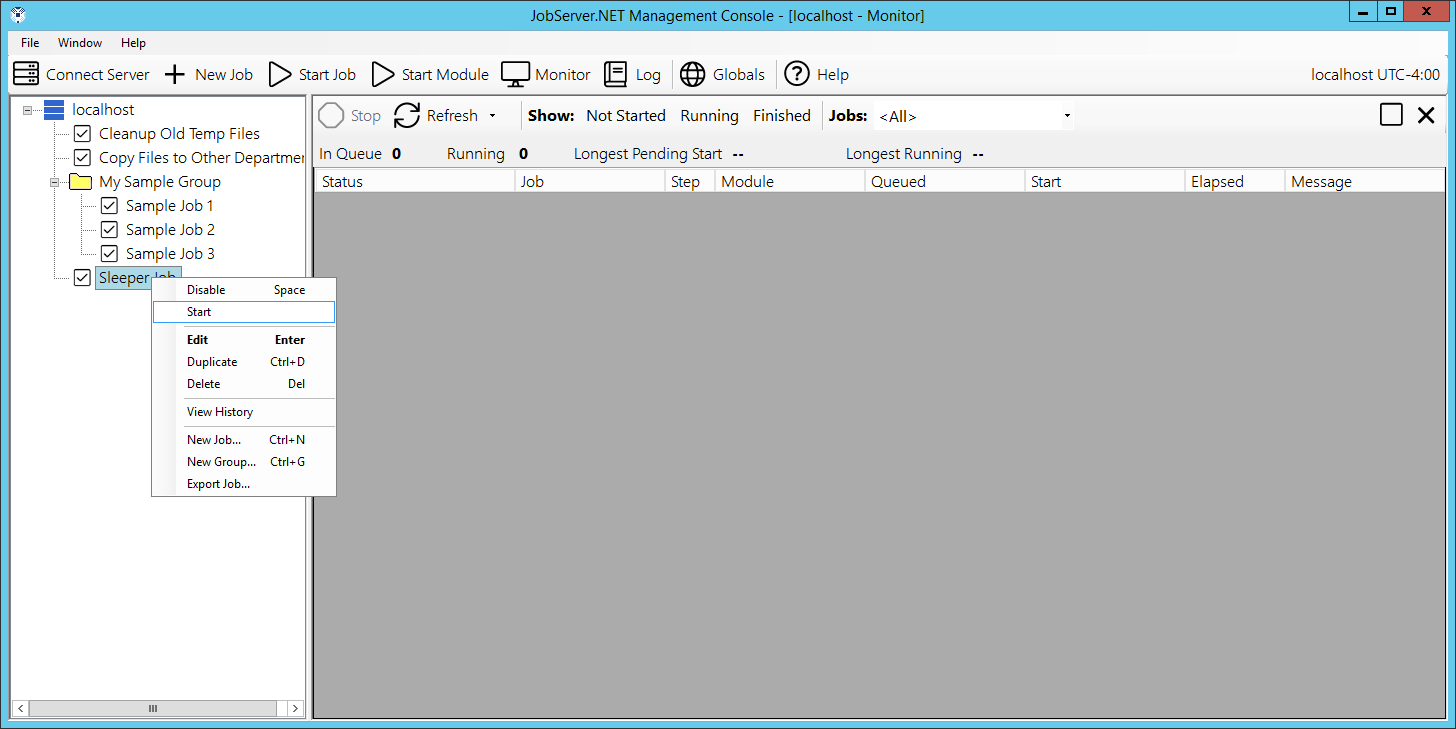

With the monitor window still open, find the “Sleeper Job” you just created in the navigation panel and right-click on it to open the pop-up context menu. Select the Start option, and you should see the job appear in the monitor window with a Running status.

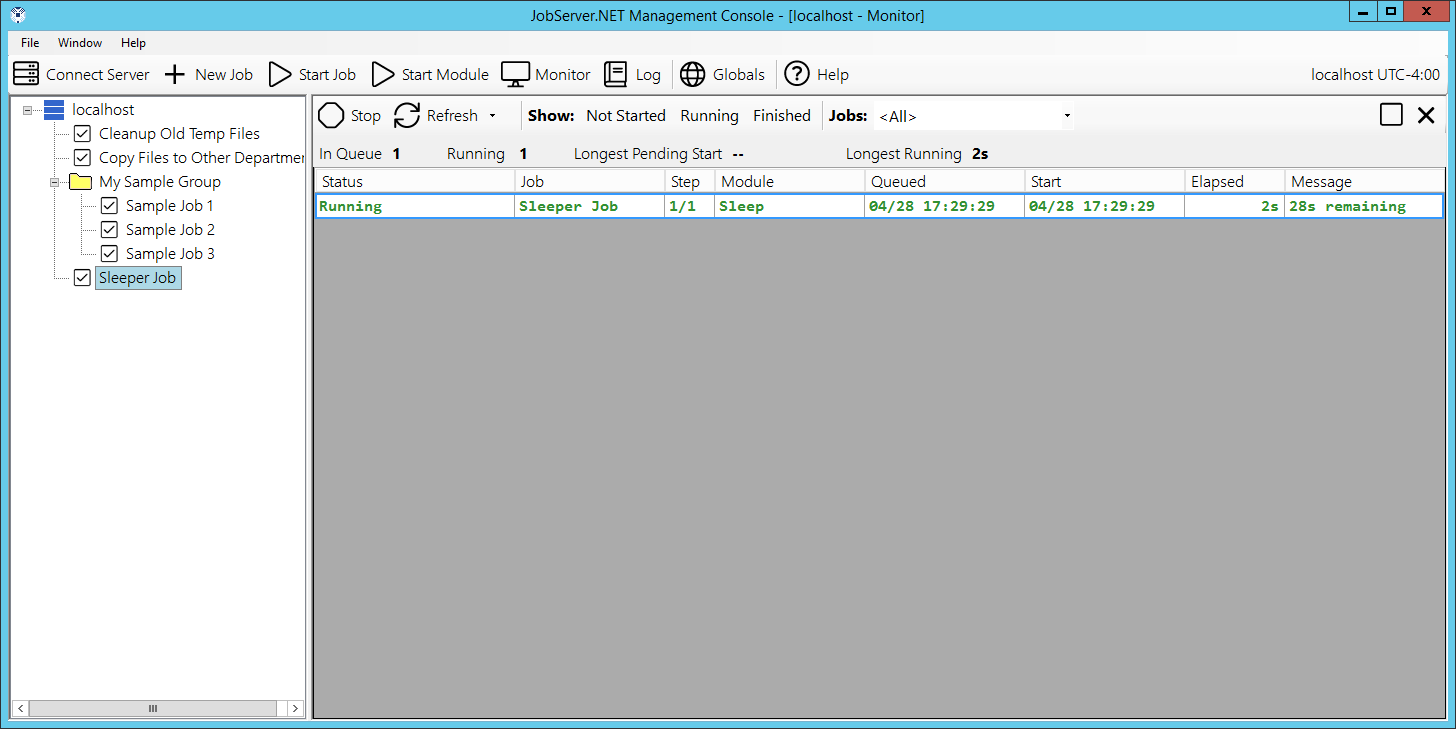

While this job is running, note that the other fields in the monitor grid show the steps and progress of the job. If the job is progressing through multiple steps, you can see which step the job is currently working on. Since this is a simple one-step job, we will focus on the Message column, which gives real-time feedback on what is happening within the specific step, or module, that is currently executing. Modules are generally built to provide useful feedback on long-running processes through this status message, but this varies between different types of modules. With the sleep module used in this example, you can see it counting down the time remaining.

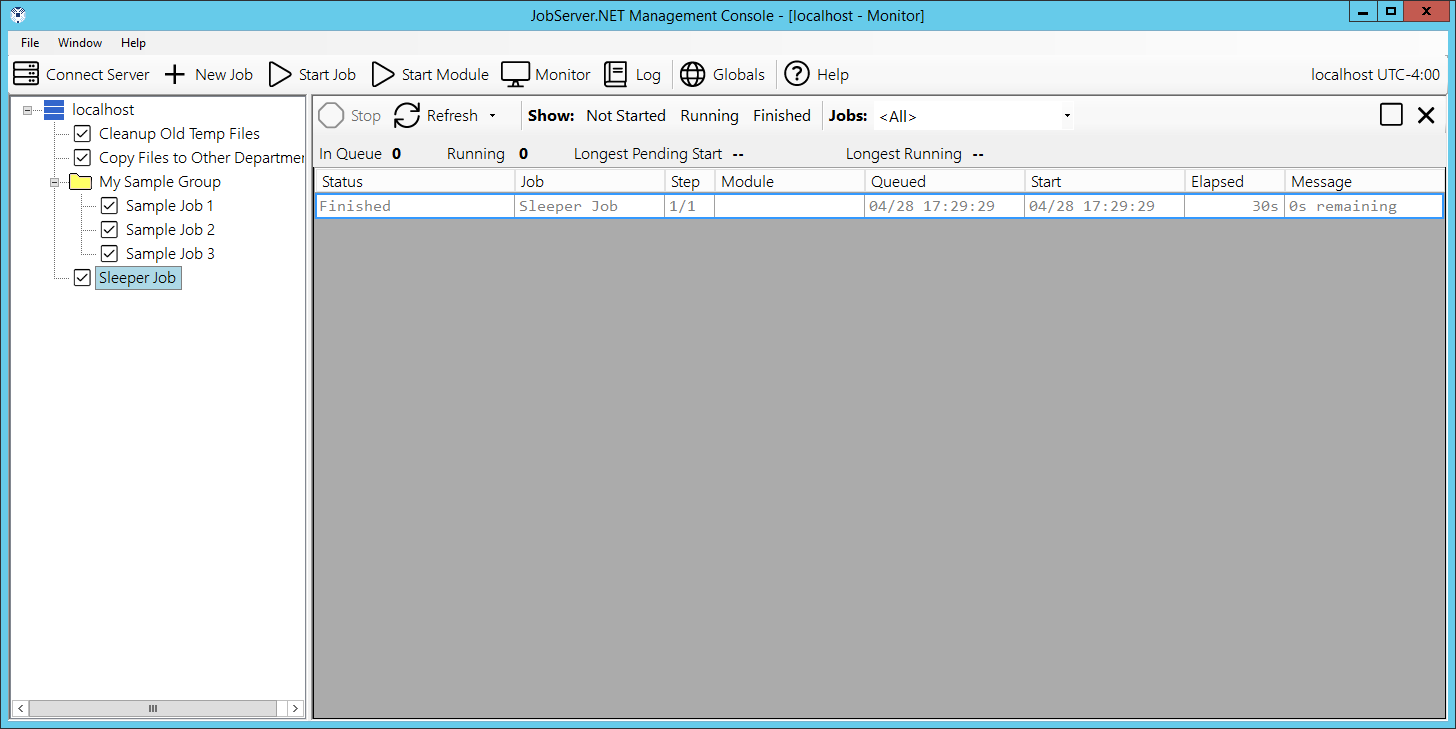

If the example Sleeper Job is still running, allow it to finish. When the job completes, its status changes to Finished, and the job remains temporarily displayed in the monitor. This lets you continue viewing the status of recently completed jobs even when your job server has many small jobs starting and completing rapidly. After about ten minutes, finished jobs automatically drop off the monitor view.

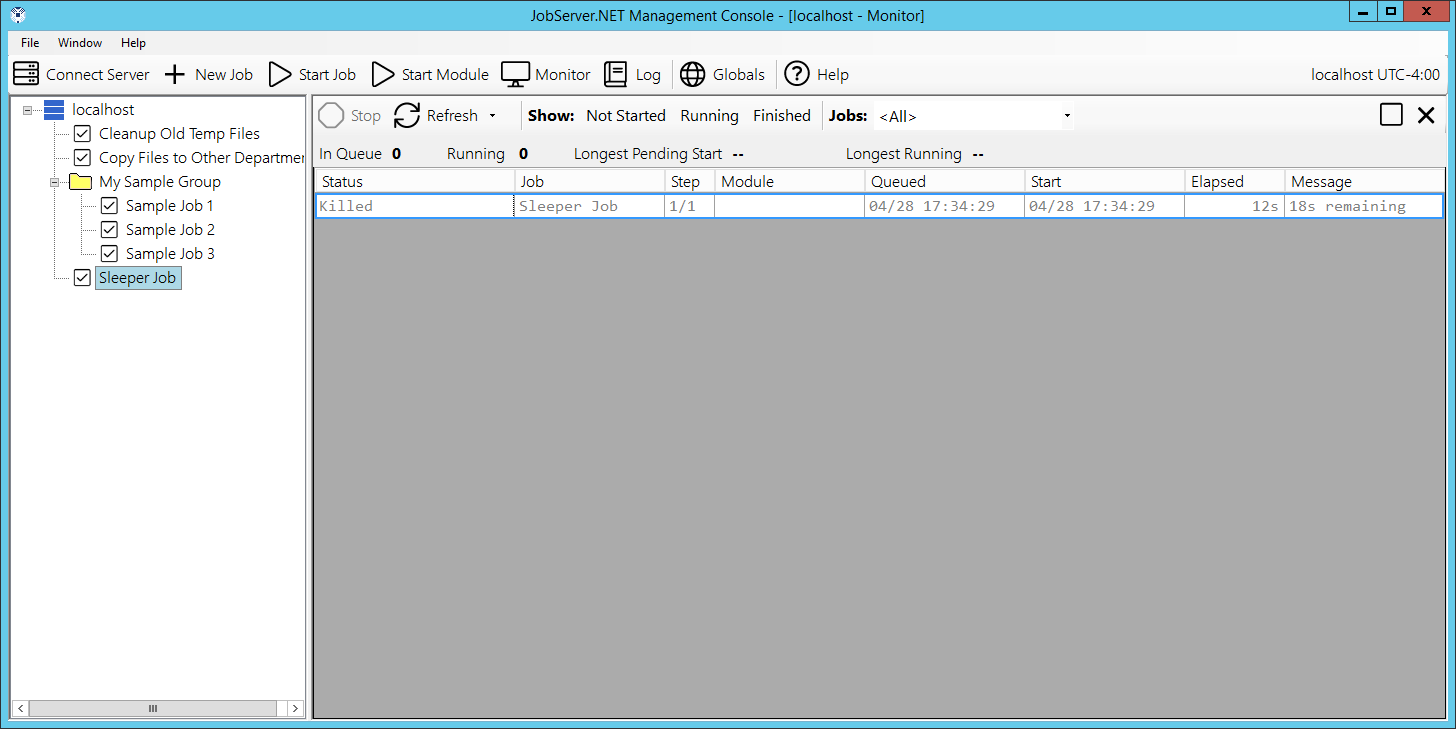

Next, start “Sleeper Job” again, and while it is still running, select it in the job monitor window and click the Stop button on the monitor toolbar. You will be asked to confirm that you want to stop the job. Go ahead and stop it; the job will be stopped almost immediately, and its status changes to Killed. You can use the stop feature to attempt to kill any running job. Be aware that the server politely requests that the job stop, and depending on the step being executed, the job should comply and shut down as cleanly as it can. Some modules might not behave as well when asked to stop while processing, and a job that has been killed may leave incomplete data someplace. Thus, be careful when using this feature, and when implementing custom modules, be certain to respond to stop requests and leave any data in a known state.
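The advice on responding to stop requests follows a common cooperative-cancellation pattern: check for a stop signal regularly and exit at a safe point. The Python sketch below mimics the countdown of the sleep example, using a `threading.Event` as the stop signal. It does not reflect the JobServer.NET module API; `sleep_step` and its use of the Finished and Killed status strings are illustrative only.

```python
import threading
from typing import Callable


def sleep_step(seconds: int, stop_requested: threading.Event,
               report: Callable[[str], None] = print) -> str:
    """Count down like a sleep step, honoring a stop request between ticks."""
    for remaining in range(seconds, 0, -1):
        if stop_requested.is_set():
            # A real module would leave its data in a known state here.
            return "Killed"
        report(f"{remaining} seconds remaining")
        # wait() returns early as soon as a stop is requested.
        stop_requested.wait(1)
    # A stop requested during the final wait still counts as a stop.
    return "Killed" if stop_requested.is_set() else "Finished"
```

Calling `stop.set()` from another thread (for example, with `threading.Timer(2.5, stop.set).start()`) makes the step return "Killed" almost immediately, because `wait()` wakes up as soon as the event is set.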

An important consideration when using the job monitor is that it affects the timeout behavior of the manager application. For security purposes, the manager application normally times out and disconnects from the JobServer after a prescribed period of inactivity. If the monitor is left open, this behavior changes, because the monitor keeps receiving updates from the JobServer on its current activity. Therefore, while the monitor is open, the manager application will not time out for that server. Please keep this in mind and do not leave the monitor open on an unattended machine.

Job History and Log

The job server keeps a historical log of all activity from each running job, which we can look at from a few different points of view. The job history is a view of the log data that shows each time a specific job was started. Using the example from the previous section, locate “Sleeper Job”, right-click on it, and select View History from the pop-up menu. You will see a concise set of information taken from the log, showing every time it has recorded the job being started. It provides some basic overall statistics for the job, such as the total elapsed time, the completion status, and the outcome.

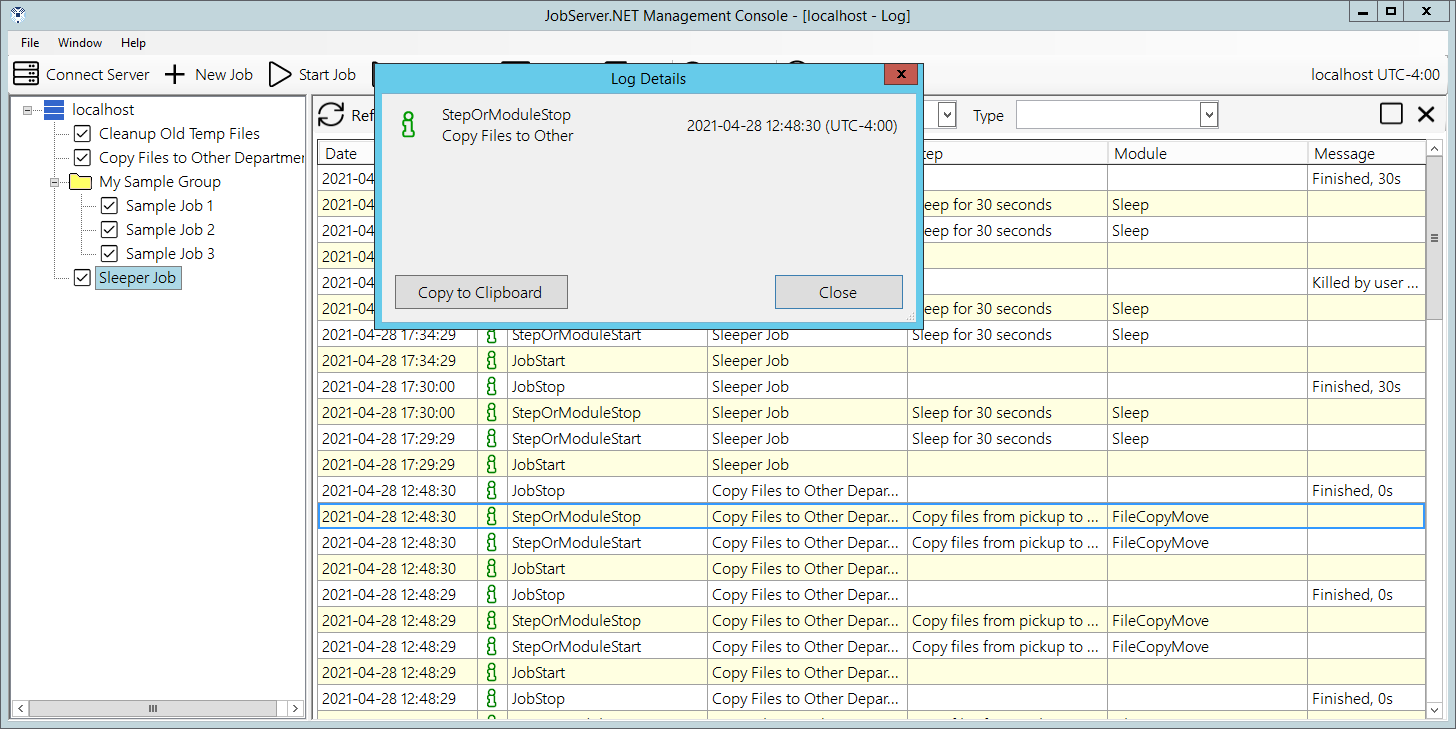

To delve into more precise data, double-click any one of the run entries in the history grid to see the full log detail for just that individual run of the job. In the log detail, you will see step-by-step entries of what happened during the job from start to finish. Double-clicking any individual log entry opens the log detail record, which shows the full text of entries whose message, or set of messages, is too long to display in the grid. It also provides a way to copy the log entry information to the clipboard if needed.

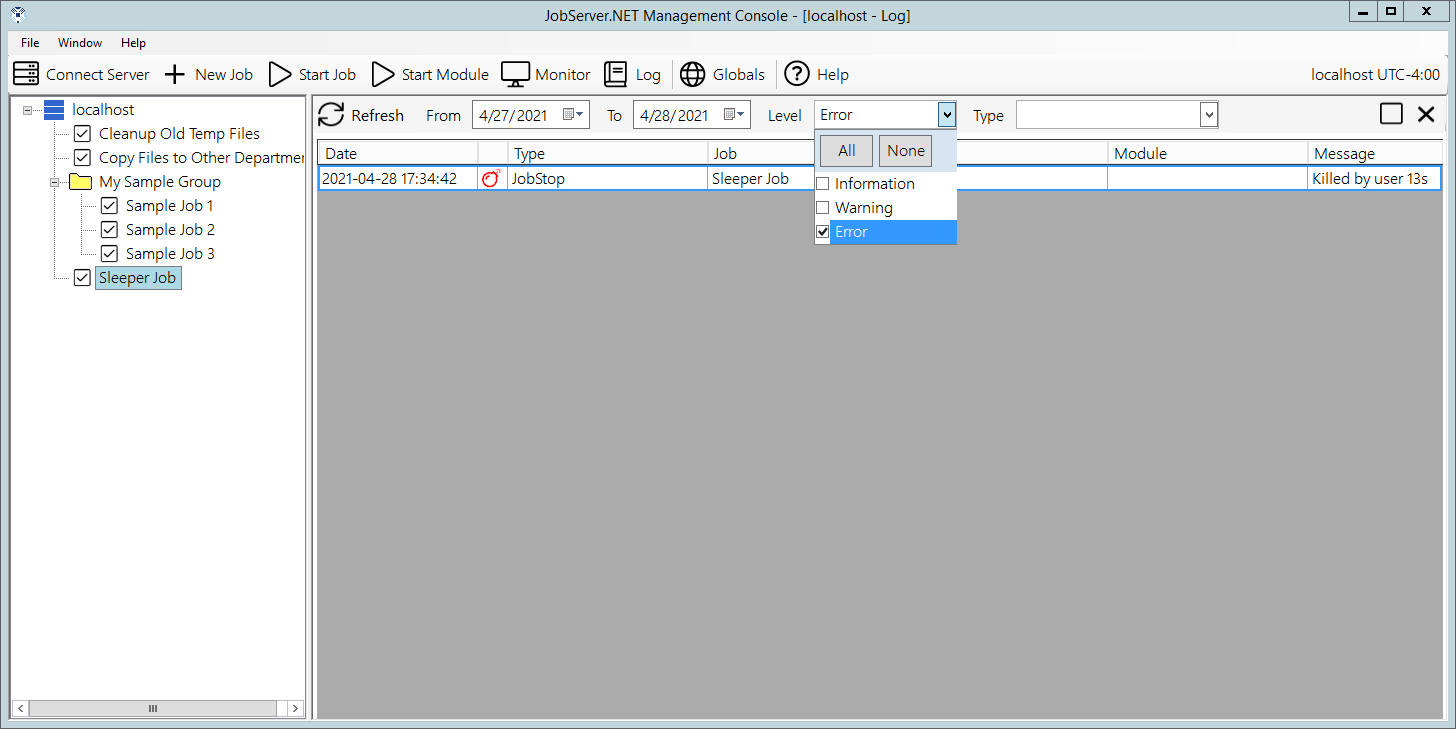

This may be the most useful way to look at the activity that occurred during a known run of a job definition. However, you can also view and search the entire raw log for the JobServer. To open the whole server log, click the Log button on the main toolbar. This opens the full log viewer, which you can scroll through or filter using the various options to locate specific events that might be occurring across multiple jobs. For example, you might click the dropdown for the Level filter at the top of the log window and select (check) the Error option. You should then see a list of all log entries, for all runs of every job on the server, that recorded an error event. By reviewing each one, you can assess whether the recorded errors are due to normal environmental issues or whether some intervention is needed to correct an issue somewhere.

The log system in JobServer.NET is self-managing and you do not need to take any specific actions to maintain it. It automatically keeps itself pruned to a reasonable size by purging data older than 90 days (about 3 months) or over one hundred thousand entries.
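The two retention limits can be sketched as follows. This is a minimal Python illustration, not the JobServer.NET implementation: the entry format (a timestamp and a message) and the `prune_log` name are hypothetical, and it assumes the oldest entries are the ones dropped when the count limit is exceeded.

```python
from datetime import datetime, timedelta

MAX_AGE = timedelta(days=90)   # about 3 months
MAX_ENTRIES = 100_000


def prune_log(entries: list[tuple[datetime, str]], now: datetime,
              max_age: timedelta = MAX_AGE,
              max_entries: int = MAX_ENTRIES) -> list[tuple[datetime, str]]:
    """Keep entries newer than max_age, then at most max_entries of the newest."""
    cutoff = now - max_age
    # Drop anything older than the age limit, then order oldest to newest.
    kept = sorted((e for e in entries if e[0] >= cutoff), key=lambda e: e[0])
    if len(kept) > max_entries:
        # Assumption: the oldest surviving entries are removed first.
        kept = kept[len(kept) - max_entries:]
    return kept
```

Any statistics computed from the result, such as a job's average run time, only see the entries that survive pruning, which is why the job definition's run-time statistics reflect only the log data on hand.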

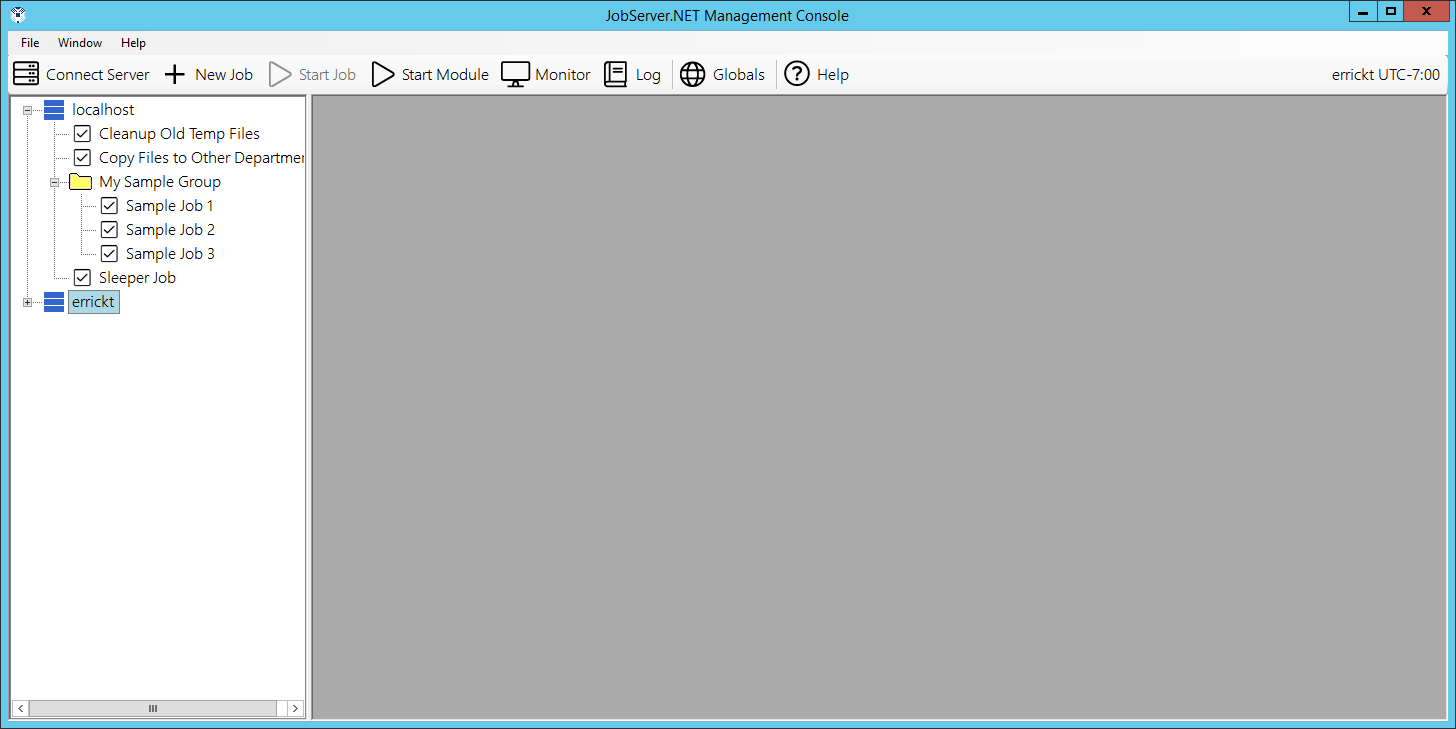

Managing Multiple Job Servers

The manager application can manage multiple servers at the same time. If you have an additional installation of the server running on another machine, connect to it by clicking the Connect Server button on the main toolbar. You can open as many server connections as needed. The only limitation is that each job server connection has its own inactivity timeout, so if you open a connection but stop using it in favor of another one, it will eventually time out and disconnect.

When you have multiple server connections open, most of the main toolbar buttons act on the server whose branch in the navigation panel contains the currently selected node. As you click on folders or jobs under a specific server, that server remains the target of these actions. For example, when you click the Log button on the main toolbar, you will see the main server log for the branch the current selection is located in.

Pre-Installed Triggers

This section details the trigger plugins which are included with JobServer.NET when it is initially installed. Additional triggers may become available as downloadable add-ons or added in future updates.

Scheduler Trigger

The scheduler trigger is used when a job needs to start at a particular date and time and may have a variety of conditions under which it should recur. This recurring pattern can be indefinite or limited to a certain window per period or in total. Because the JobServer.NET scheduling engine is so flexible, we will cover a variety of examples in this section. The first thing to note is that there are multiple types of recurrence, and the available options vary by type. You can see this in the fact that no options are visible when editing a new trigger until you specify a type of recurrence. So, we will review each type here.

More Than Once A Day

The More Than Once A Day recurrence type is used when a job needs to run multiple times during a day, either every day or only on certain days of the week. We will skip the first option, Skip Missed Execution Times, for now and come back to it a little later. All the options for this type are bounded by the Start Date and, if one is set, the Ending. The start date defaults to the current date for a new job definition but can be set in the future if a job should not begin until a later date. The default for the ending is the Never option, which means the job will run indefinitely. The other options let you specify a date through which the job will run, or limit the job to a specific number of iterations. Once an ending is reached, the job will no longer become active unless you modify the schedule to change the starting date to be in the future.
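The start and ending rules described above amount to a simple check. The Python sketch below is illustrative only: `schedule_is_active` is a hypothetical name, and treating the end date as inclusive follows the text's "run through" wording but is still an assumption about the exact boundary.

```python
from datetime import date
from typing import Optional


def schedule_is_active(today: date, start_date: date, runs_completed: int,
                       end_date: Optional[date] = None,
                       max_runs: Optional[int] = None) -> bool:
    """Apply the Start Date and Ending rules (Never, end date, or run count)."""
    if today < start_date:
        return False  # the schedule has not started yet
    if end_date is not None and today > end_date:
        return False  # the job has run through the specified end date
    if max_runs is not None and runs_completed >= max_runs:
        return False  # the requested number of iterations is complete
    return True  # an Ending of Never keeps the schedule active indefinitely
```

Leaving both `end_date` and `max_runs` as `None` corresponds to the default Never option.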

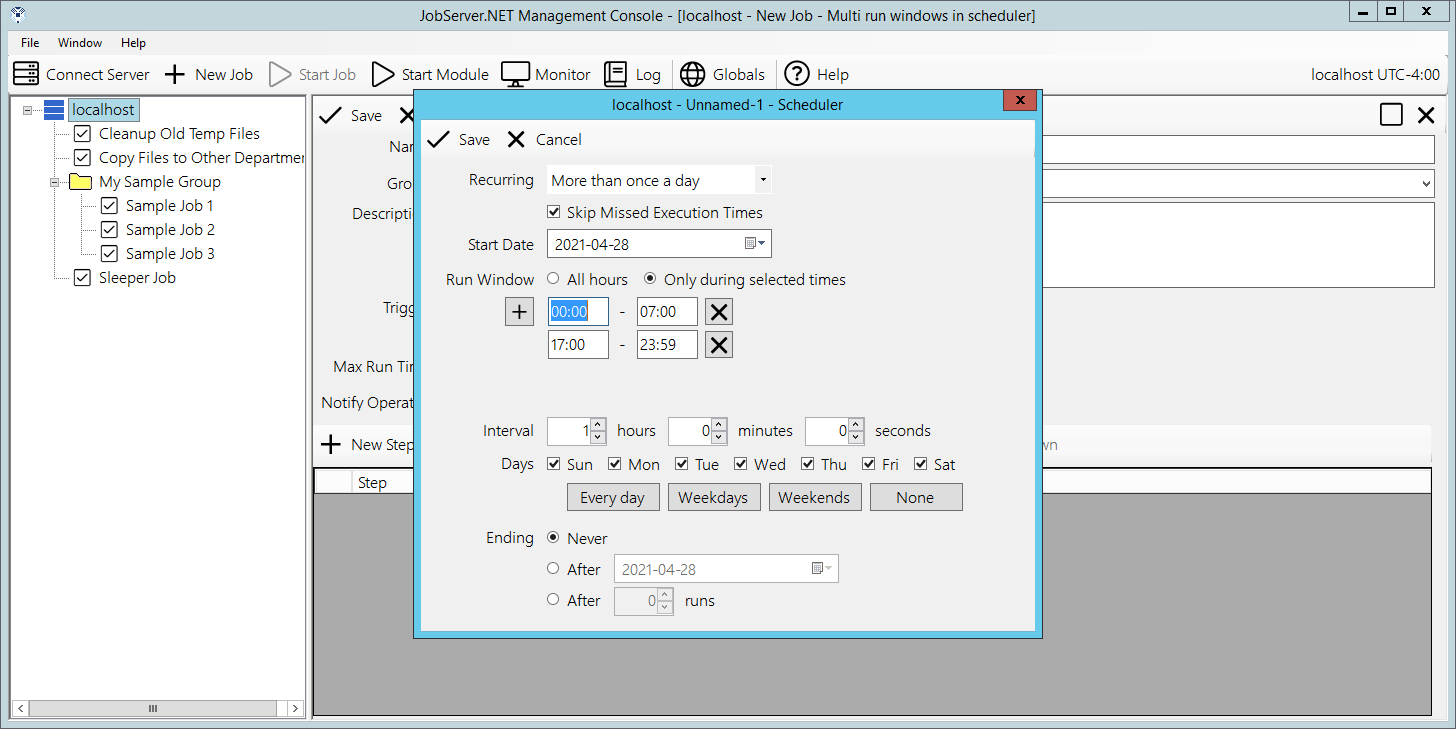

The default option for Run Window is All Hours; in other words, the run window is the entire day. If you want to constrain the job to run only within one or more time windows per day, select the Only during selected times option. You can then define one or more time windows per day during which the job can be active. An example would be a job that needs to run every hour except during normal office hours. In this case, you might define one window from 00:00 to 07:00 and another from 17:00 to 23:59. These windows define when the job can execute, excluding the period from 07:00 to 17:00 when people might be in your office. Then specify the Interval at which you want the schedule to repeat within the defined window(s). For example, to run hourly, set the Hours interval to 1.

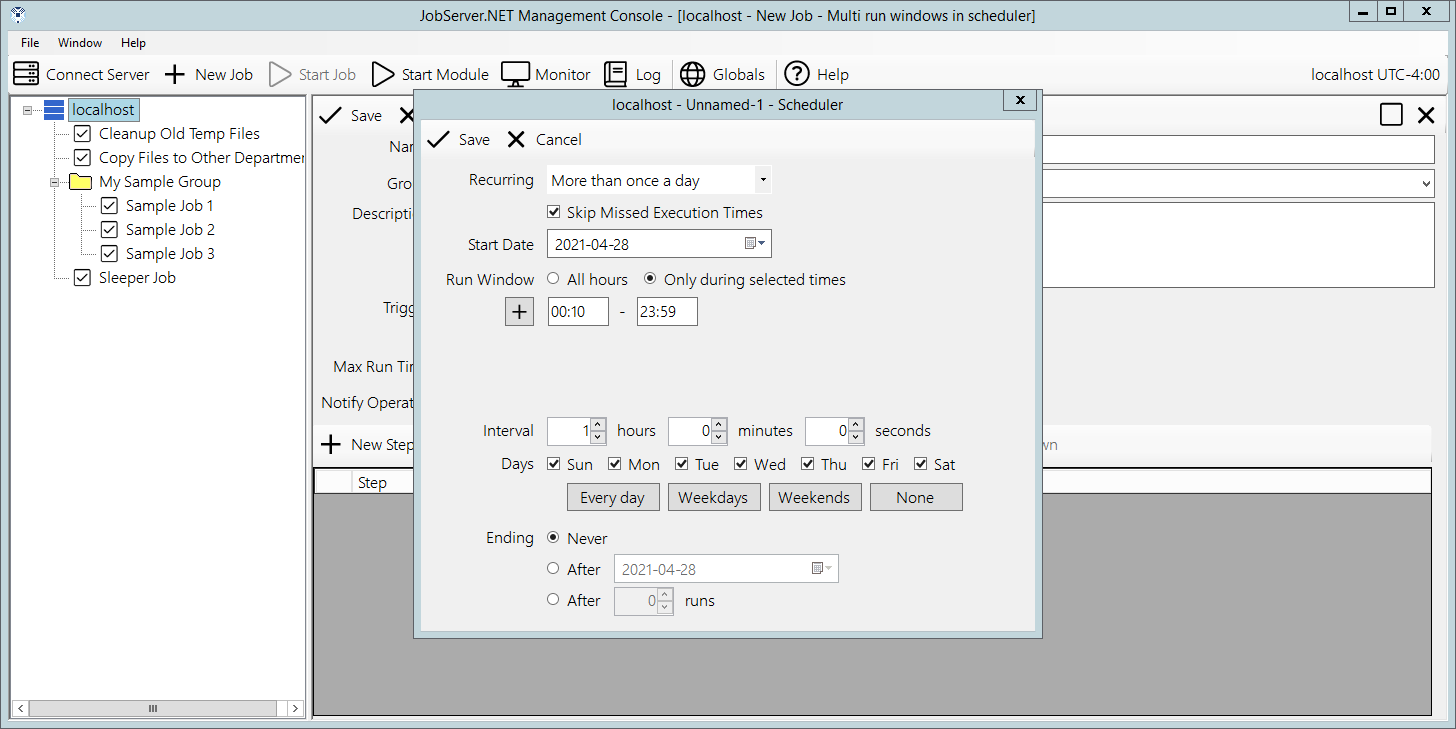

Another aspect to defining run windows is that the starting time for each window becomes the anchoring time of day that the next runtime is calculated from. For example, if you wanted a job definition to run every hour, but you only want it to execute at 10 minutes after the hour, then define the time window for your schedule to align to this. For a job that would run all day long at 10 minutes after the hour, just define a time window of 00:10 to 23:59. Then you will have a job that executes at 00:10, 01:10, 02:10, 03:10, and so on up to 23:10.

This concept of the anchoring time applies to the Interval whether or not it is set to whole hours. For example, if instead of running hourly you want the job to execute every 15 minutes within a time window of 07:10 to 23:59, the job will execute at 07:10, 07:25, 07:40, 07:55, 08:10, 08:25, and so on. When defining an interval, you can combine the Hours, Minutes, and Seconds options if needed. The JobServer.NET engine calculates the correct run times to fit within, and be anchored by, the start date and run window(s) you have defined.

The Days option defaults to allowing the job to execute every day of the week. If the job only needs to run on specific days of the week, uncheck all the other days to limit execution of the job to only the days you selected. You can select any combination of days, and there are shortcut buttons for quickly selecting Every Day, only Weekdays, or only Weekends, and for deselecting all days.
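The run-window, interval, and days rules above can be modeled with a short Python function that lists the run times for a single day. This is a sketch under simplifying assumptions, not the JobServer.NET scheduling engine: `run_times` and `EVERY_DAY` are hypothetical names, a window's end time is treated as inclusive, and time zones and daylight-saving changes are ignored.

```python
from collections.abc import Collection
from datetime import date, datetime, time, timedelta

EVERY_DAY = frozenset(range(7))  # date.weekday() numbering: Monday=0 ... Sunday=6


def run_times(day: date, windows: list[tuple[time, time]], interval: timedelta,
              days: Collection[int] = EVERY_DAY) -> list[datetime]:
    """List one day's run times; each window's start time anchors its runs."""
    if interval <= timedelta(0):
        raise ValueError("interval must be positive")
    if day.weekday() not in days:
        return []  # the Days option excludes this day entirely
    result = []
    for start, end in windows:
        t = datetime.combine(day, start)   # anchor: the window's start time
        stop = datetime.combine(day, end)
        while t <= stop:                   # assumption: the end time is inclusive
            result.append(t)
            t += interval
    return sorted(result)
```

For example, `run_times(date(2026, 3, 2), [(time(7, 10), time(23, 59))], timedelta(minutes=15))` starts with 07:10, 07:25, 07:40, 07:55, and 08:10, matching the anchoring example, and passing `days={0, 1, 2, 3, 4}` returns an empty list for a Saturday.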

All these combinations provide a very flexible way to schedule jobs that run multiple times on every day or on certain days. Now that you have seen how these options can be used together to create a schedule that suits your job, we will return to the option we skipped over at the start: Skip Missed Execution Times. This option is enabled by default, and for most jobs it is likely to be the preferred behavior. However, there may be occasions where you have a complex custom module, or you use the SQL Execute module to run a SQL database procedure that modifies the current state of something, and it depends on running exactly the number of times per day that your schedule defines. In cases like this, you can turn off the skip option. If JobServer.NET then detects that a job did not execute at a time it should have according to its schedule, it executes the job the required number of times to “catch up” to the most current run time.

Consider an example to see how this works. Assume you have defined a job that executes hourly, exactly on the hour, all day long, so it executes 24 times every day. This example job updates internal counters and other statistics that rely on the results of the previous run. Now say you must take your machine offline for some needed maintenance, and it winds up being powered off for a little over an hour, causing it to miss scheduled run times. If your machine went offline shortly before 14:00 and stayed offline through 15:00, the JobServer.NET scheduling engine can detect these two missed run times. If the skip option is turned off (unchecked), the job will be run two times to catch back up to the current schedule as soon as it is able to.
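The catch-up behavior can be modeled as finding every scheduled slot between the last completed run and the current time. This Python sketch illustrates the example above under stated assumptions and is not the engine's implementation: missed_runs is a hypothetical name, the schedule is treated as a fixed interval counted from an anchor time, and last_run is assumed to be the time of the last run that actually executed.

```python
from datetime import datetime, timedelta


def missed_runs(last_run, now, anchor, interval):
    """Return the scheduled slots after last_run, up to and including now.

    anchor   -- any scheduled run time; slots are anchor + k * interval
    last_run -- when the job last actually executed
    """
    # Index of the first slot strictly after the last run (floor division of timedeltas)
    k = (last_run - anchor) // interval + 1
    slot = anchor + k * interval
    missed = []
    while slot <= now:
        missed.append(slot)
        slot += interval
    return missed


anchor = datetime(2021, 3, 1, 0, 0)        # hourly, on the hour
last_run = datetime(2021, 3, 1, 13, 0)     # last run before maintenance
back_online = datetime(2021, 3, 1, 15, 5)  # service running again after 15:00

skip_missed_execution_times = False
# With the skip option checked, the missed slots are not replayed
catch_up = [] if skip_missed_execution_times else missed_runs(
    last_run, back_online, anchor, timedelta(hours=1))
print(len(catch_up))
```

In this model the 14:00 and 15:00 slots are the two catch-up runs described above.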

Once A Day

The once-a-day recurrence type is used when you have a job that needs to run only one time per day, either every day or every few days. As a result, the additional settings available under this option are much simpler. Skip Missed Execution Times is the first option, as it is with all the scheduler-based triggers. This option works the same way for all types of recurrence, as described in the previous section.

The Start Date and Start Time fields specify the starting date and time, as would be expected. The next field is the repeating interval, which appears as the Every X Days option. With this set to the default value of 1, the trigger fires every day at the specified time. If you set this option to a different value, keep in mind that the starting date and time serve as the anchoring point from which the next run is calculated.

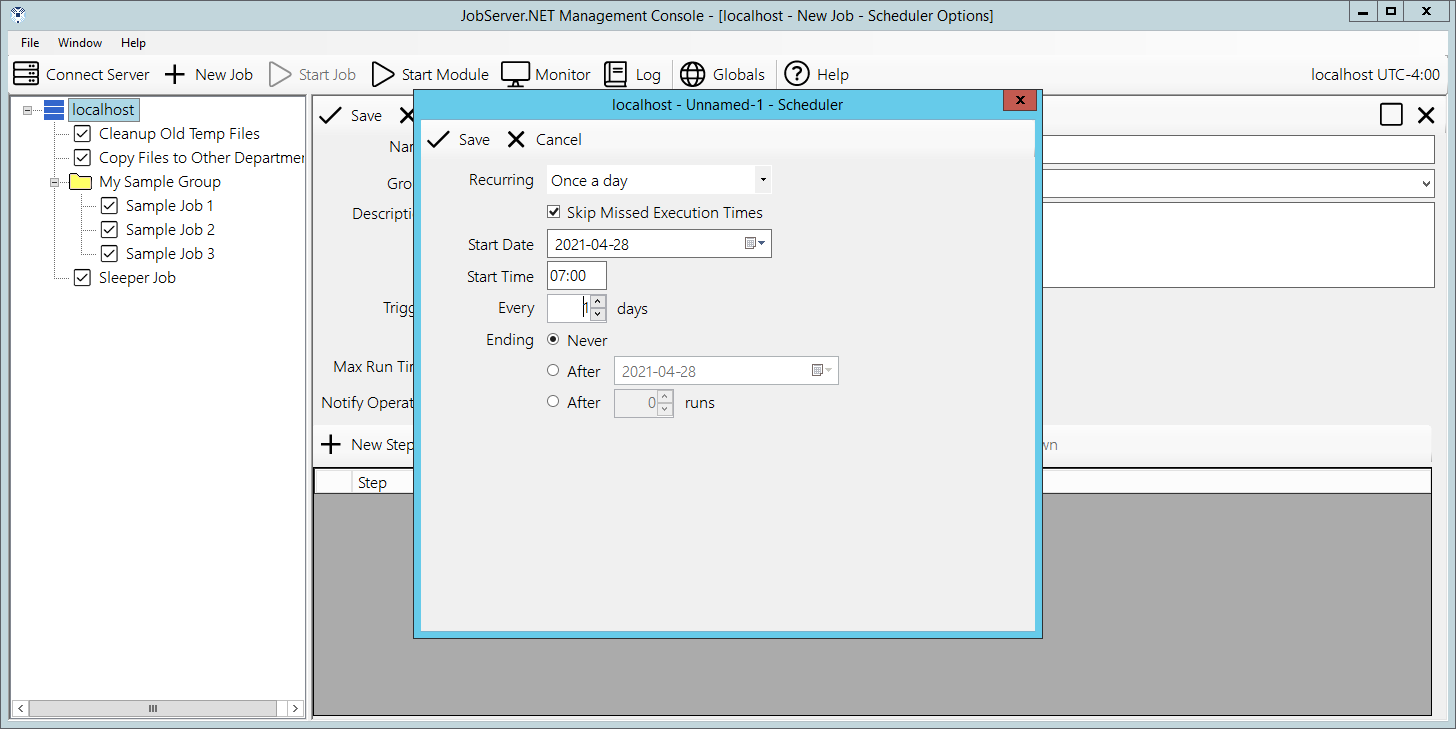

Finally, the Ending option is also present here, as described in the previous section; this is true of all the recurrence types. As an example of the once-a-day recurrence type, we might set the Start Time to 07:00 and keep all the other defaults. This gives us a job that runs at exactly 07:00 every day.

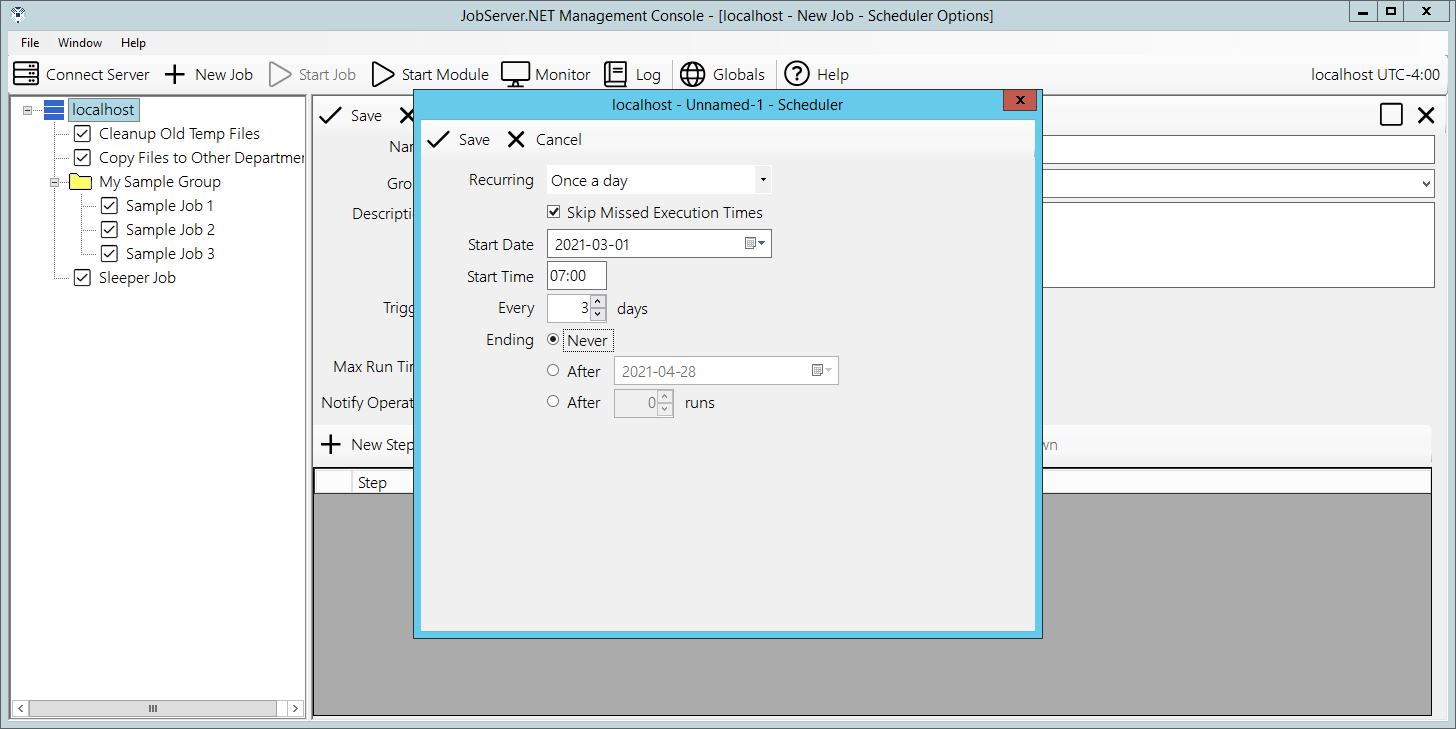

For another variation, change the Start Date to 2021-03-01 and change Every 1 Days to Every 3 Days. The job still executes at 07:00 on each day it runs, but because the starting date anchors the schedule, the days of the week vary. Counting weeks from Sunday through Saturday, the job runs 2 times in the first week (Monday March 1 and Thursday March 4), 3 times in the second week (Sunday March 7, Wednesday March 10, and Saturday March 13), and 2 times in the third week (Tuesday March 16 and Friday March 19). In the fourth week, the job again starts on a Monday, March 22. So, in this scenario, the days of the week that the job runs on depend on the anchoring Start Date and the number of days between runs.

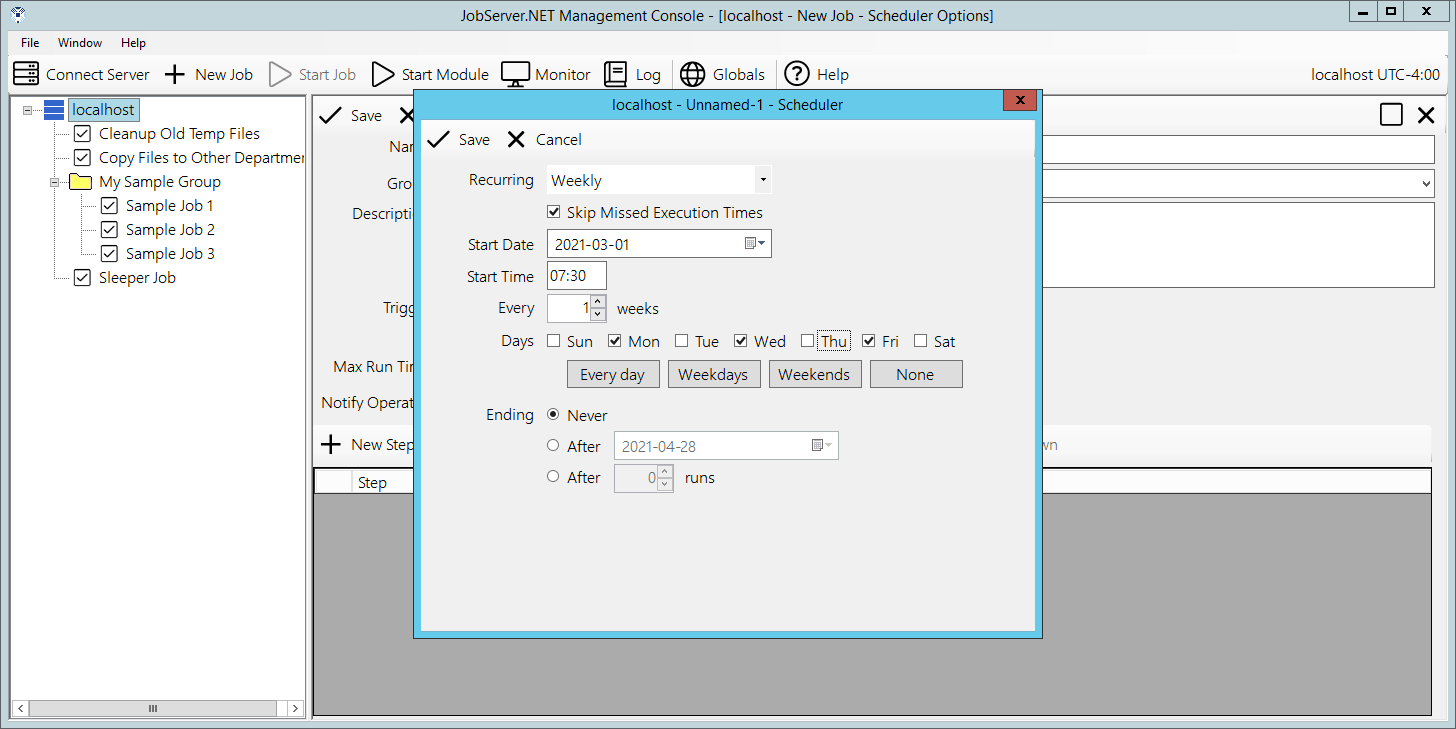
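The Every 3 Days example can be checked with a few lines of Python. This sketch simply adds multiples of the interval to the Start Date and groups the results into Sunday-through-Saturday weeks, reproducing the 2, 3, 2 pattern described above; every_n_days and week_start are hypothetical helper names, not part of JobServer.NET.

```python
from collections import Counter
from datetime import date, timedelta


def every_n_days(start, n, count):
    """First `count` run dates, anchored at the Start Date and spaced n days apart."""
    return [start + timedelta(days=n * i) for i in range(count)]


def week_start(d):
    """Sunday that begins the Sunday-to-Saturday week containing d."""
    return d - timedelta(days=(d.weekday() + 1) % 7)


runs = every_n_days(date(2021, 3, 1), 3, 8)
for d in runs:
    print(d.isoformat(), d.strftime("%A"))

# Runs per week; the last week is partial because only 8 runs were generated
per_week = Counter(week_start(d) for d in runs)
print([per_week[w] for w in sorted(per_week)])
```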

Weekly

The weekly recurrence type is used when you have a job that needs to run on one or more specific days of the week, either every week or on an Every X Weeks basis. As usual, we start with the Skip Missed Execution Times option that was outlined at the start of this section.

Next, we again see the Start Date and Start Time fields, which specify the starting date and time. This time the repeating interval option appears as Every X Weeks, and it is based on the number of weeks instead of days as in the once-a-day recurrence. The next field, Days of the Week, is where this recurrence type really excels. This option has a checkbox for every day of the week. They normally default to all being enabled, but you can toggle any of them off or on in any combination needed. Beneath them are buttons that quickly set the days of the week to commonly used values, or select or deselect all the days.

One example for using this would be to select only the weekdays and use that to trigger the creation of a daily report that goes out to all office workers. Or, select weekends and use that to trigger the creation of weekend promotion emails.

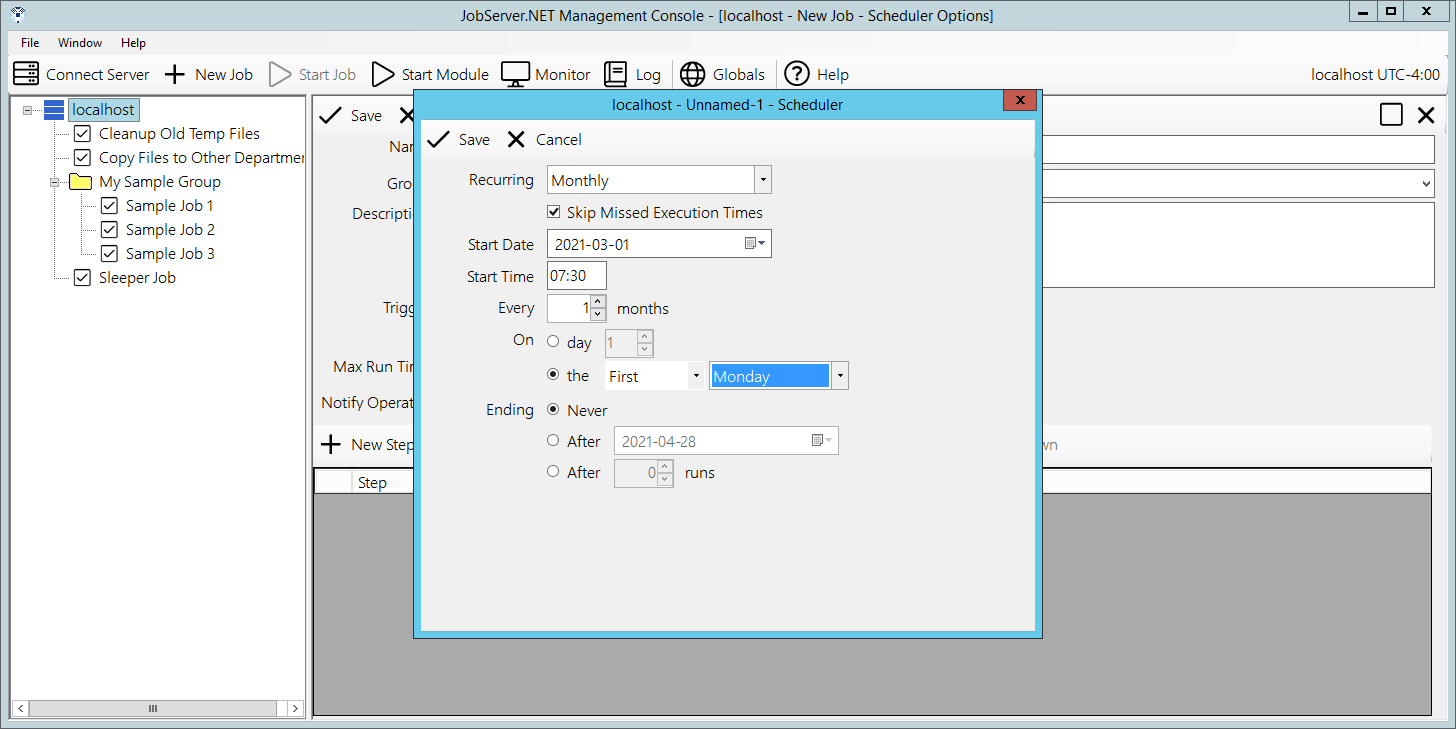
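As a rough illustration of how Every X Weeks and Days of the Week combine, the following Python sketch lists the dates a weekly schedule would select. It assumes the week cycle is counted in 7-day blocks starting from the Start Date; JobServer.NET may align weeks differently, so treat this as a model rather than a specification. The helper and constant names are hypothetical, and day numbers follow Python's date.weekday() (Monday is 0).

```python
from datetime import date, timedelta

WEEKDAYS = {0, 1, 2, 3, 4}  # Monday..Friday, as numbered by date.weekday()
WEEKENDS = {5, 6}           # Saturday and Sunday


def weekly_runs(start, every_weeks, days, until):
    """Dates from start through until that fall on a selected day in an active week.

    Assumption: weeks are counted in 7-day blocks beginning on the Start Date.
    """
    runs = []
    d = start
    while d <= until:
        week_index = (d - start).days // 7
        if week_index % every_weeks == 0 and d.weekday() in days:
            runs.append(d)
        d += timedelta(days=1)
    return runs


# Weekday report, every week, for two weeks starting Monday 2021-03-01
report_days = weekly_runs(date(2021, 3, 1), 1, WEEKDAYS, date(2021, 3, 14))

# Weekend promotion, every 2 weeks
promo_days = weekly_runs(date(2021, 3, 1), 2, WEEKENDS, date(2021, 3, 28))
print(promo_days)
```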

Monthly

The monthly recurrence type is used when you have a job that needs to run once during the month. The options here allow it to run on a specific day of the month, or on a relative day within the month. Again, we see the Skip Missed Execution Times option detailed at the top of this section. We also see the now-familiar Start Date and Start Time fields, as well as the repeating interval option, which now appears as the Every X Months option.

Now for the part that makes the monthly recurrence type distinct: the On field and its options. The first option is the day of the month, and it is exactly what it sounds like. When you want the job to run on a specific day of the month, enter that day in this option. For example, a value of 5 gives a job that runs on the 5th day of every month. The second option lets you select a relative day within the month, such as the First Weekday of the month, the Fourth Thursday of the month, the Last Weekend of the month, or various other combinations. Finally, we again see the Ending field options.

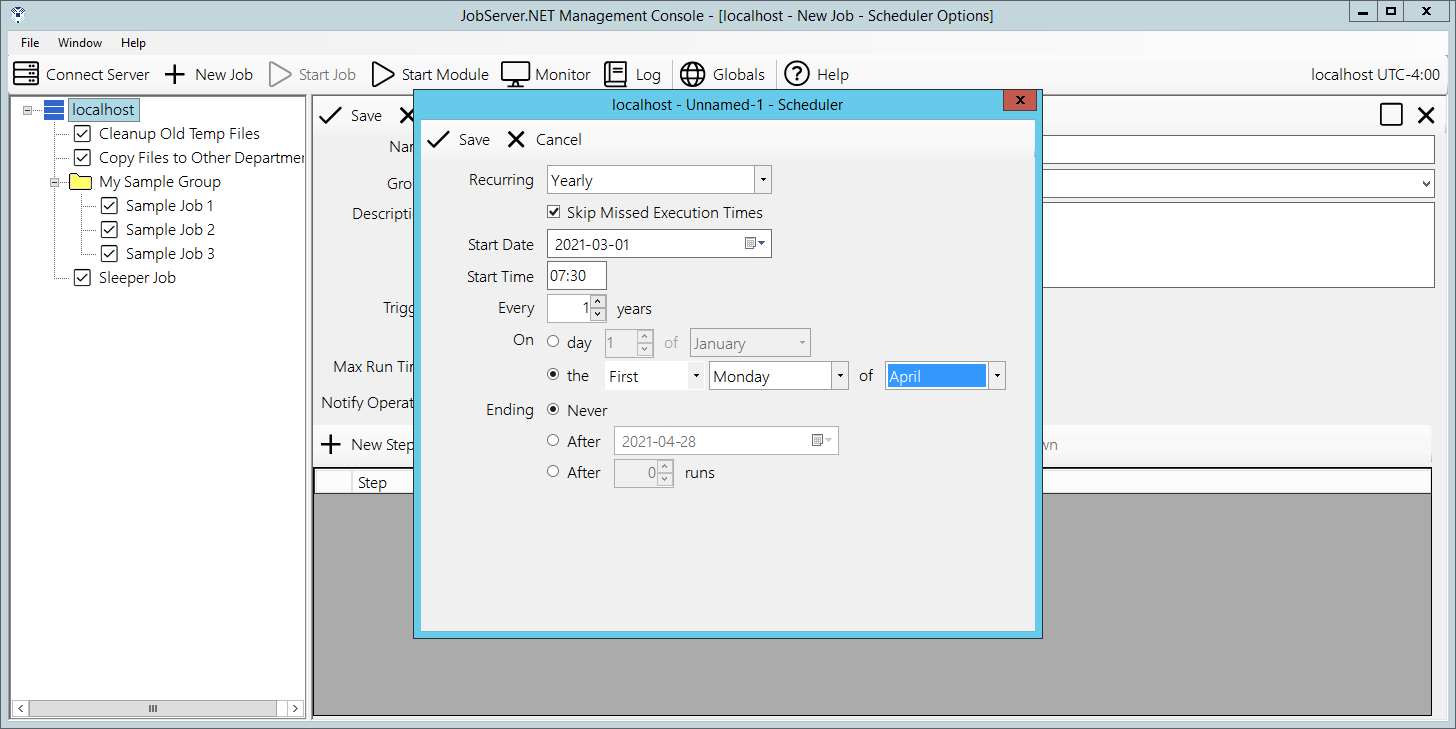
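Relative days such as the Fourth Thursday of the month can be computed with Python's standard calendar module. The following sketch, with hypothetical helper names, shows one way to resolve such relative day options; it is not taken from JobServer.NET, and it interprets First Weekday as the first Monday-to-Friday day of the month.

```python
import calendar
from datetime import date, timedelta


def nth_weekday(year, month, weekday, n):
    """Date of the n-th `weekday` in the month; n = -1 means the last one.

    weekday uses Python's numbering: Monday = 0 ... Sunday = 6.
    """
    days_in_month = calendar.monthrange(year, month)[1]
    candidates = [day for day in range(1, days_in_month + 1)
                  if date(year, month, day).weekday() == weekday]
    return date(year, month, candidates[n - 1] if n > 0 else candidates[n])


def first_weekday(year, month):
    """First Monday-to-Friday day of the month."""
    d = date(year, month, 1)
    while d.weekday() >= 5:  # skip Saturday (5) and Sunday (6)
        d += timedelta(days=1)
    return d


print(nth_weekday(2021, 11, calendar.THURSDAY, 4))  # Fourth Thursday
print(nth_weekday(2021, 3, calendar.FRIDAY, -1))    # Last Friday
print(first_weekday(2021, 5))                       # First Weekday
```

The same helper also covers the relative day in the yearly type: nth_weekday(2021, 6, calendar.FRIDAY, 2) resolves the Second Friday Of June for 2021.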

Yearly

We now reach the last of the recurrence types, the yearly option. It should look familiar, as its options are almost identical to those of the monthly type. The only difference is that the relative day option is mapped to days within the year rather than within the month. To look at just that relative day option, change the current option from On Day to On The if it is not already set. You can then try the different relative date options and see that combinations such as the Second Friday Of June can be selected. Using either a specific date or a relative day in the year, you can schedule jobs that align to certain business processes, such as running a Quarterly Report or exporting a Year End Inventory Positions file.

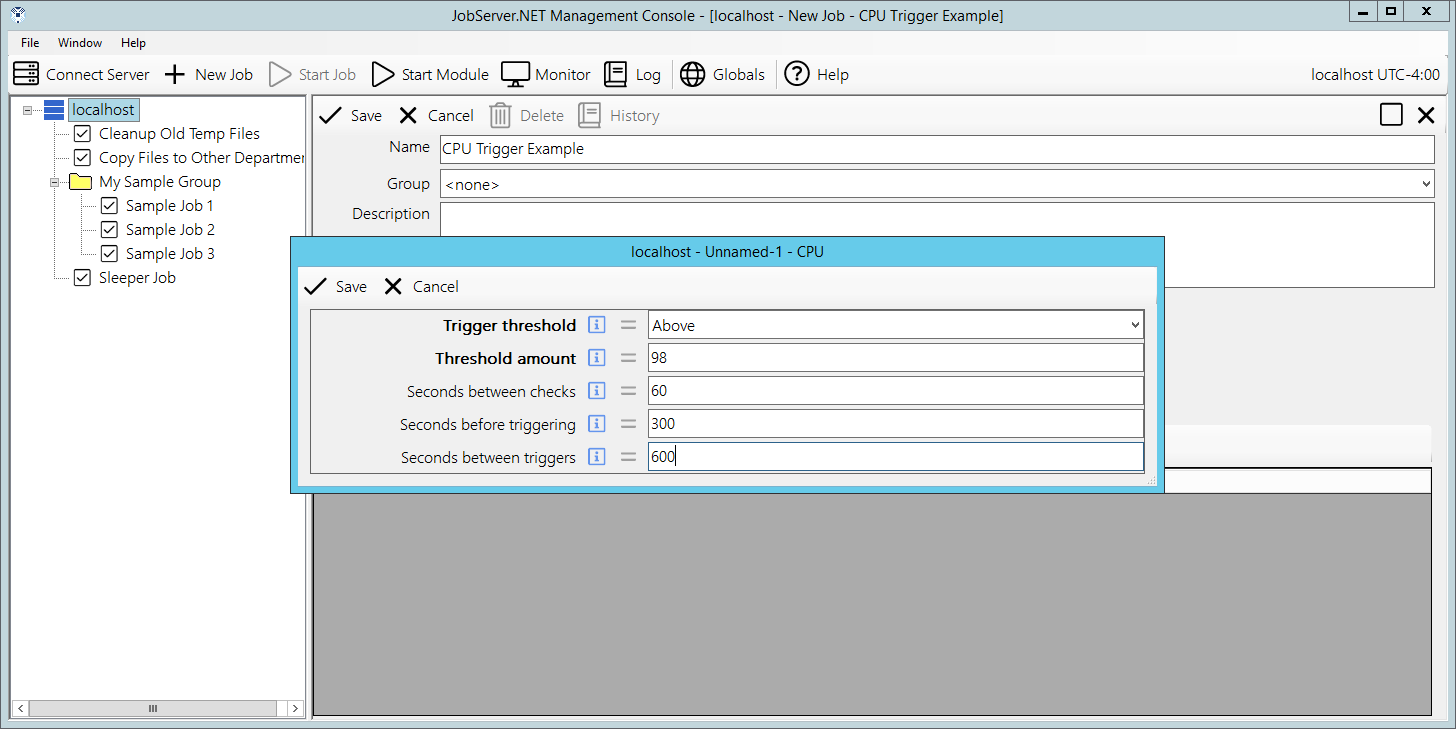

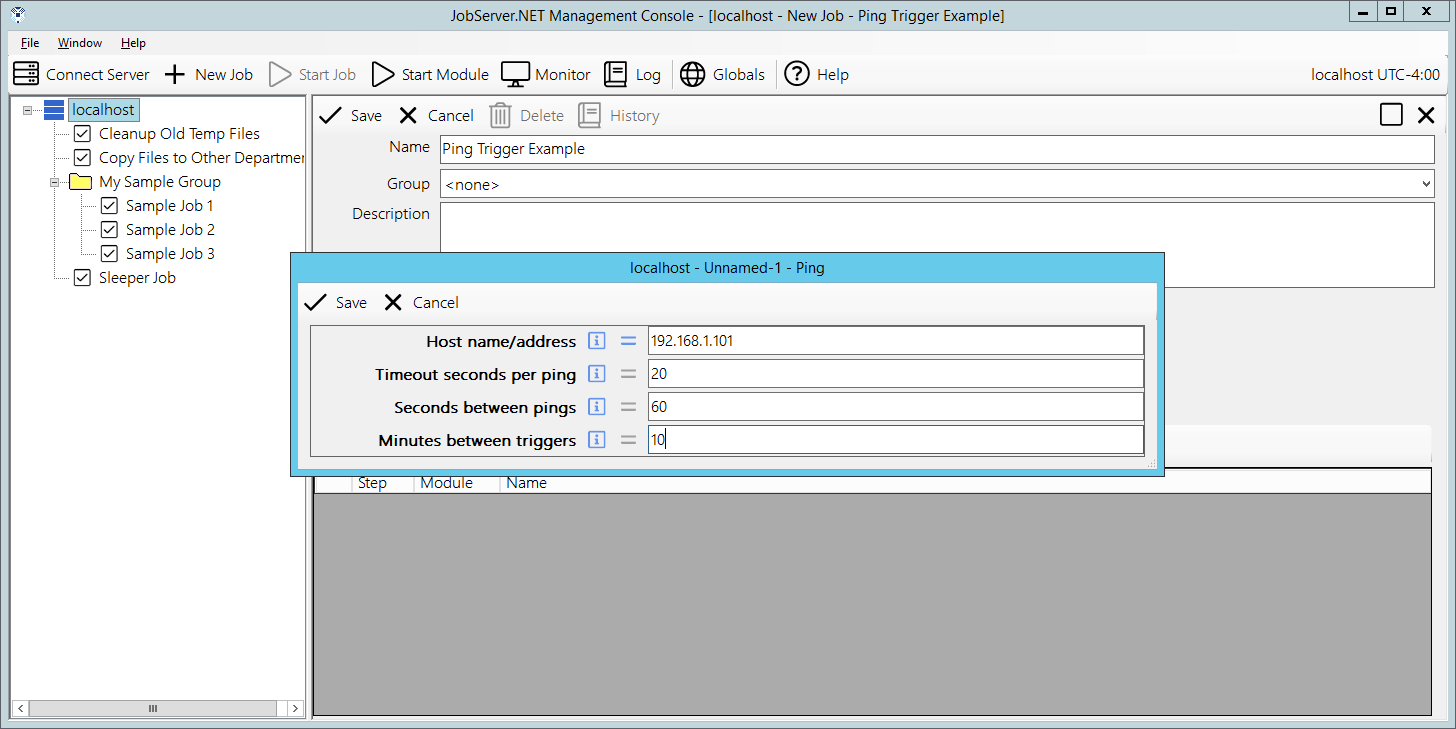

CPU Trigger

The CPU trigger can be used when a specific job needs to be executed when the aggregated processor activity on the machine has stayed above or below a determined threshold. This trigger is configured with a few options, the first being the Trigger Threshold, which can be set to either Above or Below. If we choose Above, the machine must maintain processor utilization above the Threshold Amount; if we choose Below, the machine must maintain processor utilization below it. The Threshold Amount is a percentage of aggregated processor utilization.

The Seconds Between Checks option controls the overall granularity of how quickly the trigger can respond to changes in processor utilization. The minimum, and default, value for this setting is 5 seconds. The Seconds Before Triggering option controls how long the aggregated processor activity must stay above or below the threshold before the trigger responds. The appropriate value varies significantly with your intended usage. For example, if your server regularly executes many long-running, processor-intensive jobs, these may cause the trigger to respond earlier than you intend. Thus, successful use of this trigger may require careful planning and tuning of these values.

Finally, the Seconds Between Triggers option controls how often the trigger can respond to the processor activity. Some jobs may need to execute every time your machine's utilization is above 95 percent; for example, you might have created a custom module that checks for one of your processes taking longer than it should, a condition that can be detected by high processor utilization. At other times you may need to limit how frequently the CPU trigger responds, which you can do by setting this option to an appropriately high value. For example, if you do not want the trigger to respond more than once per hour, set this value to 3600 seconds (1 hour).
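The interaction of the three timing options can be modeled with a small simulation over a series of utilization samples, one per check. This Python sketch is a hypothetical model of the behavior described above, not JobServer.NET's implementation; in particular, it assumes that Above and Below are strict comparisons, and that a trigger whose condition stays true may fire again once Seconds Between Triggers has elapsed.

```python
def cpu_trigger_fires(samples, check_secs, before_secs, between_secs,
                      threshold, above=True):
    """Return the elapsed seconds at which the trigger would fire.

    samples -- aggregated CPU utilisation percentages, one per check
    """
    fires = []
    streak_start = None  # when the condition most recently became true
    last_fire = None
    for i, pct in enumerate(samples):
        t = i * check_secs
        met = pct > threshold if above else pct < threshold
        if not met:
            streak_start = None  # condition broken: restart the sustain timer
            continue
        if streak_start is None:
            streak_start = t
        sustained = t - streak_start >= before_secs
        rested = last_fire is None or t - last_fire >= between_secs
        if sustained and rested:
            fires.append(t)
            last_fire = t
    return fires


# A spike long enough to fire once, then a short spike that is ignored
spiky = [50, 97, 98, 99, 99, 96, 40, 97, 98]
print(cpu_trigger_fires(spiky, 5, 15, 3600, 95))

# Sustained load: fires again only after Seconds Between Triggers has elapsed
busy = [99] * 30
print(cpu_trigger_fires(busy, 5, 15, 60, 95))
```

Raising before_secs filters out short bursts from other jobs, while between_secs caps how often a sustained condition can start the job.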

Available Disk Space Trigger

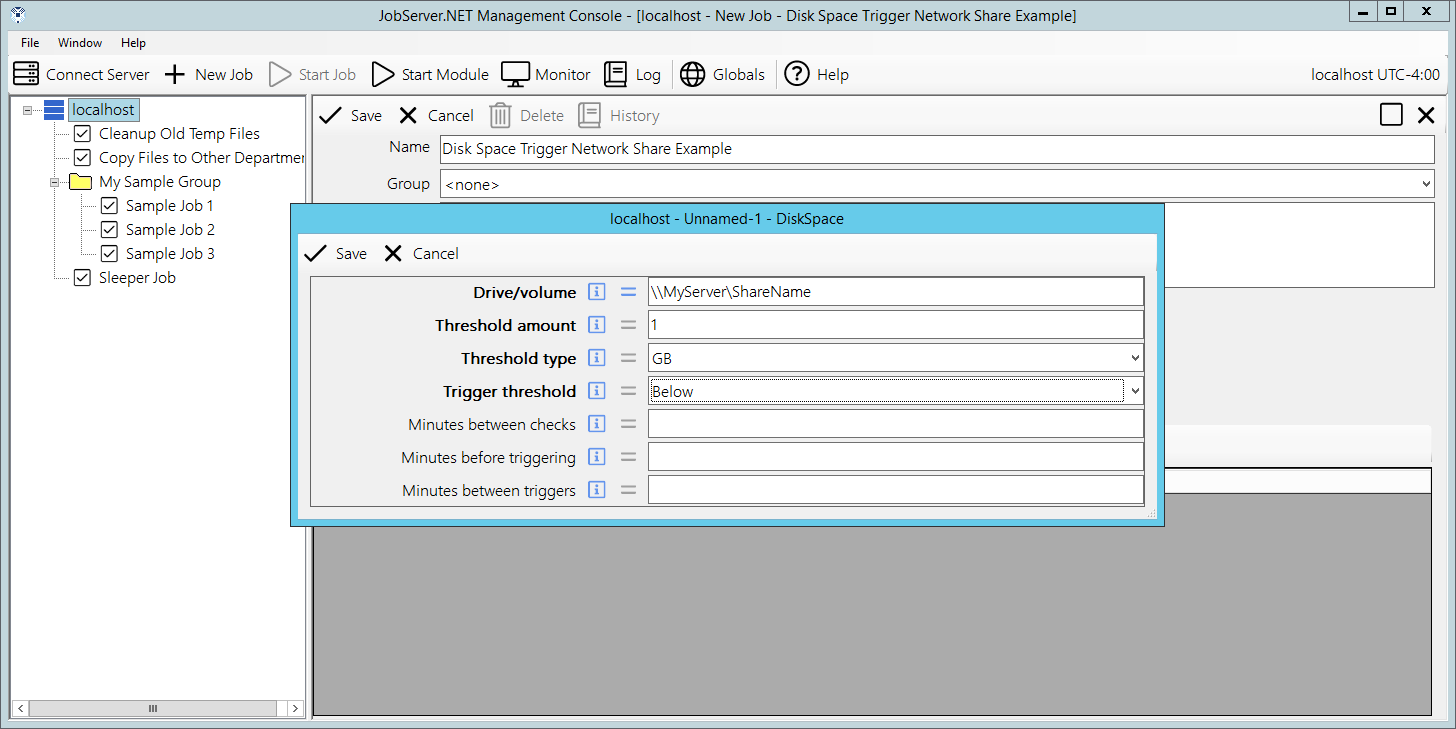

The disk-space trigger can be used when a specific job needs to be executed when the available disk space on a given drive or volume drops below or rises above a determined threshold. The Drive/Volume parameter field specifies a drive or volume name. For drives attached locally to the machine, provide a valid drive specification such as C:\ or any other valid local drive. Keep in mind that these refer to drives local to the machine running the JobServer.NET service. If you are running the JobServer.NET Manager application on a desktop machine connected to a remote service, you are specifying drives on the remote machine.

To specify a volume available on the network, use the UNC path convention, such as \\MyServer\ShareName. Be certain that JobServer.NET has the proper permissions; otherwise it will not be able to access the network volume. The next two parameter fields work in tandem to define the threshold. The first is the Threshold Amount, which should be a whole number. It is used with the Threshold Type field, which specifies whether the amount is a percentage of the size of the disk or volume or an absolute amount of space. If percentage is used for the type, the value should be from 1 to 100. Otherwise, the type defines the scale of the value, allowing you to specify the amount as a whole number of Kilobytes (KB), Megabytes (MB), Gigabytes (GB), Terabytes (TB), or Petabytes (PB).
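The way Threshold Amount and Threshold Type combine can be sketched in Python as follows. The function names and the "Percent" label are hypothetical, and the sketch assumes binary units (1 KB = 1024 bytes), which the text does not state. It uses the standard shutil.disk_usage call to read the free and total space for a path such as C:\.

```python
import shutil

# Assumption: binary multiples (1 KB = 1024 bytes); the product may use decimal units
UNIT_BYTES = {"KB": 1024, "MB": 1024 ** 2, "GB": 1024 ** 3,
              "TB": 1024 ** 4, "PB": 1024 ** 5}


def threshold_bytes(total_bytes, amount, threshold_type):
    """Convert a Threshold Amount and Threshold Type into a number of bytes."""
    if threshold_type == "Percent":
        if not 1 <= amount <= 100:
            raise ValueError("a percentage threshold must be between 1 and 100")
        return total_bytes * amount / 100
    return amount * UNIT_BYTES[threshold_type]


def threshold_crossed(free_bytes, total_bytes, amount, threshold_type, trigger="Below"):
    """True when free space is Below (or Above) the configured threshold."""
    limit = threshold_bytes(total_bytes, amount, threshold_type)
    return free_bytes < limit if trigger == "Below" else free_bytes > limit


def check_drive(path, amount, threshold_type, trigger="Below"):
    """Read current usage for a drive root such as C:\\ and test the threshold."""
    usage = shutil.disk_usage(path)
    return threshold_crossed(usage.free, usage.total, amount, threshold_type, trigger)
```

For example, check_drive("C:\\", 10, "GB") would report whether drive C: has less than 10 GB free at the moment of the check.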

The next parameter is the Trigger Threshold, which lets you choose between triggering when the amount of free space is Above or Below the specified threshold value. The Minutes Between Checks value controls how frequently the available space is monitored. The Minutes Before Triggering value controls how long the available disk space must remain past the threshold before the trigger starts the job. For example, suppose you have a disk-space trigger defined on a drive where processes create temporary work files and normally remove them once completed. If the available space crosses the threshold while such a process is running, and you do not want the trigger to start the job before the process cleans up its temporary files, increase Minutes Before Triggering to a value higher than the expected runtime of the process. Otherwise, the trigger may start the job merely because the temporary working files exist. Be aware of such situations; otherwise you may later see jobs that ran unintentionally, with no obvious reason why.

The last parameter is the Minutes Between Triggers field, which controls how often the trigger responds to crossings of the defined threshold. Again, if other processes create large temporary working files, this option can help you get the desired behavior from the disk-space trigger. The default behavior is the same as setting this to zero: the trigger fires on every threshold crossing. A larger value filters how frequently jobs run when the threshold is being reached on a regular basis.

File Watcher Trigger

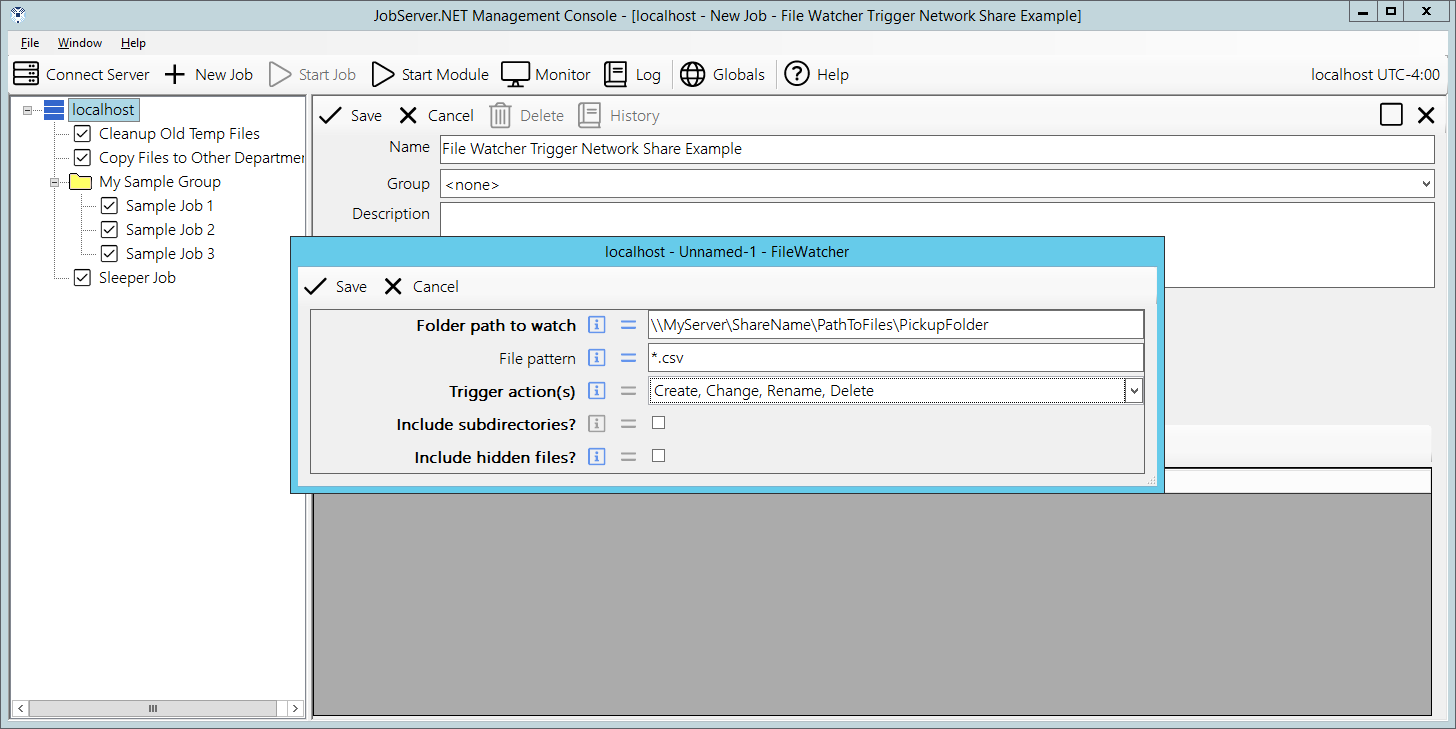

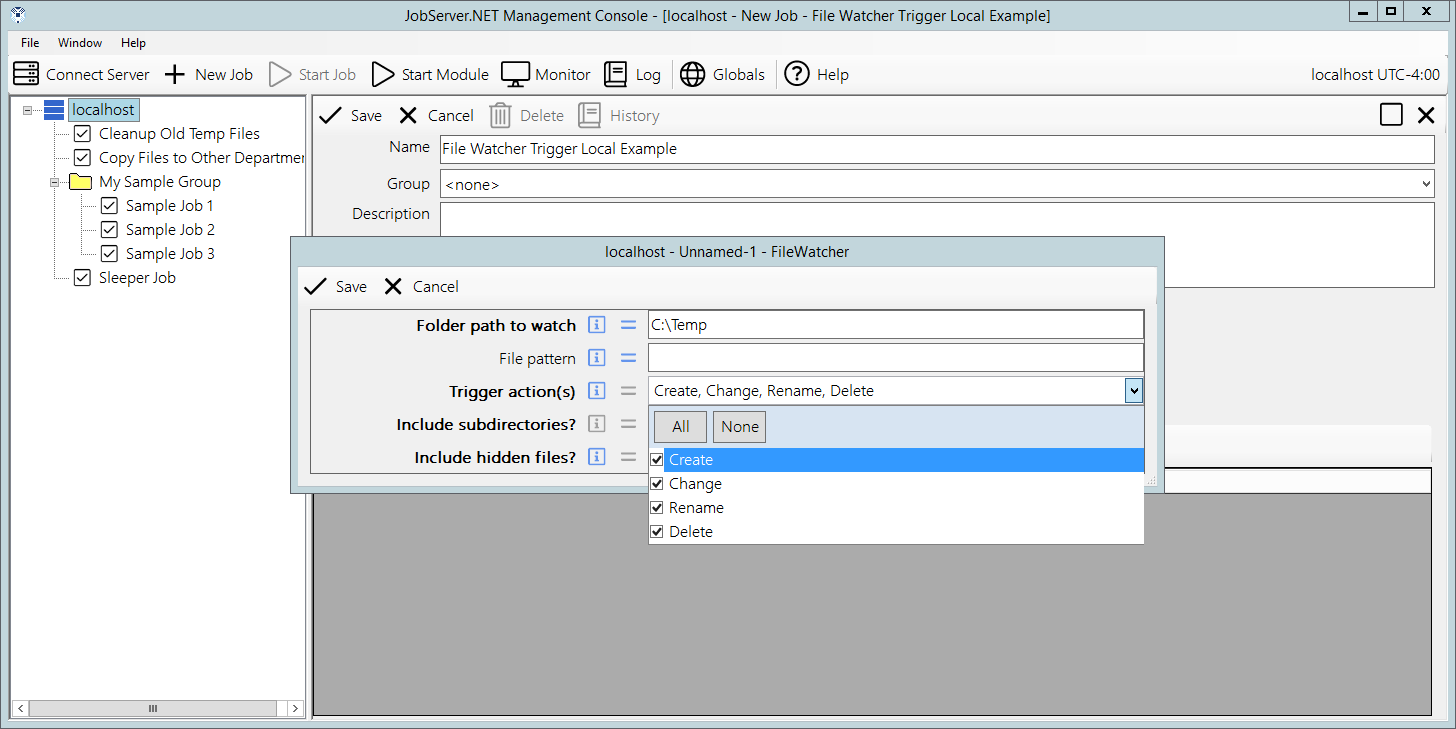

After the scheduler trigger, this is the next most popular trigger: it allows you to set up specific drives, folders, and network paths to be monitored for the existence of new or changed files. This makes it possible to set up a job that responds to files being dropped into a certain location on your network or on a local drive folder.

The first parameter is the Folder Path To Watch. This should be a complete local path, such as C:\MyFiles, or a network UNC path, such as \\MyServer\ShareName\PathToFiles\PickupFolder. Again, remember that JobServer.NET must have network permissions to any UNC paths.

The next parameter is the File Pattern. If it is not specified, it is equivalent to *.*, matching all files in the specified path. The file pattern must strictly conform to the simple wildcard syntax used on the Windows command line, which supports only the question mark and asterisk as wildcard characters (i.e., it is not a regular expression). Therefore, to look only for CSV files in a specified folder, you may use a pattern like *.csv.
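To illustrate how the ? and * wildcards differ from a regular expression, this Python sketch translates a File Pattern into an anchored, case-insensitive regular expression. It is a simplified approximation of Windows wildcard matching under stated assumptions: ? matches exactly one character, an empty pattern and *.* both match every file, and all other characters, including brackets, are literal. Python's fnmatch module is deliberately not used because it also treats [ ] as a character class. The function names are hypothetical.

```python
import re


def wildcard_to_regex(pattern):
    """Translate a ? / * wildcard pattern into a compiled regular expression."""
    if pattern in ("", "*.*"):  # both treated as "all files"
        return re.compile(r".*", re.DOTALL)
    parts = []
    for ch in pattern:
        if ch == "*":
            parts.append(".*")   # any run of characters, including none
        elif ch == "?":
            parts.append(".")    # exactly one character (simplification)
        else:
            parts.append(re.escape(ch))  # everything else is literal
    # Windows file names are case-insensitive by default
    return re.compile("".join(parts), re.IGNORECASE)


def matches(filename, pattern):
    """True if the whole file name matches the wildcard pattern."""
    return wildcard_to_regex(pattern).fullmatch(filename) is not None


print(matches("Orders.CSV", "*.csv"))
print(matches("report10.txt", "report?.txt"))
```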

The Trigger Actions parameter provides some control over the files that are picked up by the file-watcher. For most people, simply selecting the All option is enough, and the file-watcher will report back all the files it finds in the path. For a finer degree of control, the additional trigger actions Create, Change, Rename and Delete can be used to respond when those specific events happen to files in the path.

The Include Subfolders parameter extends the watch to any subfolders located in the path. It defaults to unchecked, meaning only files found directly within the specified topmost folder are detected. When the subfolders option is enabled, subfolders at any depth are included while watching for eligible files. The last parameter, Include Hidden Files, can be enabled (checked) if you want the file-watcher to pick up files that have the hidden attribute set. Normally the file-watcher ignores any hidden files in the path, but this option allows you to override that behavior.

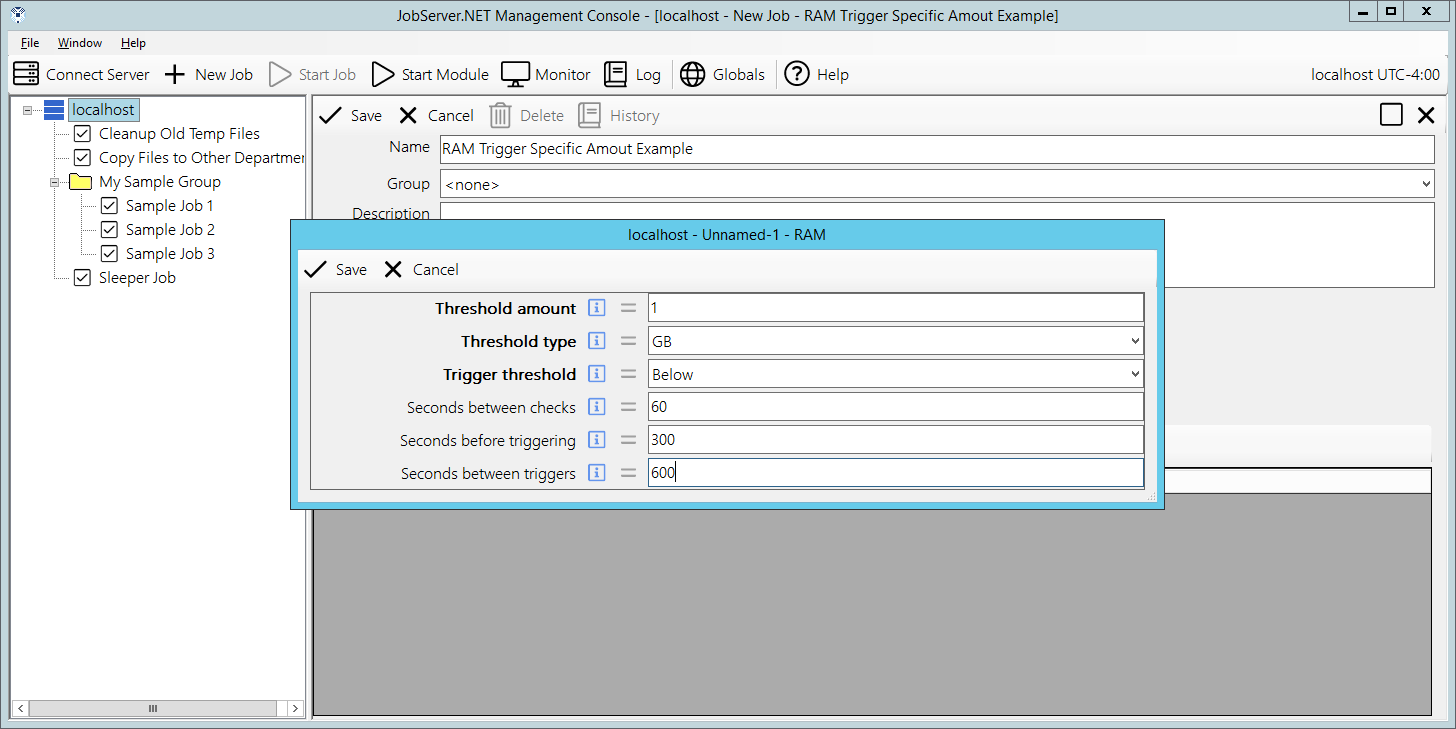

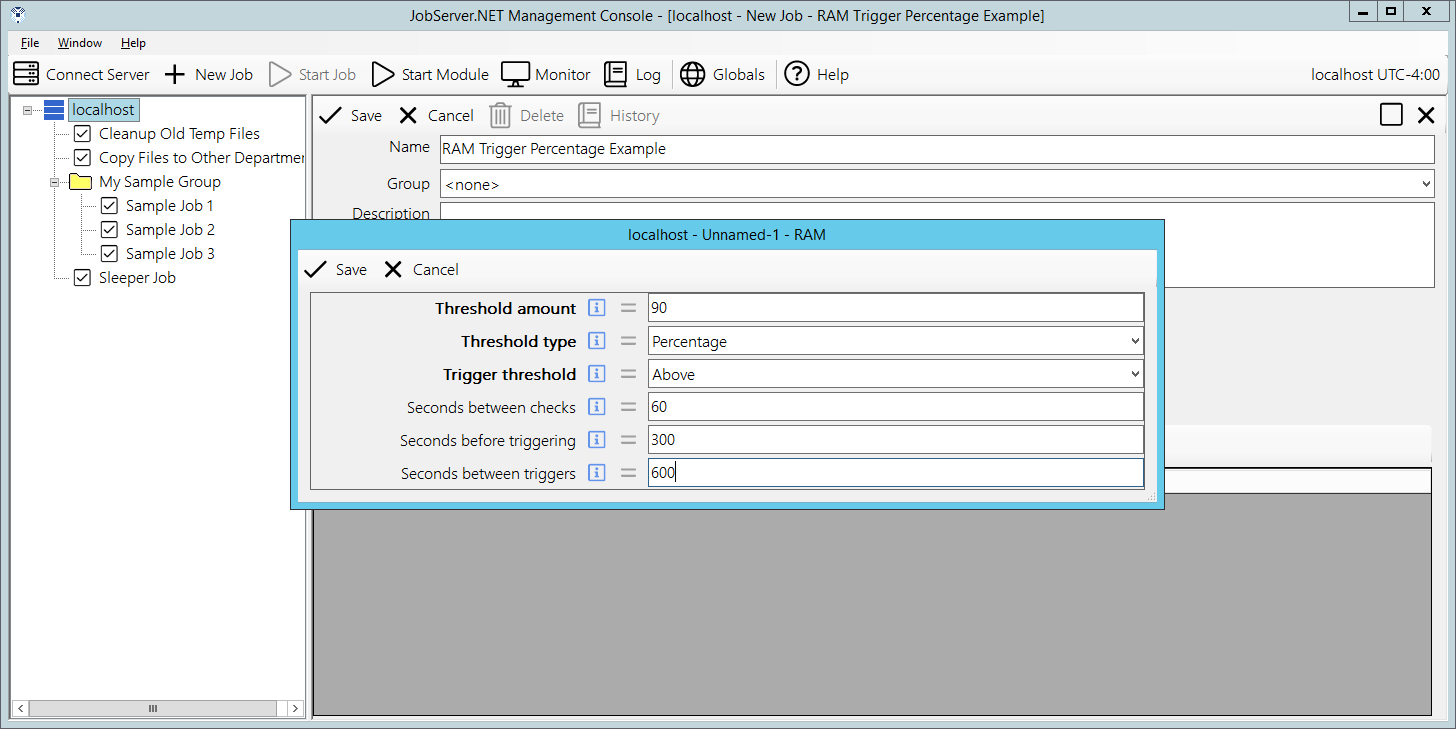

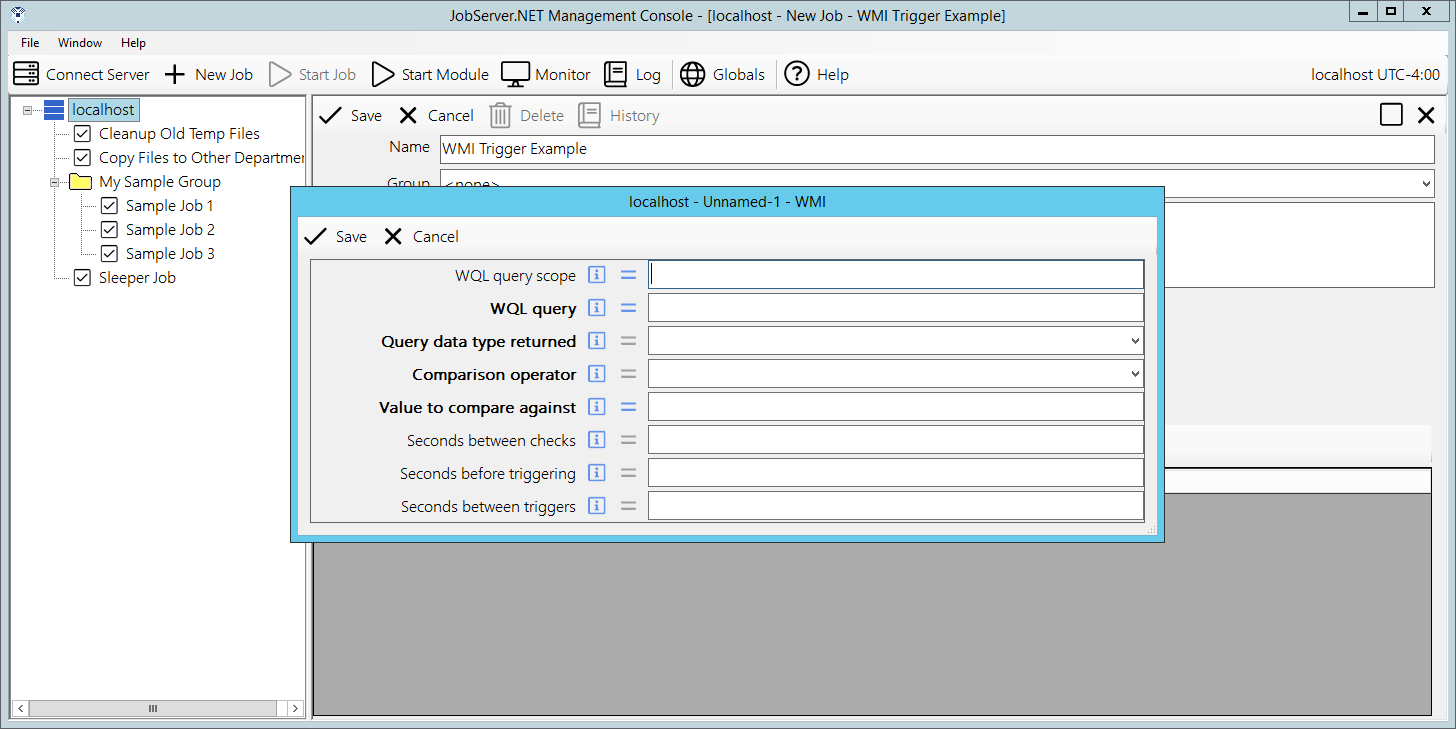

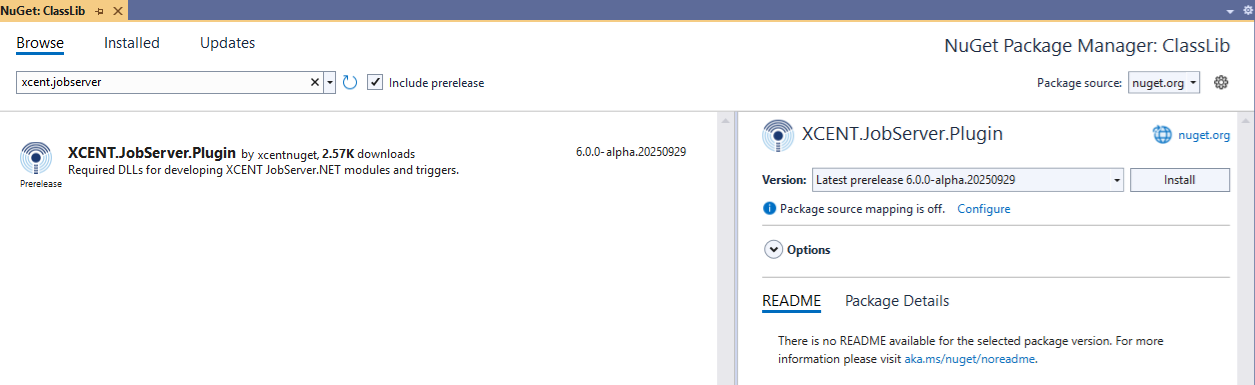

The trigger also defines an output parameter named FileList. Each time the file-watcher detects files matching the input parameters, FileList contains a list of one or more of those files. Each entry is always a fully qualified path to the file, and multiple files are formatted as one entry per line.
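If you pass the FileList value to your own script, splitting it into individual paths is straightforward. This Python sketch assumes entries are separated by either CRLF or LF line endings (splitlines handles both) and skips blank lines; parse_file_list and the sample value are hypothetical. It uses ntpath so that Windows-style paths, including UNC paths, are handled the same way on any operating system.

```python
import ntpath


def parse_file_list(file_list):
    """Split a FileList value (one fully qualified path per line) into paths."""
    return [line.strip() for line in file_list.splitlines() if line.strip()]


# Hypothetical FileList value with two entries, CRLF-separated
value = ("C:\\MyFiles\\orders.csv\r\n"
         "\\\\MyServer\\ShareName\\PathToFiles\\PickupFolder\\invoice.csv\r\n")
paths = parse_file_list(value)
names = [ntpath.basename(p) for p in paths]  # file names without their folders
print(names)
```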